Happy Sunday! We just had another crazy week in AI. OpenAI launched GPT-5.4 with native computer control, a new open-source tool claims it can remove refusal behavior from AI models in under an hour, and Luma introduced creative agents that can run entire multimedia workflows.

And that's not all. Here are the most important AI moves you need to know this week.

OpenAI’s latest model combines stronger reasoning, coding, and professional productivity skills with native computer use, allowing AI to interact with software and perform multi-step tasks across your device.

Native computer use lets the model issue keyboard and mouse commands and operate apps from screenshots

Improved reasoning helps it search across multiple sources and synthesize answers more reliably

33% fewer false claims compared to GPT-5.2, making it OpenAI’s most factual model yet

New GPT-5.4 Thinking mode in ChatGPT outlines its reasoning and allows users to adjust requests mid-response

Try it now → https://chatgpt.com/

A new open-source tool called Heretic claims it can remove refusal behavior from large language models in about 45 minutes. Instead of relying on jailbreak prompts or other prompt-engineering tricks, the tool modifies the model itself so it no longer declines prompts.

A single command automatically removes the model's refusal mechanisms

Designed to preserve the model’s core intelligence and reasoning abilities

Works with multiple open-source models including Llama, Qwen, and Gemma

Runs locally on consumer hardware with zero configuration required

Try it now → https://github.com/p-e-w/heretic

AI video startup Luma introduced Luma Agents, a new system designed to handle end-to-end creative workflows across text, images, video, and audio. The agents are powered by Luma’s new Unified Intelligence models, which use a single multimodal reasoning architecture.

Built on Luma’s Uni-1 model trained across video, image, audio, language, and spatial reasoning

Can coordinate with external AI models like Veo, Seedream, and ElevenLabs for generation tasks

Maintains persistent context across assets, collaborators, and creative iterations

Uses self-critique loops to evaluate and refine outputs automatically

Try it now → https://lumalabs.ai/

Google DeepMind released a preview of Gemini 3.1 Flash-Lite, raising its Intelligence Index score by 12 points while keeping fast generation speeds and a 1M-token context window.

Scores 34 on the Intelligence Index (+12 vs Gemini 2.5 Flash-Lite) while generating 360+ tokens/sec

Beats top-tier models on multimodal benchmarks (78% on MMMU-Pro)

Delivers 2.5× faster time to first token and 45% higher output speed than Gemini 2.5 Flash

Pricing rises to $0.25/M input tokens and $1.50/M output tokens, up from $0.10 and $0.40 for Gemini 2.5 Flash-Lite

Try it now → https://gemini.google.com/app
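To put the Flash-Lite preview prices in concrete terms, here is a quick cost estimate. It is a minimal sketch that assumes flat per-token pricing at the quoted list rates, with no tiering, caching discounts, or other fees. The function name `estimate_cost` is just for illustration and is not part of any Google API.

```python
# Quoted Gemini 3.1 Flash-Lite preview list prices, in USD per million tokens.
# Assumption: flat pricing, with no tiers, caching discounts, or extra fees.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the quoted list prices."""
    return (
        input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    ) / 1_000_000


# Example: summarizing a 100k-token document into a 2k-token answer.
print(f"${estimate_cost(100_000, 2_000):.4f}")  # prints "$0.0280"
```

Output tokens cost 6× as much as input tokens here, so answer length tends to dominate the bill for long generations.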

Google is upgrading NotebookLM’s video features with Cinematic Video Overviews, a new system that transforms your research materials into fully structured, animated videos with AI acting as a creative director.

Uses a combination of Gemini 3, Nano Banana Pro, and Veo 3 to generate animations and rich visuals

AI acts as a “creative director,” deciding narrative structure, visuals, and storytelling style

Creates immersive videos directly from your uploaded sources and research notes

Rolling out now in English for Google AI Ultra users on web and mobile

Try it now → https://notebooklm.google/

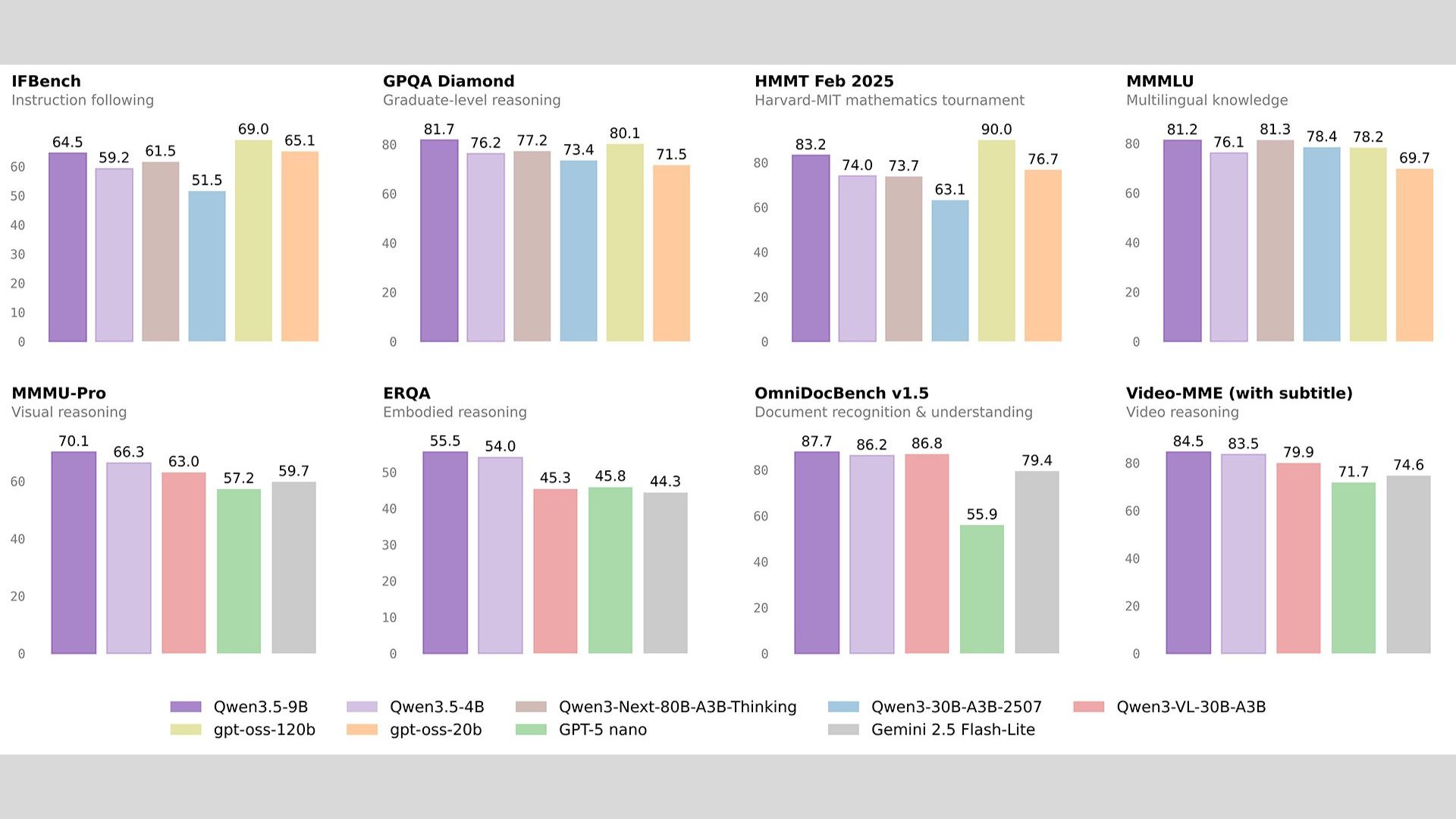

Alibaba’s Qwen team released Qwen 3.5 Small, designed around “More Intelligence, Less Compute.” Instead of scaling to massive parameter counts, the lineup focuses on efficient reasoning, native multimodality, and edge deployment.

Models range from 0.8B to 9B parameters, optimized for mobile, IoT, and local-first apps

4B model features native multimodal architecture (text + vision in one latent space)

9B model uses Scaled Reinforcement Learning to boost reasoning and reduce hallucinations

Available now on Hugging Face and ModelScope (Base + Instruct versions)

Try it now → https://huggingface.co/collections/Qwen/qwen35

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas