Happy Sunday! We just had another crazy week in AI. China just dropped a model billed as Claude Opus 4.5-level that runs locally, while a new open-source tool lets you spin up an AI agency staffed by AI employees.

And that's not all. Here are the most important AI moves you need to know this week.

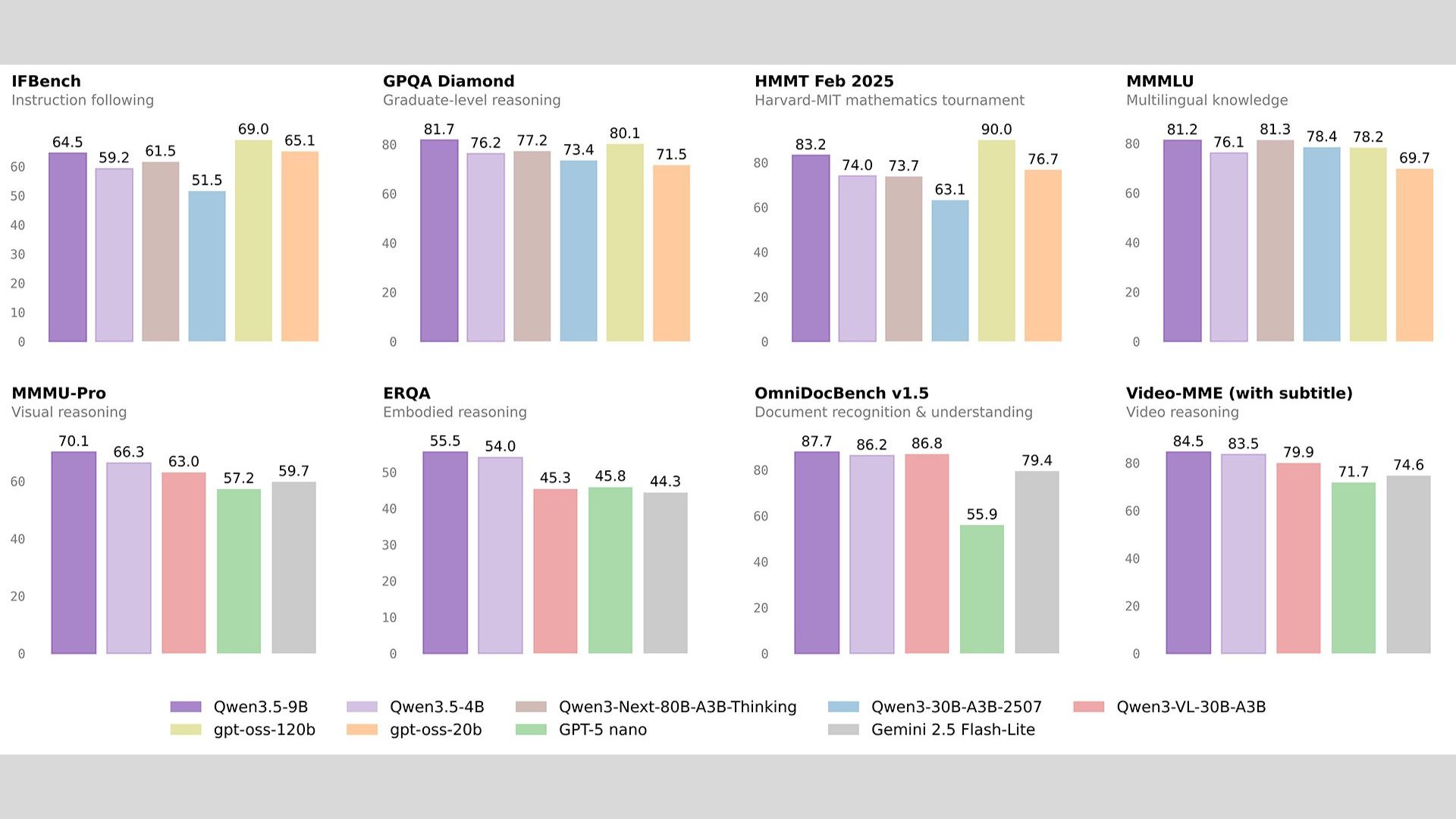

Alibaba’s Qwen team released Qwen 3.5 Small, designed around “More Intelligence, Less Compute.” Instead of scaling to massive parameter counts, the lineup focuses on efficient reasoning, native multimodality, and edge deployment.

Models range from 0.8B to 9B parameters, optimized for mobile, IoT, and local-first apps

The 4B model features a native multimodal architecture (text and vision share one latent space)

The 9B model uses scaled reinforcement learning to boost reasoning and reduce hallucinations

Available now on Hugging Face and ModelScope (Base + Instruct versions)

Try it now → huggingface.co/collections/Qwen/qwen35

Agency Agents is a viral new open-source framework that shot past 10,000 GitHub stars in under seven days. Instead of relying on one monolithic AI to handle your entire codebase, this project structures your AI like an actual company.

Replaces the single-agent approach with a corporate structure: specialized agents with clear responsibilities coordinate and ship ideas together.

Drops directly into Claude Code, Cursor, or other AI coding tools, so you can put its 120+ AI employees to work right away.

Covers the core tech and growth departments: Engineering (frontend/backend/AI), Design, Marketing, Product, and Project Management.

Handles the rest of the business too: Testing, Support, Spatial Computing (XR/Vision Pro), and specialized multi-agent orchestration.

Try it now → github.com/msitarzewski/agency-agents/

Perplexity unveiled Perplexity Computer, a general-purpose AI agent that can create and execute full workflows across tools, files, the web, and code, running for hours or even months with minimal input.

Works like a digital employee: operates browsers, tools, files, coding environments, and research workflows

Orchestrates 19 AI models (including models from OpenAI, Google, and others), assigning each task to the best model for the job

Lets users specify an outcome, then auto-splits work into agents and sub-agents that run in parallel

Launches web-only for Max users ($200/month) with per-token billing and bonus launch tokens
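Perplexity hasn't published how Computer's orchestrator works internally, so here is a minimal sketch, under stated assumptions, of the general pattern described above: split an outcome into subtasks, route each subtask to a model by task type, and run them in parallel. The planner, the routing table, and the model names (`model-a`, `model-b`, `model-c`) are all hypothetical, and `call_model` is a stand-in for a real API call.

```python
import asyncio
from dataclasses import dataclass

# Hypothetical routing table: task type -> model name (placeholders, not Perplexity's lineup).
ROUTES = {
    "research": "model-a",
    "code": "model-b",
    "writing": "model-c",
}


@dataclass
class Subtask:
    kind: str
    prompt: str


def split_outcome(outcome: str) -> list[Subtask]:
    # Hypothetical planner: a real system would ask an LLM to decompose the goal.
    return [
        Subtask("research", f"Gather sources for: {outcome}"),
        Subtask("code", f"Write analysis script for: {outcome}"),
        Subtask("writing", f"Draft report on: {outcome}"),
    ]


async def call_model(model: str, prompt: str) -> str:
    # Stand-in for a model API call; sleeps briefly to simulate network latency.
    await asyncio.sleep(0.01)
    return f"[{model}] {prompt}"


async def run(outcome: str) -> list[str]:
    subtasks = split_outcome(outcome)
    # Each subtask goes to the model assigned to its type; all run concurrently.
    # asyncio.gather returns results in the same order as the subtasks.
    return await asyncio.gather(
        *(call_model(ROUTES[t.kind], t.prompt) for t in subtasks)
    )


if __name__ == "__main__":
    for line in asyncio.run(run("compare EV battery suppliers")):
        print(line)
```

Because the subtasks are independent, total wall-clock time is roughly that of the slowest subtask rather than the sum of all of them, which is the point of running sub-agents in parallel.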

Try it now → https://www.perplexity.ai/

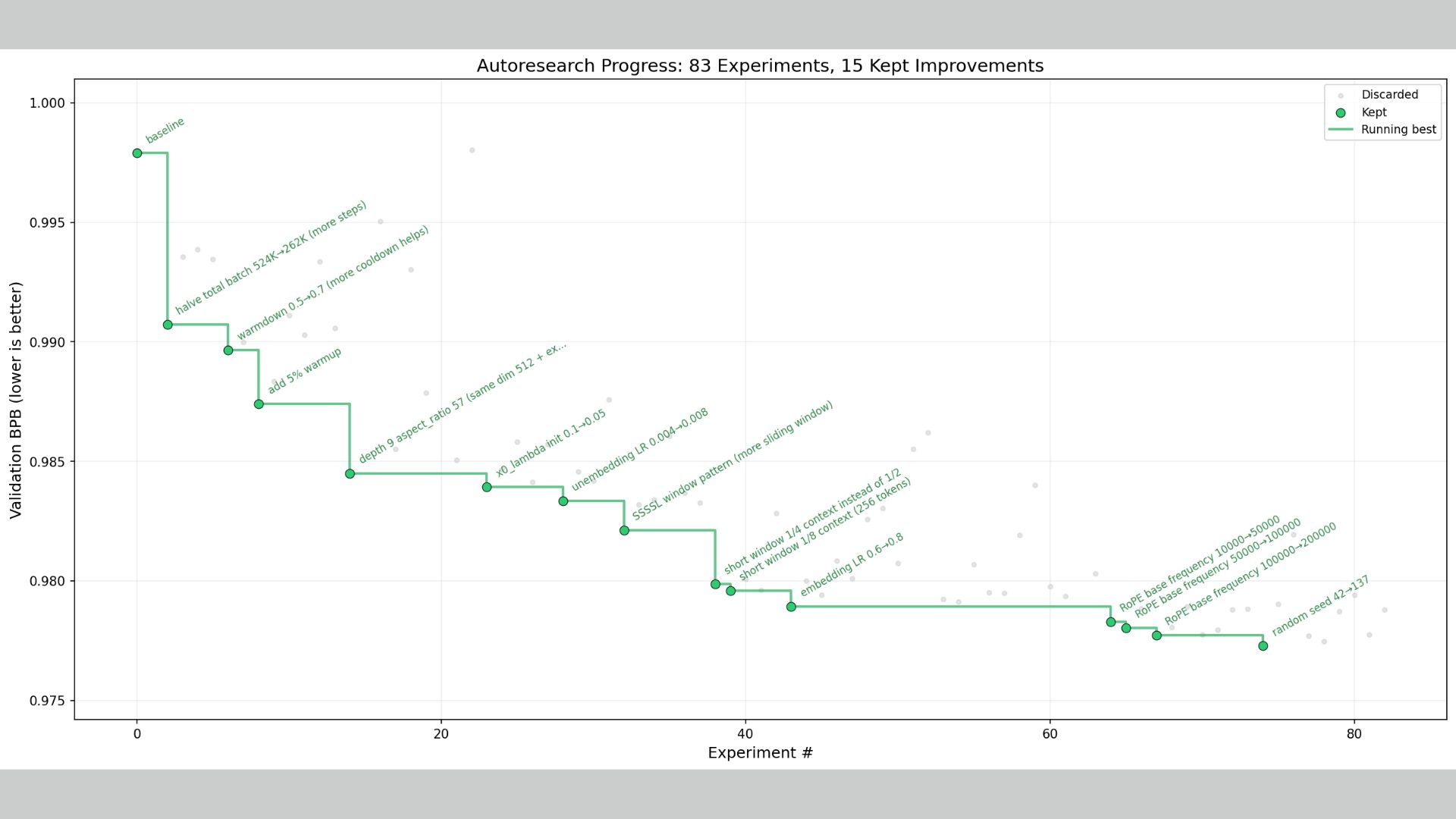

Andrej Karpathy just dropped "autoresearch," a free, open-source system that automates the experiment loop of AI research. It lets anyone with a single GPU run autonomous model-training experiments, work that has mostly been the domain of giant, well-funded labs.

The agent runs an autonomous loop: it modifies the model's code, trains it for exactly 5 minutes, checks if the validation score improved, and repeats.

It can execute ~100 experiments overnight. In tests, it hit an 18% success rate at finding better setups, roughly matching the hit rate of a human ML researcher.

The entire repo is incredibly minimal (just three files) with no complex configs. The human iterates on the text prompt, while the AI agent iterates entirely on the Python training code.
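The numbers above fit together: at 5 minutes per run, an 8-hour night (an assumed length) fits 8 × 60 / 5 = 96 runs, consistent with "~100 experiments overnight," and an 18% hit rate on 96 runs works out to about 17 kept improvements. The loop itself can be sketched as below. This is not Karpathy's code: a real agent is an LLM rewriting the training script, whereas here the hypothetical `propose` function only rescales a learning rate, and a fixed step count stands in for the fixed 5-minute wall-clock budget.

```python
import math
import random


def train_and_eval(lr: float, steps: int = 50) -> float:
    """Fit w in y = 3x by SGD for a fixed step budget; return validation MSE."""
    rng = random.Random(0)  # same data every run, so scores are comparable
    train = [(x, 3 * x) for x in (rng.uniform(-1, 1) for _ in range(32))]
    val = [(x, 3 * x) for x in (rng.uniform(-1, 1) for _ in range(16))]
    w = 0.0
    for i in range(steps):  # fixed budget: every experiment gets the same compute
        x, y = train[i % len(train)]
        w -= lr * 2 * (w * x - y) * x
        if not math.isfinite(w):
            return float("inf")  # diverged: treat as the worst possible score
    errs = [w * x - y for x, y in val]
    return sum(e * e for e in errs) / len(errs)


def propose(lr: float, rng: random.Random) -> float:
    # Hypothetical "agent edit": a real agent rewrites code; here we rescale lr.
    return lr * rng.choice([0.5, 0.8, 1.25, 2.0])


def research_loop(n_experiments: int = 100, seed: int = 1) -> tuple[float, float, int]:
    rng = random.Random(seed)
    best_lr = 0.001
    best_score = train_and_eval(best_lr)
    wins = 0
    for _ in range(n_experiments):
        candidate = propose(best_lr, rng)
        score = train_and_eval(candidate)
        if score < best_score:  # keep the edit only if validation improved
            best_lr, best_score = candidate, score
            wins += 1
        # otherwise the edit is discarded and the next one starts from the best version
    return best_lr, best_score, wins


if __name__ == "__main__":
    print(research_loop())
```

Fixing the training budget is what makes the comparison fair: every candidate gets the same compute, so a lower validation score reflects a better setup rather than a longer run.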

Try it now → github.com/karpathy/autoresearch

Nvidia continues to step up its open-source game. The company debuted Nemotron 3 Super, a 120-billion-parameter open model built to be faster, more efficient, and more accurate at complex agentic reasoning than its predecessor.

Features a hybrid mixture-of-experts architecture and a massive 1-million-token context window

Outranked several models from OpenAI, Amazon, and Google on benchmarks, and ran up to 2.2x faster than OpenAI's GPT-OSS on reasoning workloads

Goes beyond just "open weights" by open-sourcing the entire methodology: pre- and post-training datasets, training environments, and evaluation recipes

Early-access partner CrowdStrike found the model was 3x more accurate than its previous production model at threat hunting
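For readers new to mixture-of-experts, here is a minimal sketch of generic top-k expert routing, one common MoE variant. It is not Nvidia's implementation: the "hybrid" part of Nemotron 3 Super's design is not modeled, and the toy experts and gate vectors are hypothetical. A gate scores every expert for a token, only the top-k experts actually run, and their outputs are mixed using softmax weights renormalized over the chosen k.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def moe_forward(x, experts, gate_weights, k=2):
    """Route a token vector x to its top-k experts and mix their outputs."""
    # Gate score per expert: dot product of x with that expert's gate vector.
    logits = [sum(xi * wi for xi, wi in zip(x, w)) for w in gate_weights]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Renormalize over the chosen experts only; the others are never evaluated.
    probs = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for p, i in zip(probs, top):
        y = experts[i](x)
        out = [o + p * yi for o, yi in zip(out, y)]
    return out, top


# Hypothetical toy experts: each just scales its input by a constant.
EXPERTS = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
GATE_WEIGHTS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]

if __name__ == "__main__":
    print(moe_forward([1.0, 0.5], EXPERTS, GATE_WEIGHTS))
```

Only k experts run per token, so per-token compute scales with k rather than with the total number of experts. That is how MoE models can carry a large total parameter count while staying relatively cheap to run.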

Learn More → blogs.nvidia.com/blog/nemotron-3-super-agentic-ai/

The rise of "vibe coding" has completely changed how developers work, but it's created a massive new problem: humans simply can't review the sheer flood of AI-generated code fast enough. To fix this bottleneck, Anthropic just launched "Code Review" inside Claude Code.

Instead of nagging engineers about code style, this multi-agent system focuses on hunting down critical logic errors before they merge into the codebase.

It automatically analyzes pull requests and explains what each issue is, why it's a problem, and how to fix it, with color-coded severity rankings.

The tool uses multiple AI agents working in parallel to examine the code from different dimensions, with a final agent aggregating and prioritizing the most urgent fixes.

It’s a premium, token-based feature estimated to cost $15 to $25 per review, targeted squarely at enterprise giants like Uber and Salesforce.
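The parallel-reviewers-plus-aggregator pattern described above can be sketched as follows. This is not Anthropic's implementation: the three string-matching reviewers are hypothetical stand-ins for LLM agents, and the severity labels are assumptions. Each reviewer inspects the same diff from its own angle in parallel, then an aggregation step merges, deduplicates, and sorts findings so the most urgent come first.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}  # hypothetical levels


@dataclass(frozen=True)  # frozen -> hashable, so duplicates collapse in a set
class Finding:
    line: int
    severity: str
    message: str


def null_check_reviewer(diff: list[str]) -> list[Finding]:
    # Toy heuristic: attribute access directly on a dict .get() result may hit None.
    return [Finding(i, "critical", "possible None dereference")
            for i, l in enumerate(diff, 1) if ".get(" in l and ")." in l]


def off_by_one_reviewer(diff: list[str]) -> list[Finding]:
    # Toy heuristic: range(len(x) + 1) usually overruns the sequence.
    return [Finding(i, "major", "loop bound likely overruns the sequence")
            for i, l in enumerate(diff, 1) if "len(" in l and "+ 1)" in l]


def todo_reviewer(diff: list[str]) -> list[Finding]:
    return [Finding(i, "minor", "unresolved TODO")
            for i, l in enumerate(diff, 1) if "TODO" in l]


REVIEWERS = [null_check_reviewer, off_by_one_reviewer, todo_reviewer]


def review(diff: list[str]) -> list[Finding]:
    # Each reviewer examines the same diff independently, in parallel.
    with ThreadPoolExecutor() as pool:
        batches = pool.map(lambda reviewer: reviewer(diff), REVIEWERS)
        findings = {f for batch in batches for f in batch}  # aggregate + dedupe
    # Prioritize: most severe first, then by line number.
    return sorted(findings, key=lambda f: (SEVERITY_RANK[f.severity], f.line))


SAMPLE_DIFF = [
    "for i in range(len(items) + 1):",
    "    name = users.get(uid).name",
    "    # TODO: handle empty items",
]

if __name__ == "__main__":
    for f in review(SAMPLE_DIFF):
        print(f"line {f.line} [{f.severity}] {f.message}")
```

Splitting the review across independent agents keeps each one narrowly focused, and the final sort is what turns a pile of findings into a prioritized list.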

Try it now → claude.ai

Thanks for making it to the end! I put my heart into every email I send, and I hope you're enjoying them. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas