Happy Sunday! We just had another crazy week in AI. There's a new open-source AI model that beats major models like ChatGPT and Claude, and another new AI agent that can use computers like a human.

And that's not all. Here are the most important AI moves you need to know this week.

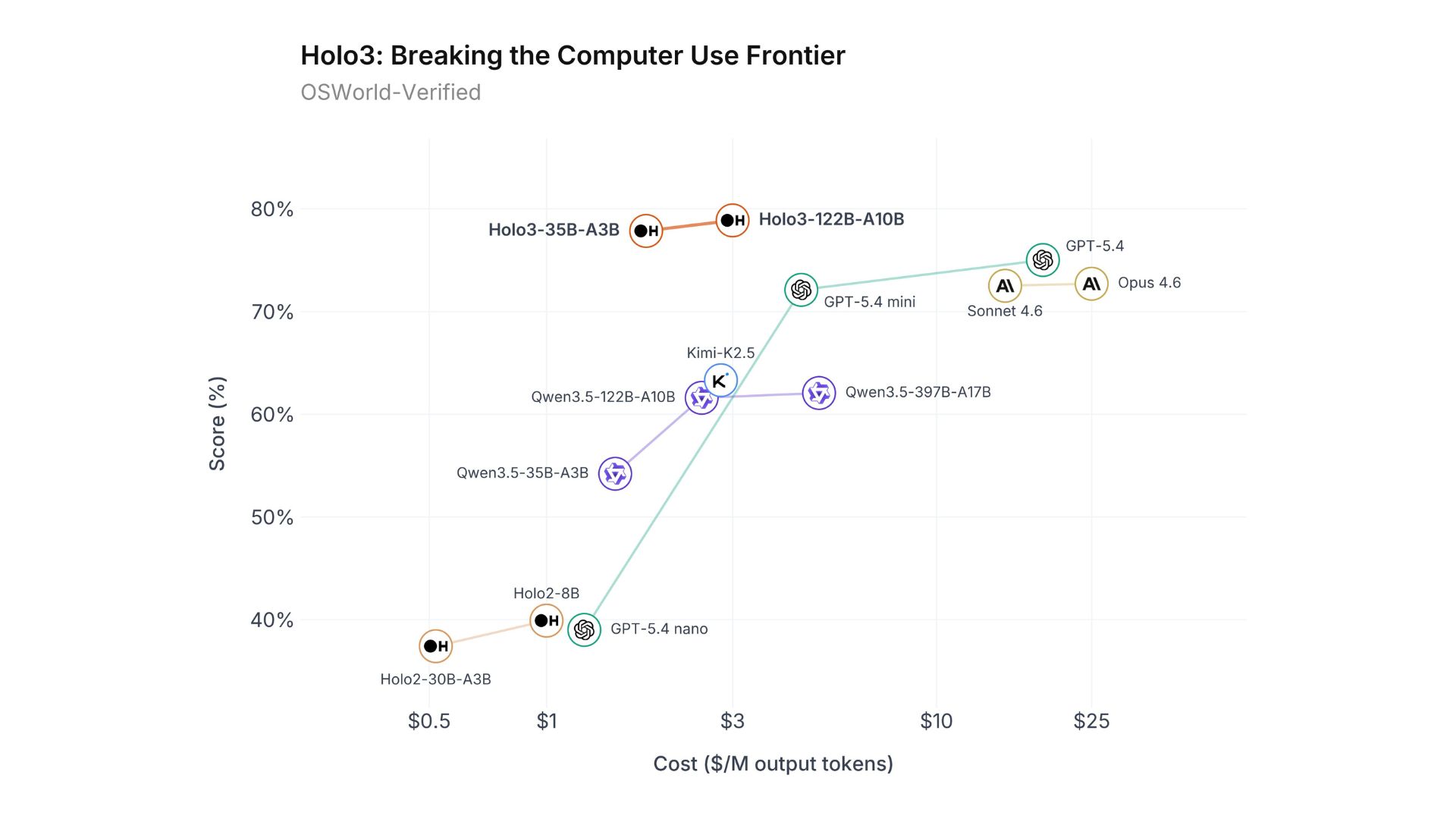

H Company has officially released the Holo3 series, setting new industry standards for GUI agents. Built specifically for computer-use automation across web, desktop, and mobile environments, the new vision-language model (VLM) family is shattering benchmarks at a radically lower price point.

The flagship Holo3-122B-A10B achieved an impressive 78.85% on the OSWorld-Verified benchmark, outperforming mainstream giants like GPT-5.4 and Opus 4.6.

It delivers this state-of-the-art performance at just one-tenth of the inference cost of its leading competitors.

Built on a Qwen3.5 sparse Mixture-of-Experts (MoE) architecture, the model is highly efficient: the 122B version activates only 10B parameters per token during inference, while the 35B version activates just 3B.
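To put those numbers in perspective, here is a quick back-of-the-envelope calculation of what share of each model's weights is used per token. The parameter counts are taken straight from the model names ("122B-A10B", "35B-A3B"); the `active_fraction` helper is just an illustrative name. As a rough rule of thumb, per-token compute in a sparse MoE tracks the active count rather than the total, although attention, routing, and memory costs mean this is only an approximation.

```python
# Share of weights active per token for the two Holo3 sizes.
# Counts are in billions, read from the model names (e.g. "122B-A10B").
models = {
    "Holo3-122B-A10B": (122, 10),
    "Holo3-35B-A3B": (35, 3),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Fraction of a sparse MoE model's parameters used for each token."""
    return active_b / total_b


for name, (total, active) in models.items():
    share = active_fraction(total, active)
    print(f"{name}: {active}B of {total}B active ({share:.1%})")
```

Both models touch fewer than 9% of their weights per token (about 8.2% and 8.6%), which is the main reason an MoE with a large total size can be served far more cheaply than a dense model of the same size.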

A fully open-source, lightweight version (Holo3-35B-A3B) is already available on Hugging Face under an Apache 2.0 license for free-tier users and local deployment.

Try it now → https://hcompany.ai/holo3

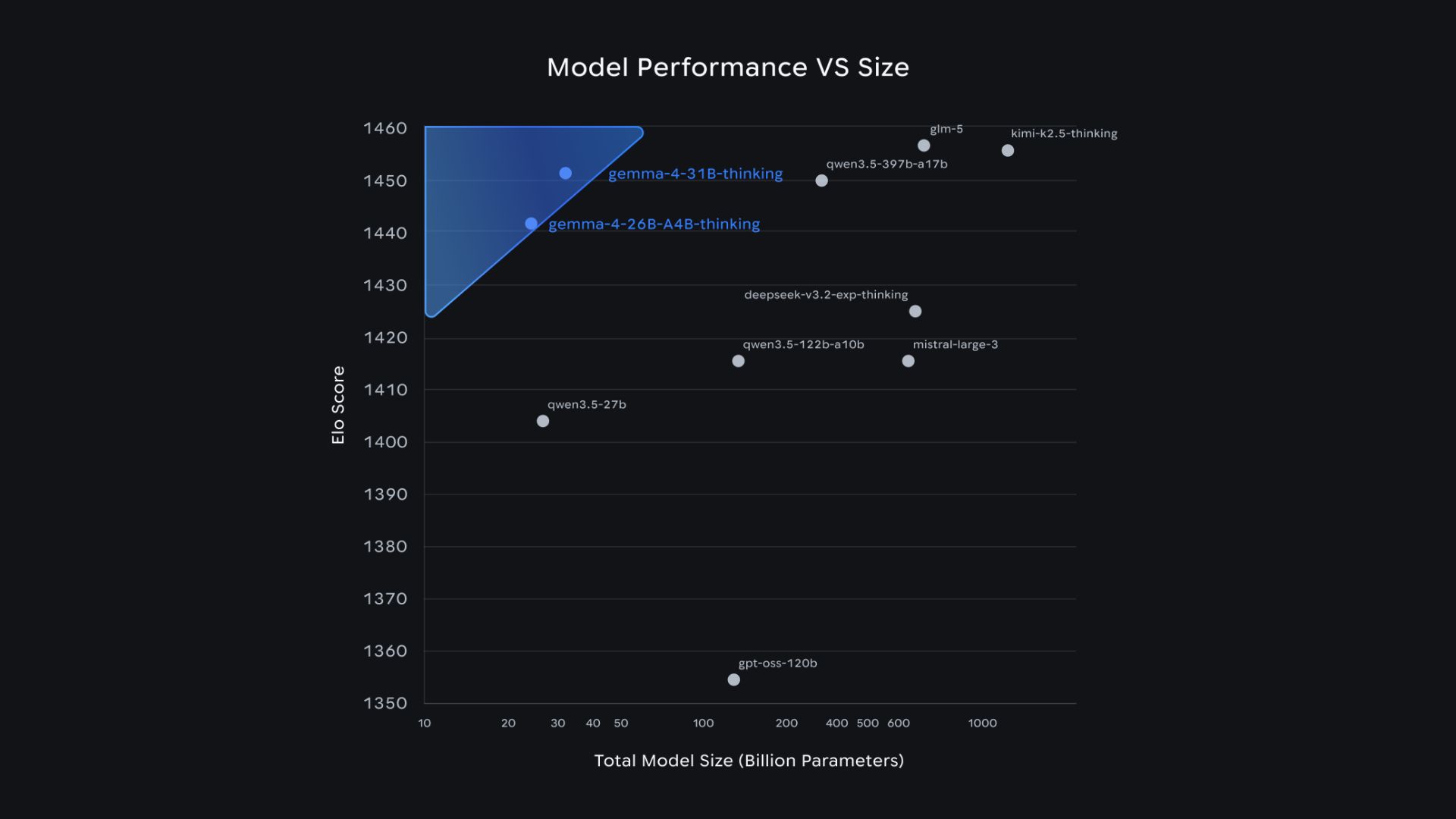

Google has officially released the Gemma 4 family, built on the same technology that powers the proprietary Gemini 3. But the biggest news isn't the performance: Google has moved from its earlier restrictive license to the commercially permissive Apache 2.0 license, giving developers full control over their data and infrastructure.

The lineup includes four model sizes: Effective 2B (E2B) and 4B (E4B) for mobile and IoT devices, alongside a highly efficient 26B Mixture-of-Experts (MoE) and a 31B Dense model for workstations.

All models support agentic workflows out of the box, with built-in function calling, structured JSON output, and system instructions.

The smaller edge models (E2B and E4B) are multimodal powerhouses, capable of natively processing image, video, and audio inputs locally on smartphones, Raspberry Pis, or Jetson Orin Nanos.

Despite its compact footprint, the 31B model currently ranks #3 worldwide among open models on the Arena AI Leaderboard, while the 26B MoE ranks #6, outperforming models up to 20 times their size.

Try it now → https://aistudio.google.com/

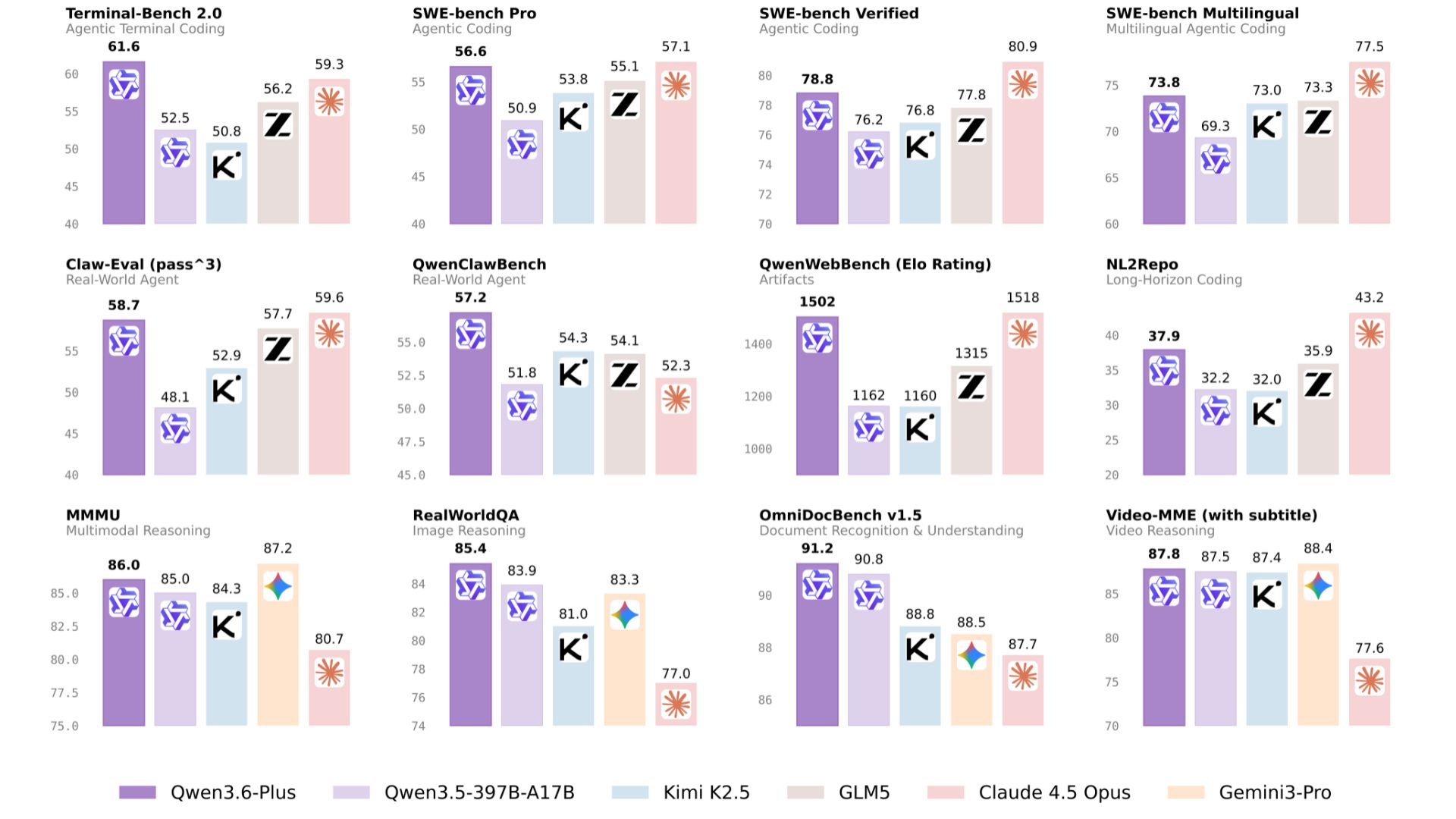

Alibaba has released Qwen3.6-Plus, a proprietary AI model with a massive one-million-token context window. Integrated into the company's new "Wukong" enterprise AI service, the model is laser-focused on agentic coding and complex frontend development tasks.

Qwen3.6-Plus outperforms the older Qwen3.5 model and even beats Anthropic's Claude Opus 4.5 on some of Alibaba's internal benchmarks.

The model will be available via the Alibaba Cloud Model Studio API and integrated directly into the Qwen chatbot app.

This release marks a major strategic shift for Alibaba: moving away from open-source models (like earlier Qwen versions) and toward monetized, proprietary enterprise solutions.

The pivot comes as Alibaba's cloud division faces intense competition from ByteDance, with Alibaba targeting $100 billion in AI revenue over the next five years.

Try it now → https://chat.qwen.ai/

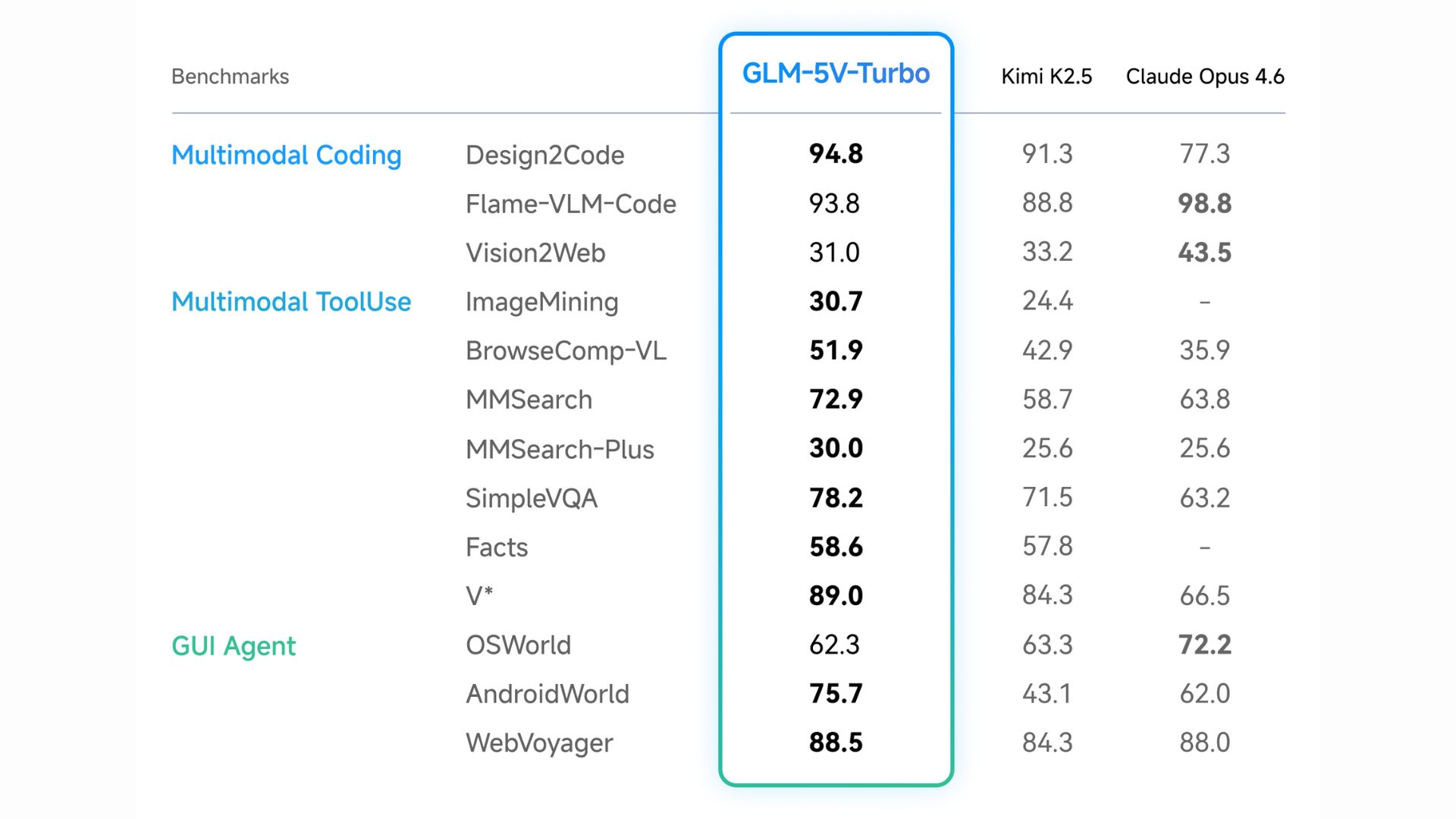

In the world of vision-language models, getting an AI to accurately "see" a user interface and simultaneously output complex software engineering code has been a massive challenge. Zhipu AI (Z.ai) aims to fix that with GLM-5V-Turbo, a native multimodal coding model built specifically to power high-capacity agentic workflows.

Native Multimodal Fusion: Unlike older systems that separate vision and language, this model natively processes images, video, design drafts, and complex document layouts as first-class inputs, without first converting them to text.

Agentic Optimization: GLM-5V-Turbo is optimized for agent frameworks like OpenClaw and Claude Code, mastering the "perceive → plan → execute" loop for autonomous environment interaction.

30+ Task Joint Reinforcement Learning: The model was trained jointly on more than 30 task domains to avoid the "see-saw" effect, so that gains in visual recognition don't come at the expense of STEM reasoning and programming logic.

Try it now → https://Z.ai

Following OpenAI's sudden exit from the AI video race last week, Google announced its unwavering commitment to the medium by launching Veo 3.1 Lite, its most cost-effective video generation model to date.

Designed for high-volume applications, Veo 3.1 Lite slots beneath Veo 3.1 Fast, offering the same generation speed at less than half the cost.

The model supports both Text-to-Video and Image-to-Video workflows in 720p and 1080p resolutions, covering both landscape (16:9) and portrait (9:16) aspect ratios.

Developers can now tightly control their spend by setting video duration to 4, 6, or 8 seconds, with pricing that scales with length.

The new model is rolling out today on the Gemini API and Google AI Studio, alongside news that the higher-tier Veo 3.1 Fast model will receive a price cut on April 7.

Try it now → https://gemini.google.com/app

Perplexity has introduced Model Council, a new multi-model research feature exclusively for its Max subscribers. Instead of relying on a single AI's output, Model Council runs your query across three different frontier models simultaneously and uses a fourth to synthesize the results.

Users can select three top-tier models (like Claude Opus 4.6, GPT-5.2, and Gemini 3 Pro) to answer the same prompt independently and in parallel.

A fourth "synthesizer" or "chair" model reviews all three outputs and produces a single consolidated answer.

The final output explicitly highlights where the models agree, where they diverge, and what unique insights or blind spots each individual model had.
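The fan-out-then-synthesize pattern behind Model Council can be sketched in a few lines of Python. This is not Perplexity's implementation or API: `model_a`, `model_b`, `model_c`, `ask_council`, and `synthesize` are hypothetical names, the three "models" are stubs returning canned answers, and the "chair" is reduced to a simple majority vote, whereas the feature described above uses a fourth LLM to write the consolidated answer.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# Hypothetical stand-ins for three frontier models. A real system would
# call each provider's API here; these stubs just return canned answers.
Model = Callable[[str], str]


def model_a(prompt: str) -> str:
    return "Option B"


def model_b(prompt: str) -> str:
    return "Option B"


def model_c(prompt: str) -> str:
    return "Option A"


def ask_council(prompt: str, council: Dict[str, Model]) -> Dict[str, str]:
    """Send the same prompt to every council member in parallel."""
    with ThreadPoolExecutor(max_workers=len(council)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in council.items()}
        return {name: f.result() for name, f in futures.items()}


def synthesize(answers: Dict[str, str]) -> Dict[str, object]:
    """Toy 'chair': report the majority answer and which members diverged.

    A real synthesizer would be a fourth LLM reading all three outputs;
    this stub only compares answers for exact equality.
    """
    counts = Counter(answers.values())
    consensus, votes = counts.most_common(1)[0]
    dissent = {name: ans for name, ans in answers.items() if ans != consensus}
    return {"consensus": consensus, "votes": votes, "dissent": dissent}


council = {"model_a": model_a, "model_b": model_b, "model_c": model_c}
answers = ask_council("Which option carries less risk?", council)
print(synthesize(answers))
# → {'consensus': 'Option B', 'votes': 2, 'dissent': {'model_c': 'Option A'}}
```

Keeping the three calls independent (none sees another's answer) is what lets the synthesis step surface genuine disagreement rather than models echoing each other.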

It is designed specifically for high-stakes tasks like investment research, coding architecture, and strategic decision-making where bias, hallucinations, or missing context could be costly.

Try it now → https://www.perplexity.ai/

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas