Happy Sunday! We just had another crazy week in AI. Z.ai just dropped the world’s most advanced open-source AI model, while a new free, open-source tool clones any voice from just a 3-second audio clip.

And that's not all. Here are the most important AI moves you need to know this week.

Z.ai (Zhipu AI) just unveiled GLM-5.1, a 754-billion parameter Mixture-of-Experts model released under a permissive MIT License. While competitors are fiercely battling over raw speed, Z.ai optimized this model for endurance: it is designed to work completely autonomously for up to eight hours on a single task.

The model can execute a staggering 1,700 tool calls and steps in a single run without suffering from "strategy drift" or forgetting its original goal.

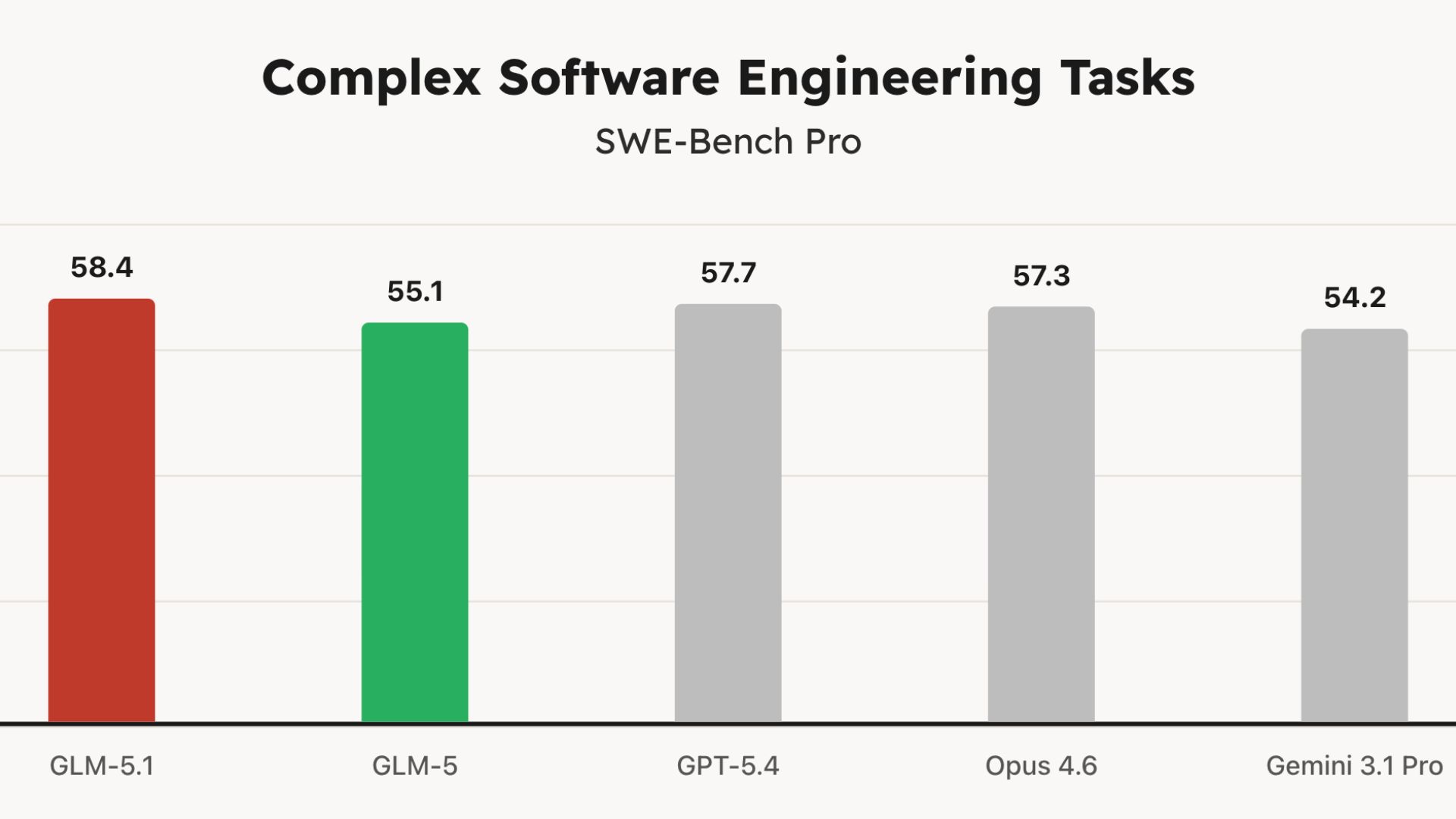

It officially crushed the SWE-Bench Pro coding benchmark with a score of 58.4, dethroning heavyweights like OpenAI's GPT-5.4 and Anthropic's Claude Opus 4.6.

In a wild 8-hour test, GLM-5.1 was tasked with building a Linux-style desktop environment from scratch. It autonomously coded a file browser, terminal, text editor, system monitor, and functional games, iteratively testing and polishing the UI until it was complete.

It functions as its own R&D department. The model can write code, compile it, run it in a live Docker container, analyze where it bottlenecked, and autonomously rewrite its own architecture to fix the problem.

Try it now → github.com/zai-org/GLM-5

Google has officially released the Gemma 4 family, built on the exact same technology that powers the proprietary Gemini 3. But the biggest news isn't just the performance. It's that Google has shifted from restrictive proprietary licenses to a commercially permissive Apache 2.0 license, giving developers total control over their data and infrastructure.

The lineup includes four model sizes: Effective 2B (E2B) and 4B (E4B) for mobile and IoT devices, alongside a highly efficient 26B Mixture-of-Experts (MoE) and a 31B Dense model for workstations.

All models natively support agentic workflows right out of the box, featuring built-in function calling, structured JSON output, and system instructions.

The smaller edge models (E2B and E4B) are multimodal powerhouses, capable of natively processing image, video, and audio inputs locally on smartphones, Raspberry Pis, or Jetson Orin Nanos.
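
To make "built-in function calling" and "structured JSON output" concrete, here is a minimal sketch of the host side of that loop: the model emits a JSON tool call, and your code validates and runs it. The tool name `get_weather`, the `{"name", "arguments"}` shape, and the sample output are all assumptions for illustration, not Gemma's actual format or API.

```python
import json

# Hypothetical tool; the name and signature are illustrative only.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

# Registry mapping tool names to the Python functions that implement them.
TOOLS = {"get_weather": get_weather}

def dispatch(model_output: str) -> str:
    """Parse a JSON tool call emitted by a model and run the matching function."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])

# Stand-in for what a model might return; not real Gemma output.
fake_output = '{"name": "get_weather", "arguments": {"city": "Madrid"}}'
print(dispatch(fake_output))
```

In a real agent, the returned string would be fed back to the model as the tool result so it can continue the task.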

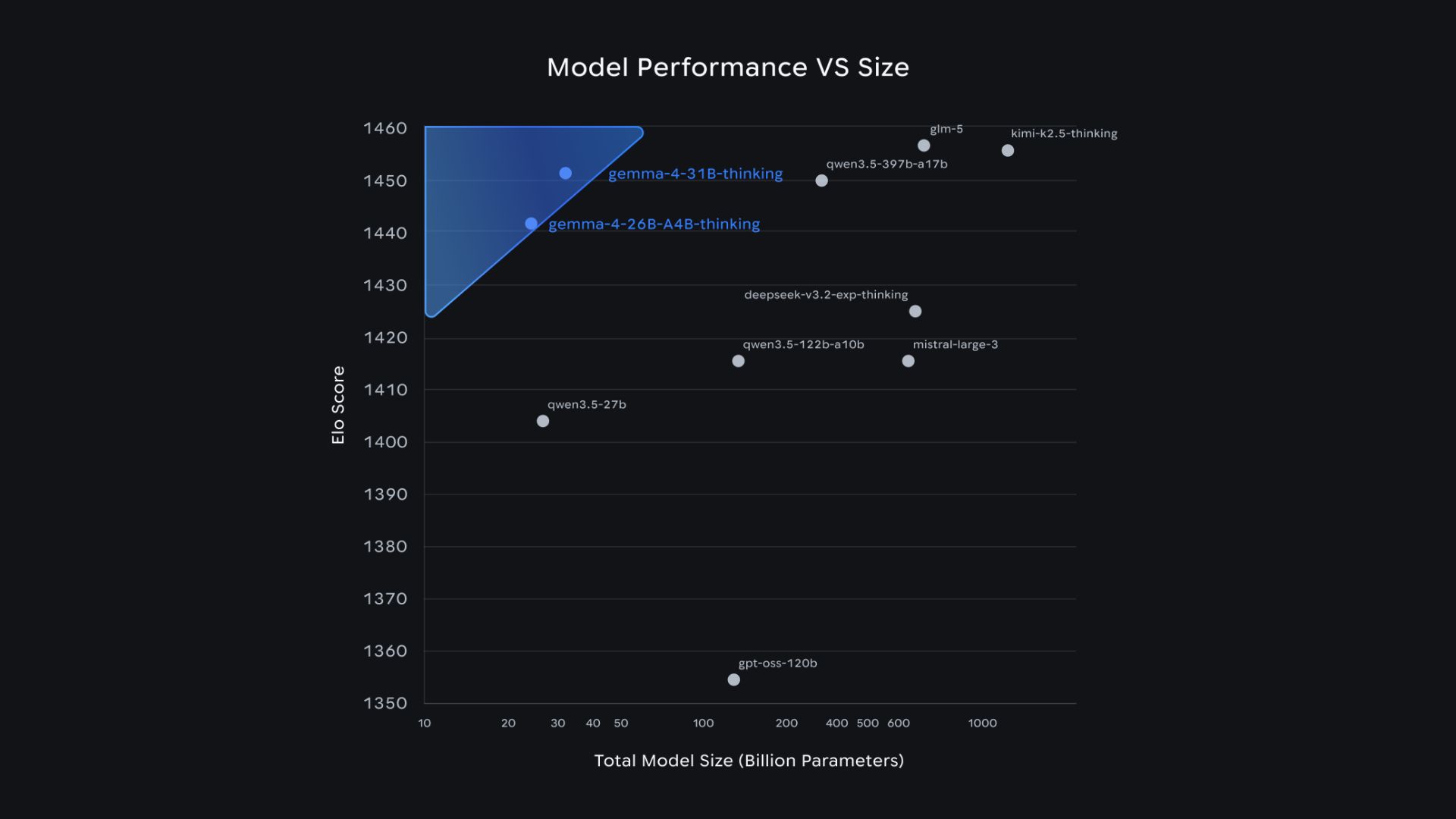

Despite the family's efficient footprint, the 31B model currently ranks #3 worldwide among open models on the Arena AI Leaderboard, while the 26B MoE ranks #6, outperforming competitors up to 20 times their size.

Try it now → https://aistudio.google.com/

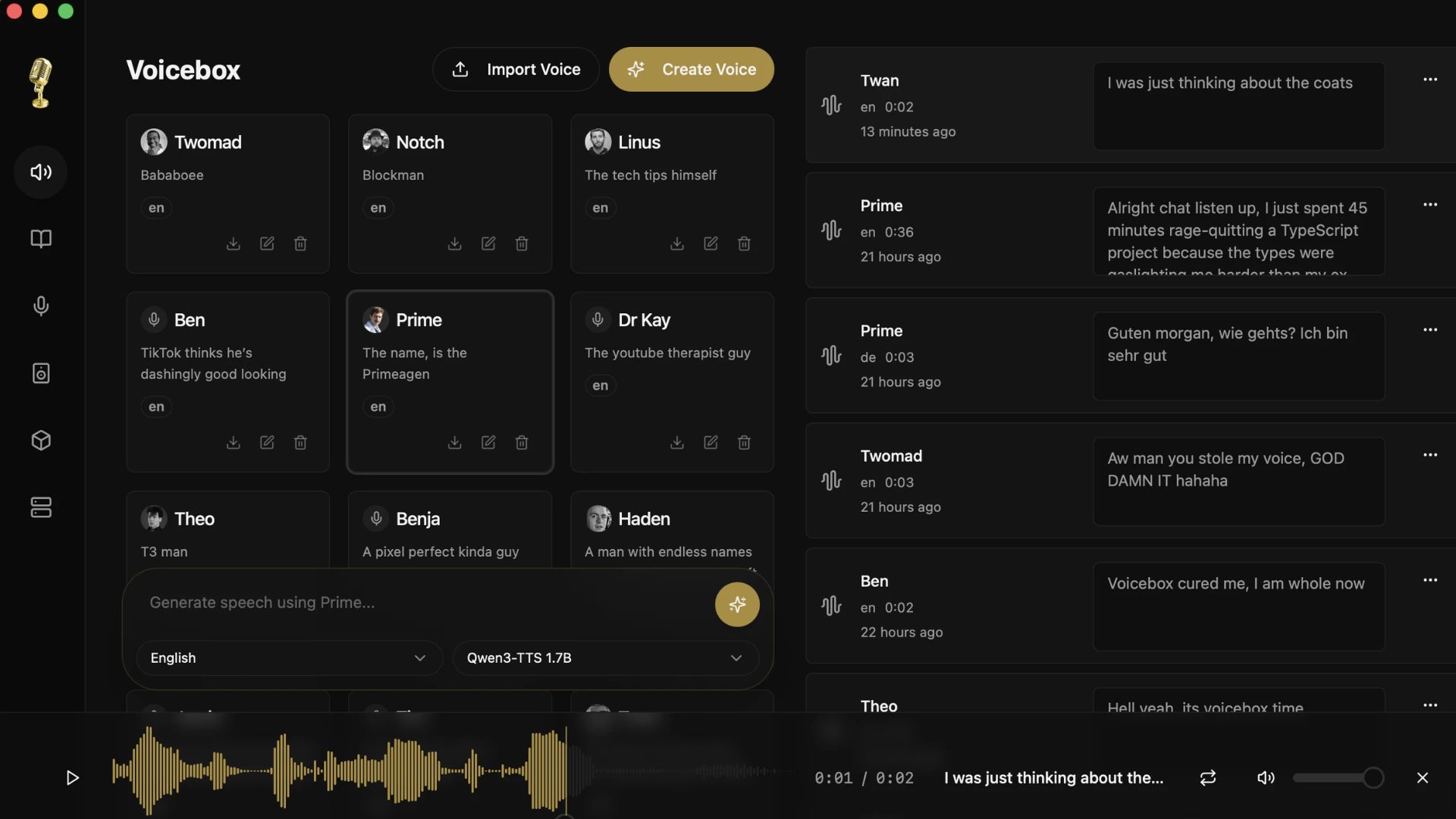

Voicebox is a viral new local-first voice cloning studio that lets you clone any voice from just a 3-second audio clip. It is exploding on GitHub with nearly 15,000 stars by offering a completely private, zero-cost studio that runs 100% on your own hardware without needing a single API key.

Clones voices from seconds of audio and generates speech across 23 languages using 5 different specialized TTS engines (including Qwen3-TTS and Chatterbox Turbo).

Supports paralinguistic tags, allowing you to synthesize expressive speech with inline emotions like [laugh], [sigh], [gasp], or [clear throat].

Features a multi-track "Stories" timeline editor for composing complex narratives, conversations, and podcasts directly in the app.

Includes a built-in "pedalboard" with 8 real-time audio effects like reverb, pitch shift, and compression to polish your output.
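
If you are curious how inline tags like [laugh] or [clear throat] can sit inside a script, here is a rough sketch that splits a line into spoken text and tags. It only illustrates the bracket syntax mentioned above; the function name `split_tags` and the segment format are assumptions, not Voicebox's own parser or API.

```python
import re

# Matches bracketed lowercase tags such as [laugh] or [clear throat].
TAG = re.compile(r"\[([a-z ]+)\]")

def split_tags(text):
    """Split text into ("speech", str) and ("tag", str) segments, in order."""
    segments = []
    pos = 0
    for m in TAG.finditer(text):
        spoken = text[pos:m.start()].strip()
        if spoken:
            segments.append(("speech", spoken))
        segments.append(("tag", m.group(1)))
        pos = m.end()
    tail = text[pos:].strip()
    if tail:
        segments.append(("speech", tail))
    return segments

print(split_tags("Well [sigh] that took a while. [laugh]"))
```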

Try it now → github.com/jamiepine/voicebox

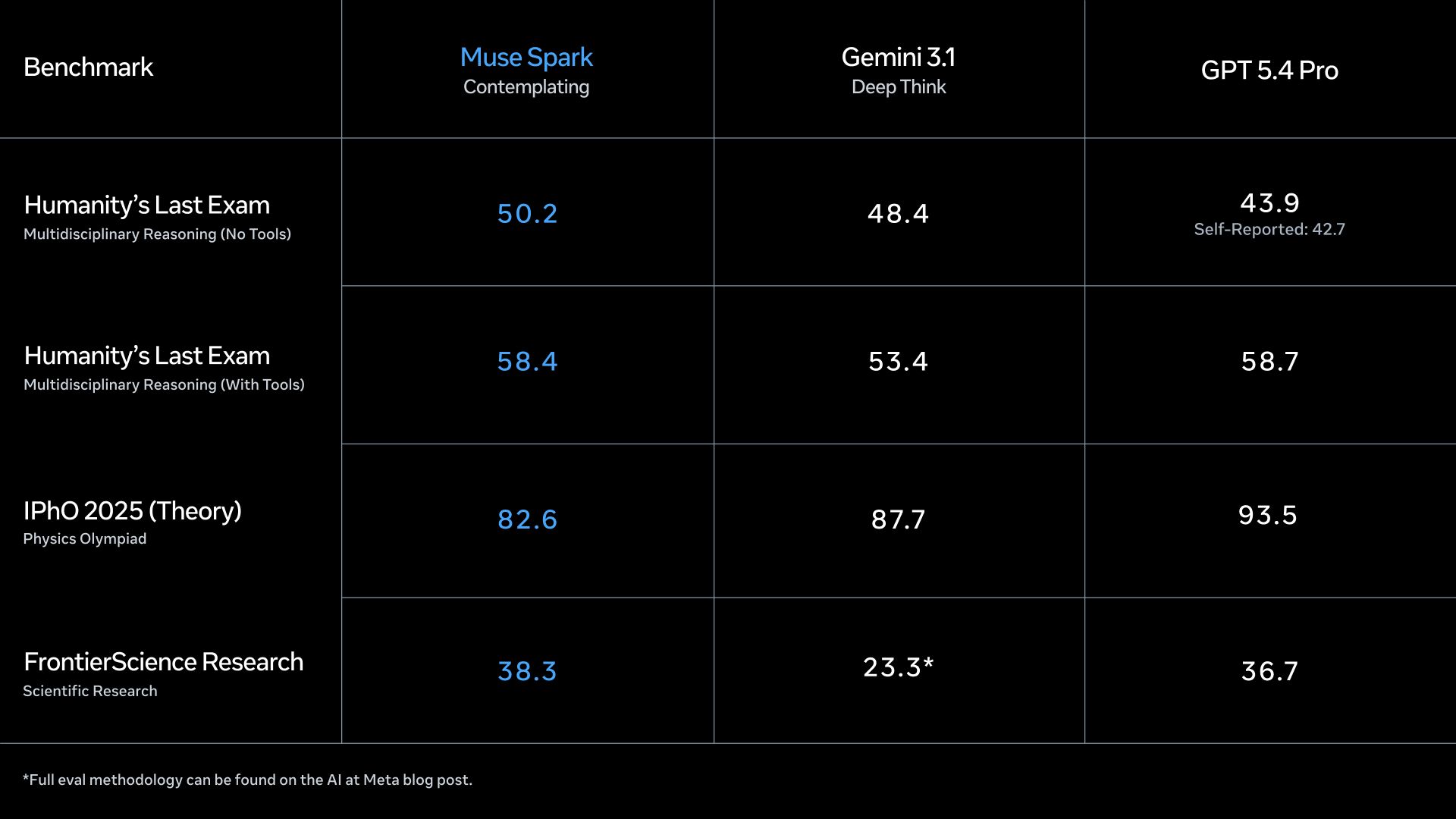

Following a massive internal overhaul, Meta just debuted Muse Spark. It's the first model to emerge from the newly formed "Meta Superintelligence Labs," led by former Scale AI CEO Alexandr Wang, marking a definitive pivot in Zuckerberg's quest to dominate the AI space.

The new lab was created after Zuckerberg reportedly grew frustrated with Llama lagging behind ChatGPT and Claude, prompting Meta to invest a staggering $14.3 billion for a 49% stake in Scale AI.

Muse Spark is available now on the web and the Meta AI app, and it specifically excels at visual STEM questions, coding mini-games, and troubleshooting physical hardware.

An upcoming "Contemplating" mode will use multiple parallel AI agents collaborating on the same problem to solve complex reasoning tasks quickly without massive latency spikes.

Because users must log in with a Facebook or Instagram account, privacy concerns are already swirling around how Meta might use personal social data to feed this new "personal superintelligence."

Try it now → meta.ai/

AIDesigner is a new MCP server that effectively gives Claude Code its own design engine. Instead of jumping between Figma and your IDE, you can now generate and refine production-ready UI right inside your codebase.

Before generating anything, it reads your framework, component library, and CSS tokens so the output perfectly matches your actual stack.

generate_design: Creates production-ready UI straight from a text prompt.

refine_design: Lets you adjust layouts and colors using natural language.

Works seamlessly across Cursor, Codex, VS Code, and Windsurf.

Connects to your environment with just one command.

Try it now → aidesigner.ai/docs/mcp

Andrej Karpathy published a viral GitHub Gist called "LLM Wiki" that amassed over 5,000 stars in just 48 hours. Instead of an AI retrieving information from scratch every time you ask a question (like traditional RAG), this pattern uses an AI agent to build and maintain a persistent, ever-growing knowledge base of interlinked markdown files.

Replaces standard RAG by compiling knowledge once and keeping it current, rather than re-discovering fragments and starting over on every single query.

When you drop a raw source (article, paper, transcript) into a folder, the AI reads it, writes a summary, updates entity pages, flags contradictions, and builds cross-references automatically.

One source can update 10 to 15 wiki pages simultaneously, meaning your explorations compound into a smarter knowledge base over time.

Highly versatile use cases: tracking personal goals, evolving a research thesis over months, building book/fan wikis, or maintaining business docs from Slack threads and meeting notes.
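
Here is a small sketch of the ingest step described above: one raw source goes in, and a summary page plus several entity pages come out, cross-linked. This is not Karpathy's code. The `summarize` and `extract_entities` functions are crude placeholders standing in for LLM calls, the `[[Page]]` link style and file layout are assumptions, and contradiction flagging is left out entirely.

```python
import re
import tempfile
from pathlib import Path

def summarize(text: str) -> str:
    """Placeholder for an LLM call: here, just the first sentence."""
    return text.split(".")[0].strip() + "."

def extract_entities(text: str) -> list[str]:
    """Placeholder for LLM entity extraction: capitalized words of 4+ letters."""
    return sorted(set(re.findall(r"\b[A-Z][a-z]{3,}\b", text)))

def ingest(source: Path, wiki: Path) -> list[Path]:
    """Write a summary page for one source and link it from each entity page."""
    text = source.read_text()
    wiki.mkdir(parents=True, exist_ok=True)
    entities = extract_entities(text)
    links = ", ".join(f"[[{e}]]" for e in entities)

    # One summary page per source, listing the entities it mentions.
    summary_page = wiki / f"{source.stem}.md"
    summary_page.write_text(
        f"# {source.stem}\n\n{summarize(text)}\n\nEntities: {links}\n"
    )
    touched = [summary_page]

    # Each entity page is created once, then appended to on every new source.
    for e in entities:
        page = wiki / f"{e}.md"
        if not page.exists():
            page.write_text(f"# {e}\n\n## Mentioned in\n")
        with page.open("a") as f:
            f.write(f"- [[{source.stem}]]\n")
        touched.append(page)
    return touched

# Demo in a throwaway directory: one source touches several wiki pages.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    src = root / "note-1.txt"
    src.write_text("Karpathy described a wiki pattern. Agents maintain Markdown pages.")
    for p in ingest(src, root / "wiki"):
        print(p.name)
```

Because entity pages are appended to rather than rebuilt, every new source adds backlinks to pages that already exist, which is the compounding effect the pattern is after.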

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas