Happy Sunday! We just had another crazy week in AI. Alibaba just dropped a free open-source model that rivals frontier models, while a new tool lets AI agents produce polished videos from just a URL or a doc.

And that's not all. Here are the most important AI moves you need to know this week.

Nvidia’s Spatial Intelligence Lab has officially released Lyra 2.0. Unlike previous models that only generated single objects, this framework builds entire "navigable" environments (streetscapes, interiors, and landscapes) from a single photograph, solving the massive technical hurdle of "spatial forgetting."

Converts a single image into a camera-controlled video walkthrough, which is then "lifted" into 3D representations like Gaussian Splats and meshes.

Solves "spatial forgetting" by maintaining per-frame 3D geometry; if you turn back to a previous area, the model retrieves historical frames to ensure the room looks exactly the same.

Features an interactive GUI where you can draw a path, and the model progressively expands the world as your virtual camera moves forward.

Fully compatible with physics simulators and real-time rendering engines, including Nvidia Isaac Sim for training autonomous robots.

Try it now → https://huggingface.co/nvidia/Lyra-2.0

The new Gemini macOS app brings Google’s assistant directly to the desktop, allowing users to interact with the AI without ever switching tabs. It positions Gemini as a direct competitor to the Spotlight-style integrations from OpenAI, Anthropic, and Perplexity.

Features a new Option + Space shortcut that pulls up a floating chat bubble for instant access from any screen.

Includes a "share window" feature that allows Gemini to see and pull information from your active apps to help answer questions or provide context.

Deeply integrated with the Google ecosystem, allowing users to upload files, photos, and documents directly from Google Drive.

Supports native generation of images, videos (via Veo), and music (via Lyria) directly within the desktop interface.

Try it now → https://gemini.google/mac/

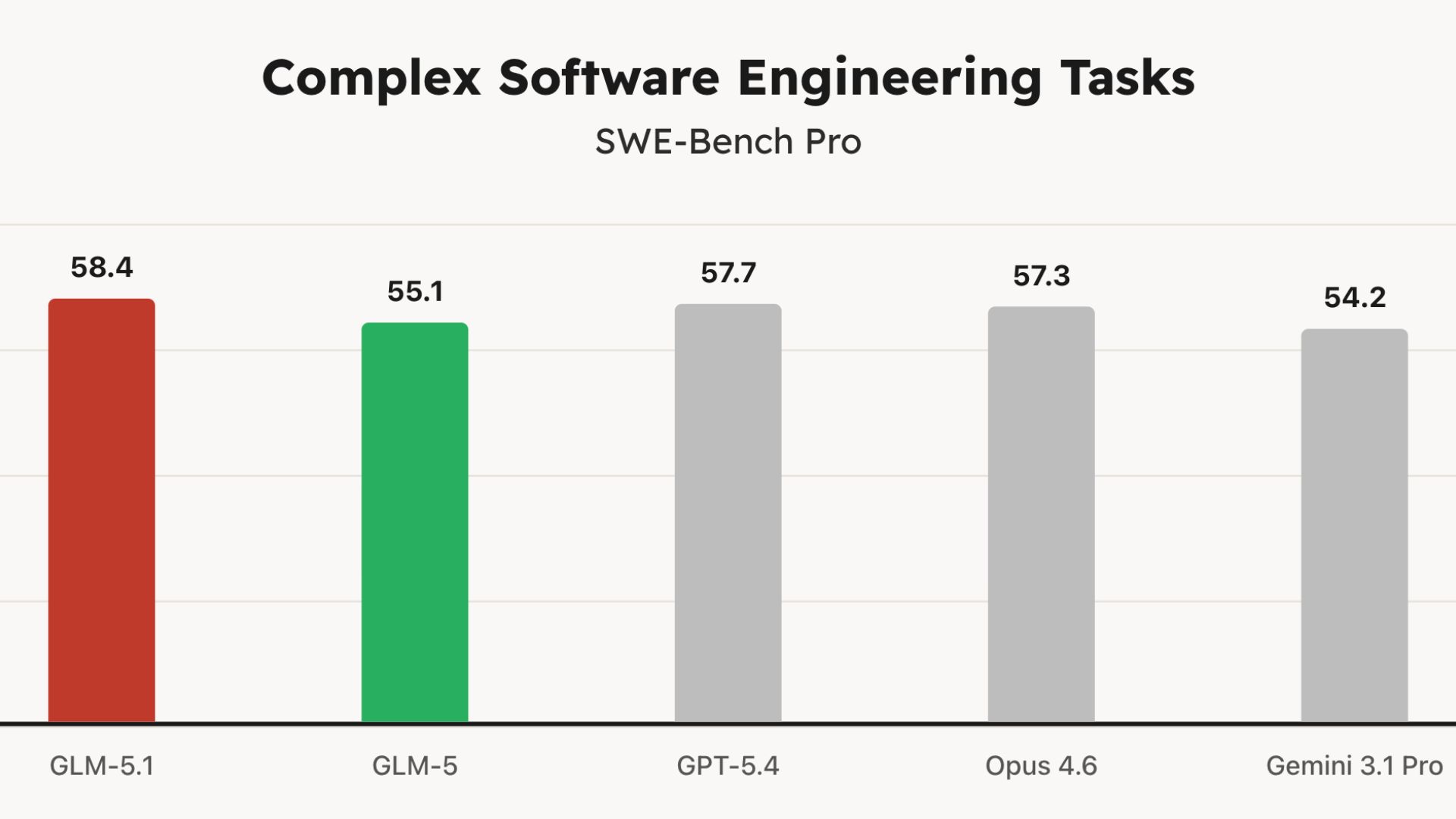

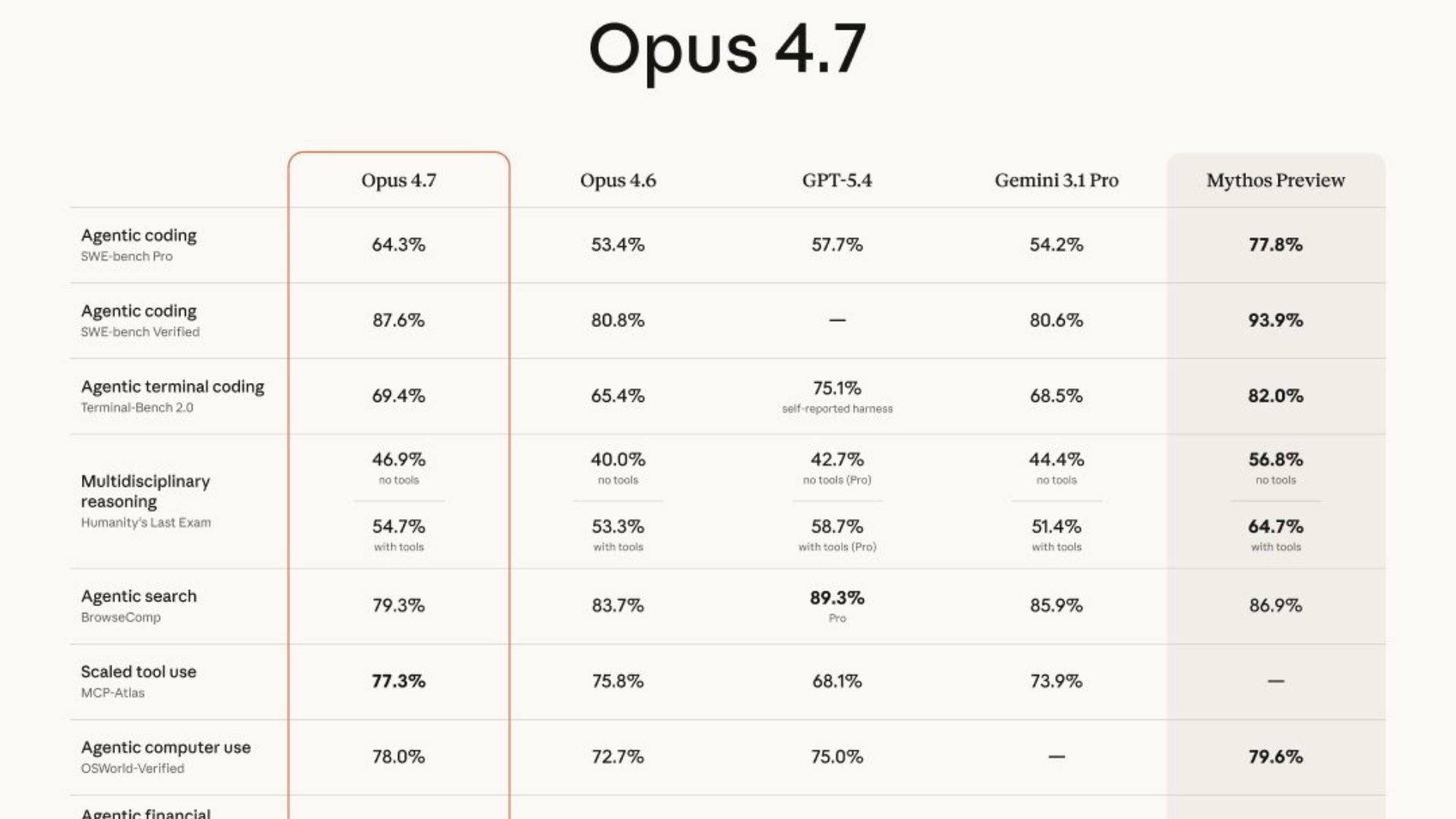

Anthropic has officially launched Claude Opus 4.7, a major upgrade designed for the burgeoning agentic economy. While it beats GPT-5.4 on several key benchmarks in areas like finance and tool use, the real story is that Anthropic has intentionally "dialed down" its power compared to its restricted Mythos model to ensure safety.

Substantial improvements in coding and instruction-following; it interprets prompts more literally, requiring less back-and-forth but more precise instructions.

A massive 3x vision upgrade allows the model to process high-resolution images up to 3.75 megapixels, a game-changer for AI agents analyzing dense UI screenshots or technical diagrams.

It’s a "practical frontier" model optimized for reliability and long-horizon autonomy: it now self-validates outputs and recovers from tool failures instead of freezing.

Pricing remains at $5/$25 per million tokens via API, though a new tokenizer may increase effective costs by up to 35% depending on your content mix.
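To put that pricing note in numbers, here is a rough back-of-the-envelope sketch. It assumes the $5 rate applies to input tokens and the $25 rate to output tokens, and that the tokenizer change inflates both counts by the same fraction. The workload (2M input tokens, 500K output tokens) and the `estimate_cost` helper are made up for illustration, so treat the result as an estimate rather than a quote.

```python
# Prices from the paragraph above, in USD per million tokens.
# Assumption: $5 is the input rate and $25 is the output rate.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00


def estimate_cost(input_tokens, output_tokens, token_inflation=0.0):
    """Estimate API cost in USD.

    token_inflation is the fractional increase in token count caused by a
    tokenizer change (0.35 means 35% more tokens for the same text).
    Assumption: the inflation hits input and output equally.
    """
    scale = 1.0 + token_inflation
    input_cost = input_tokens * scale / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = output_tokens * scale / 1_000_000 * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical monthly workload, measured with the old tokenizer.
baseline = estimate_cost(2_000_000, 500_000)
worst_case = estimate_cost(2_000_000, 500_000, token_inflation=0.35)

print(f"baseline: ${baseline:.2f}")
print(f"worst case (+35% tokens): ${worst_case:.2f}")
```

The sticker price does not change, but the same text can cost more because it is billed as more tokens. Your actual increase depends on your content mix and may be well below the 35% ceiling.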

Try it now → https://claude.ai/

HeyGen has officially released HyperFrames, an open-source toolchain that lets AI agents and developers render high-quality MP4 videos directly from HTML, CSS, and JavaScript. By using the web languages that AI models already know best, HyperFrames bypasses the need for complex video editing software.

Designed specifically for AI agents like Claude Code, Cursor, and Codex: one command installs a "skill pack" that teaches them how to scaffold, animate, and render full video projects.

Instead of learning After Effects, agents use familiar web primitives: CSS for positioning, GSAP for timelines, Three.js for 3D, and Lottie for animations.

The framework adds simple data-attributes to standard HTML to define timelines, durations, and layers, making video production as scriptable as building a website.

Try it now → https://github.com/heygen-com/hyperframes

Anthropic’s latest product, Claude Design, is a specialized workspace for creating prototypes, pitch decks, and one-pagers through pure conversation. Powered by Claude Opus 4.7, it’s designed to eliminate the "blank page" problem for non-designers by generating high-fidelity drafts in seconds.

Users can describe a visual concept, like a "serene meditation app with calming typography", and receive a fully realized prototype that can be tweaked with follow-up instructions.

The tool can ingest a company’s actual codebase and design files to automatically apply existing design systems, ensuring every output stays on-brand.

It is designed to complement tools like Canva rather than replace them; projects can be exported as editable PPTX or sent directly to Canva for collaborative refinement.

Claude Design is now available in research preview for Pro, Max, Team, and Enterprise subscribers.

Try it now → https://claude.ai/new

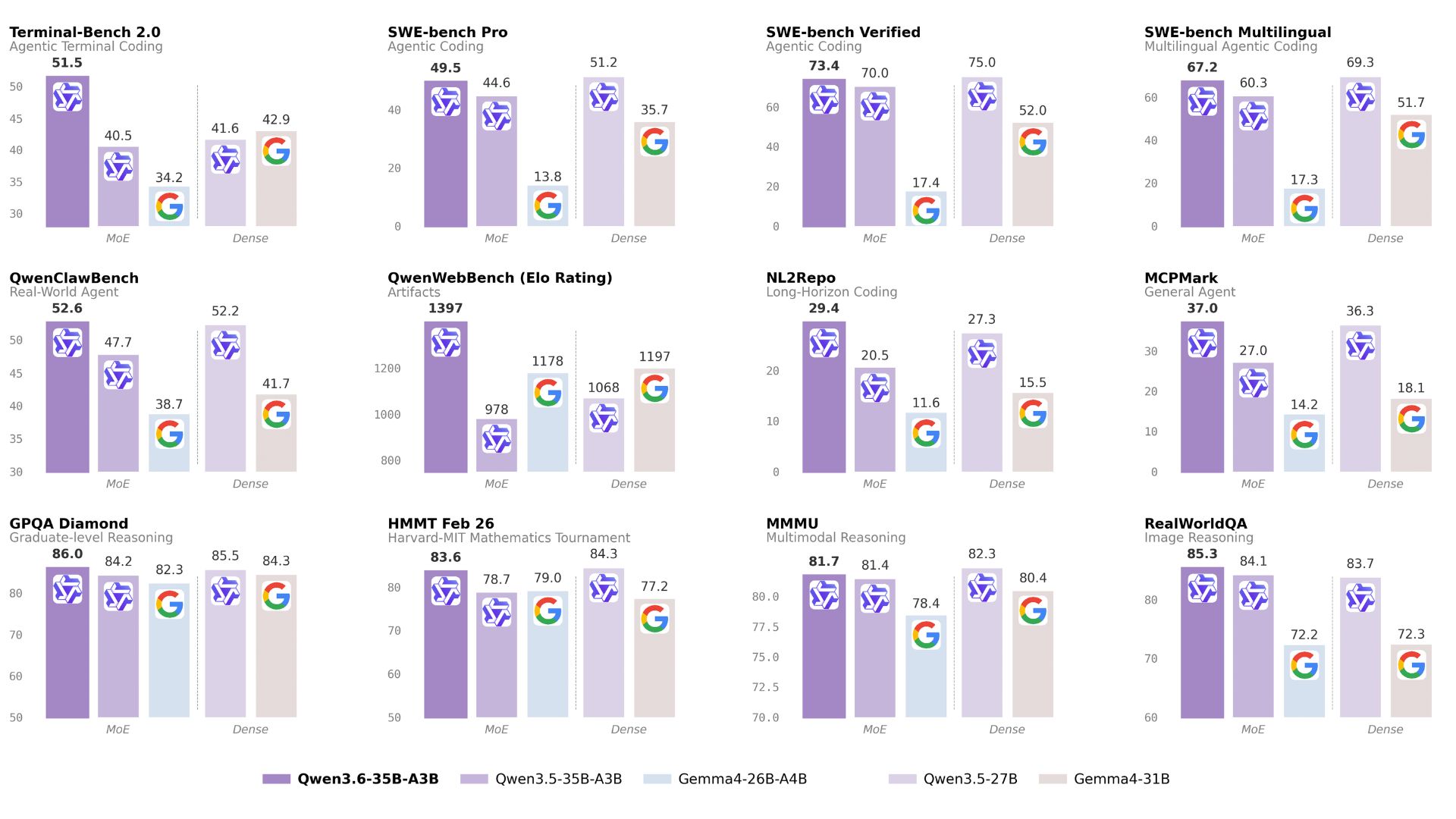

In a head-to-head SVG coding challenge, Alibaba’s new Qwen3.6-35B-A3B model, running locally on a laptop, beat out Anthropic’s massive proprietary Claude Opus 4.7. The task? Drawing a pelican riding a bicycle.

Running as a 21GB quantized version of Qwen3.6 on a MacBook Pro M5, the model produced a structurally correct bicycle frame, added atmospheric details like clouds, and included an accurate caption.

In contrast, Claude Opus 4.7 struggled with basic geometry, failing the bicycle frame twice, even when pushed with "max" thinking levels enabled.

To prove Qwen wasn't just "training for the test," Simon Willison, who ran the comparison, used a secret backup benchmark: a flamingo riding a unicycle. Qwen won again, adding "charisma" like sunglasses, a bowtie, and a cigarette.

The results break a long-standing correlation where model size usually dictated SVG quality, showing that smaller, highly efficient models are catching up in niche spatial reasoning.

Try it now → https://chat.qwen.ai/

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas