Happy Sunday! We just had another crazy week in AI. OpenAI just launched the first voice model that can actually reason and take action in real time, while this new open-source AI lets you run a 70 billion parameter model on just a 4 gigabyte GPU.

And that's not all. Here are the most important AI moves you need to know this week.

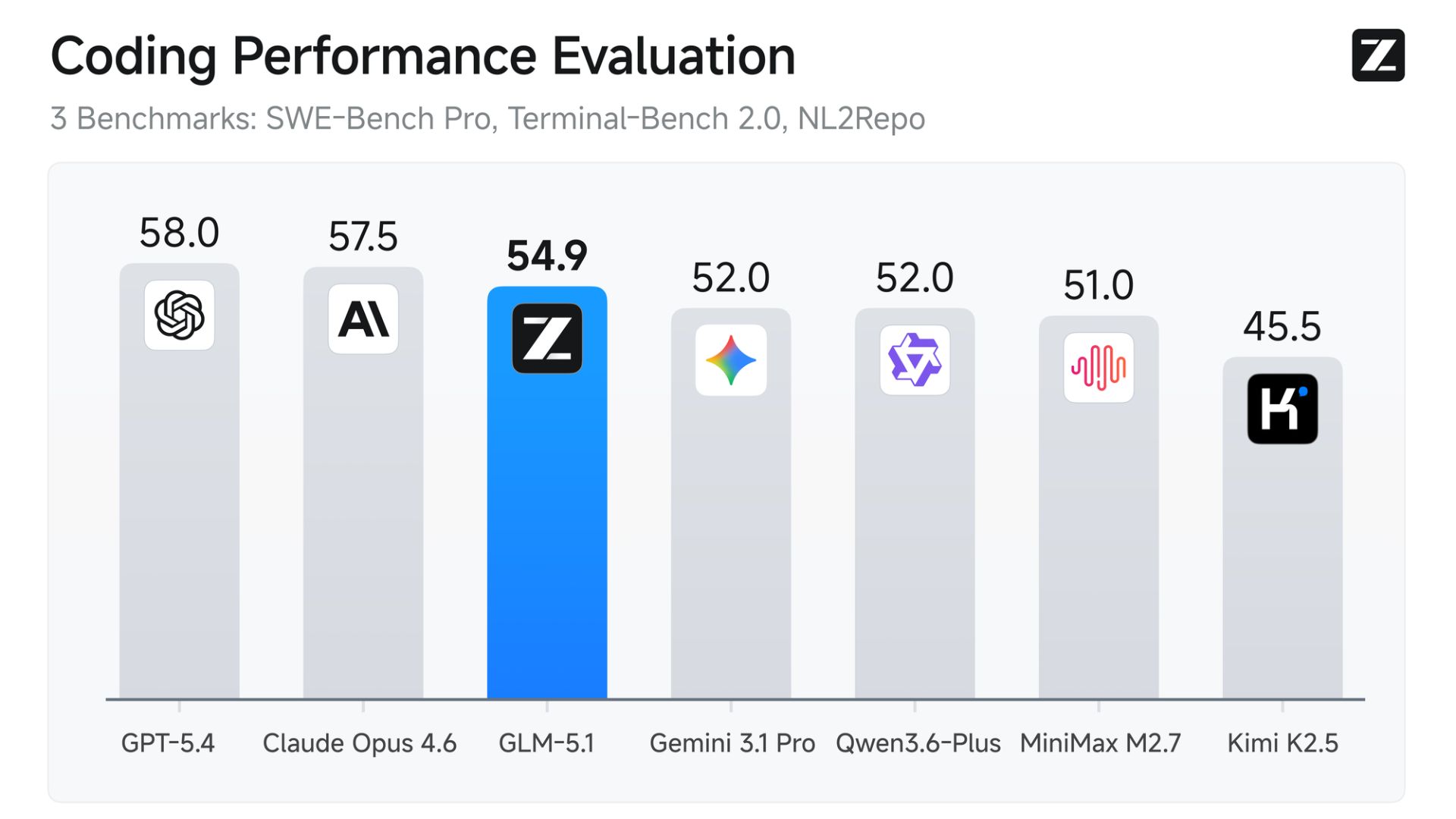

Zhipu AI has officially released GLM-5.1, a colossal 744 billion parameter open-weight model that is actively beating out top Western closed models on coding benchmarks. Best of all? It’s completely free and open-source under the MIT license.

Massive Scale: It uses a Mixture-of-Experts (MoE) architecture with 744 billion total parameters, activating roughly 40 billion per token for highly efficient inference alongside a 200K context window.

True Open Source: Unlike other restrictive "open" models, the MIT license allows for full commercial use, unrestricted fine-tuning, and self-hosting with zero royalty fees.

Coding Leaderboard Dominance: GLM-5.1 scored a massive 58.4 on SWE-Bench Pro, officially edging past both OpenAI's GPT-5.4 (57.7) and Anthropic's Claude Opus 4.6 (57.3) in software engineering benchmarks.

8-Hour Autonomous Execution: Instead of just generating single code snippets, GLM-5.1 is built for "long-horizon agentic engineering." It can be given a complex objective and work autonomously for up to 8 hours, compiling, testing, and self-correcting across thousands of tool calls.
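To put the Mixture-of-Experts numbers in perspective, here is a quick back-of-the-envelope calculation. The parameter counts come from the figures above; the 2 bytes per parameter (16-bit weights) is an assumption for illustration, since the precision of the released weights isn't stated here.

```python
# Figures quoted above for GLM-5.1.
TOTAL_PARAMS = 744e9    # total parameters across all experts
ACTIVE_PARAMS = 40e9    # "roughly 40 billion" activated per token


def active_fraction(total: float, active: float) -> float:
    """Share of the model's parameters used for a single token."""
    return active / total


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Storage needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
# ASSUMPTION: 2 bytes per parameter (16-bit weights).
full_weights_gb = weight_memory_gb(TOTAL_PARAMS, 2)

print(f"Active per token: {fraction:.1%}")
print(f"All weights at 2 bytes/param: {full_weights_gb:,.0f} GB")
```

The takeaway: MoE cuts the compute per token to roughly 5% of the model, but every expert still has to be stored somewhere, so self-hosting remains a serious hardware commitment.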

Try it now → https://chat.z.ai/

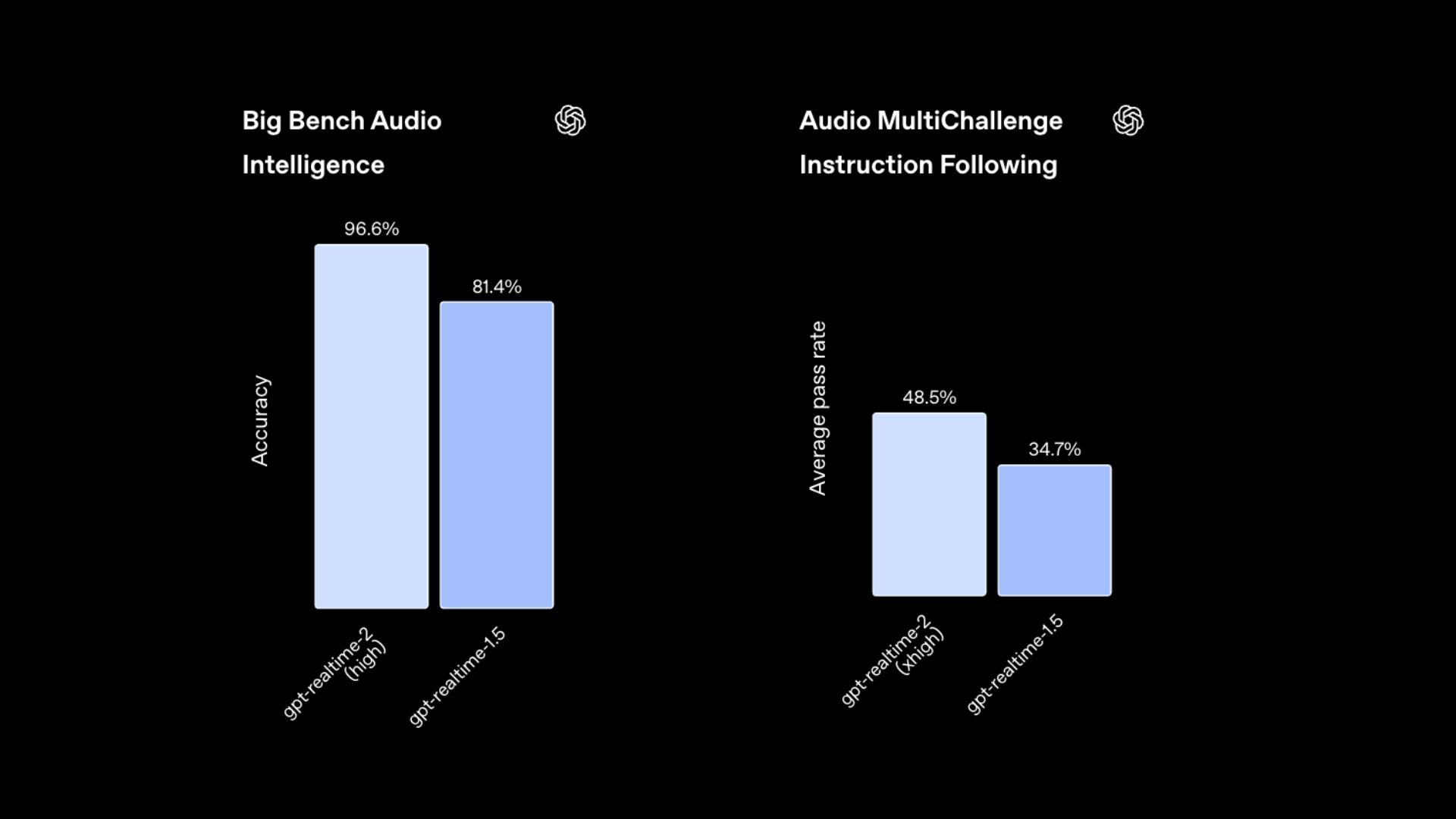

OpenAI has launched three new discrete voice models: GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper. This isn't just a single voice product; it’s a modular stack designed to reduce the massive overhead and context-ceiling issues that have plagued enterprise voice deployments for years.

GPT-Realtime-2 is the headline act: the first voice-native model with "GPT-5 class reasoning," capable of handling complex logic and difficult requests mid-conversation without breaking flow.

The system decouples tasks: instead of one model doing everything poorly, enterprises can route translation to Realtime-Translate (70+ languages) and transcription to Realtime-Whisper.

Features a massive 128K-token context window, meaning agents can remember the details of an hour-long conversation without needing expensive "state reconstruction" layers.

Designed for deep orchestration: these models can act as discrete primitives within a larger agent stack, allowing voice agents to actually do work in the background rather than just talking.

Try it now → https://chatgpt.com/

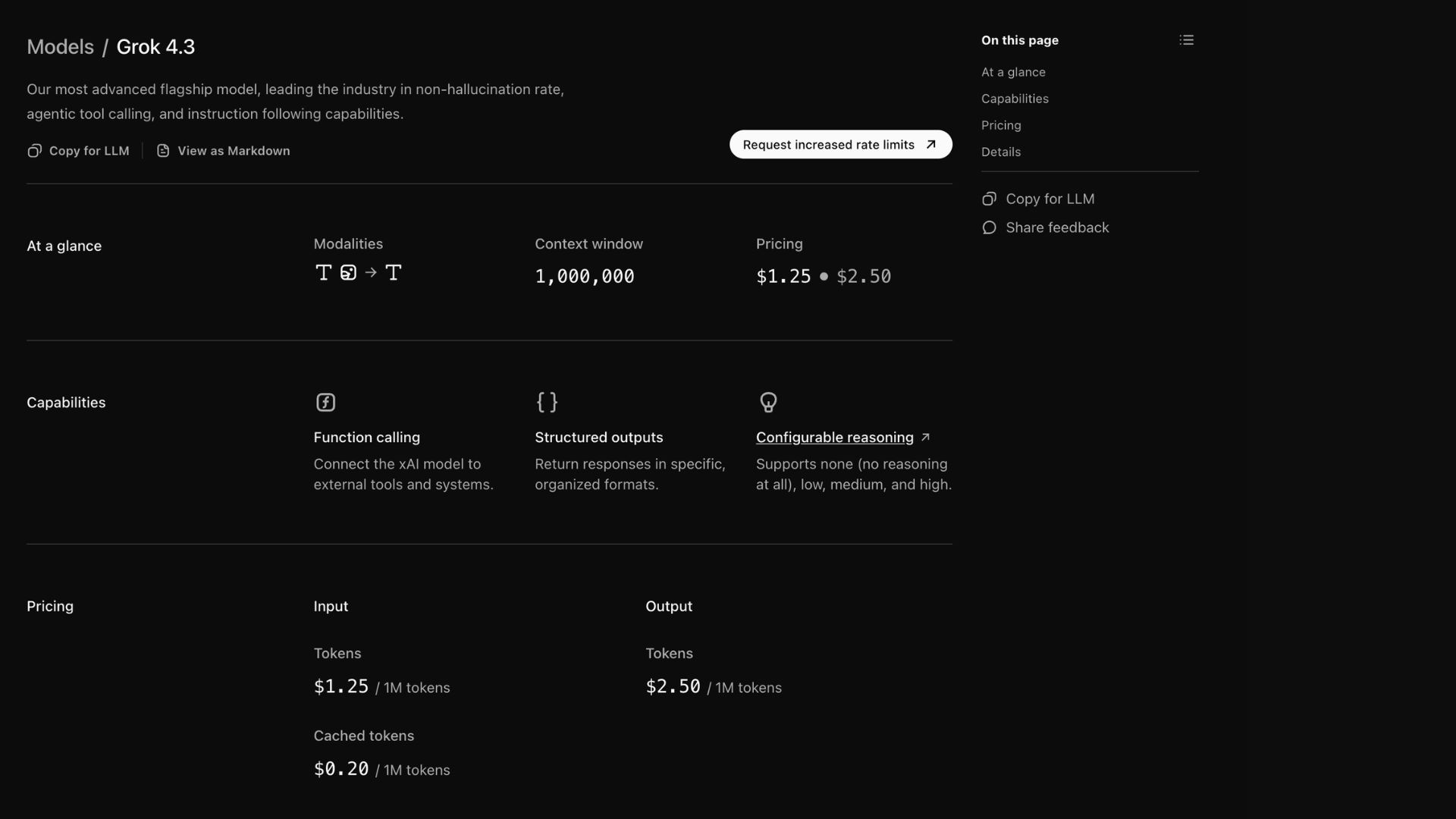

While Elon Musk battles OpenAI in court, his rival firm xAI just shipped a massive platform update. Grok 4.3 is a new frontier LLM built entirely around "always-on reasoning," designed specifically for complex agentic workflows, launched alongside a highly competitive new voice cloning API.

Grok 4.3 features a massive 1-million-token context window (enough to hold several thick novels or a mid-sized codebase) and native text, image, and video inputs.

"Always-on reasoning" is permanently active, meaning the model "thinks" before it speaks for every single query to maximize factual accuracy and handle complex, multi-step instructions.

It can autonomously run Python code in a sandbox, search the live web (and X), and output fully formatted multi-sheet Excel dashboards, PDFs, and 9-slide PowerPoint decks directly in chat.

Aggressive pricing: At just $1.25 per million input tokens and $2.50 per million output tokens, it is significantly cheaper than GPT-5.4 and Claude Opus 4.7 (though prices double for contexts exceeding 200K tokens).
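Want to see what those rates mean for a real request? Here is a rough cost estimator built from the prices quoted above. One assumption to flag: the exact rule for the long-context surcharge isn't spelled out here, so this sketch doubles the whole request's price once the input goes past 200K tokens. Check xAI's pricing page before budgeting around it.

```python
# Prices quoted above, in USD per million tokens.
INPUT_PRICE_PER_M = 1.25
OUTPUT_PRICE_PER_M = 2.50

# ASSUMPTION: the doubling applies to the whole request once the
# input exceeds this many tokens. The real billing rule may differ.
LONG_CONTEXT_THRESHOLD = 200_000
LONG_CONTEXT_MULTIPLIER = 2.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request under the assumptions above."""
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        multiplier = LONG_CONTEXT_MULTIPLIER
    else:
        multiplier = 1.0
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    return (input_cost + output_cost) * multiplier


short_request = estimate_cost_usd(50_000, 2_000)
long_request = estimate_cost_usd(500_000, 2_000)

print(f"50K in / 2K out:  ${short_request:.4f}")
print(f"500K in / 2K out: ${long_request:.4f}")
```

Under these assumptions, a 50K-token prompt costs well under a dime, while a 500K-token prompt lands a little above a dollar because of the surcharge.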

Try it now → https://grok.com/

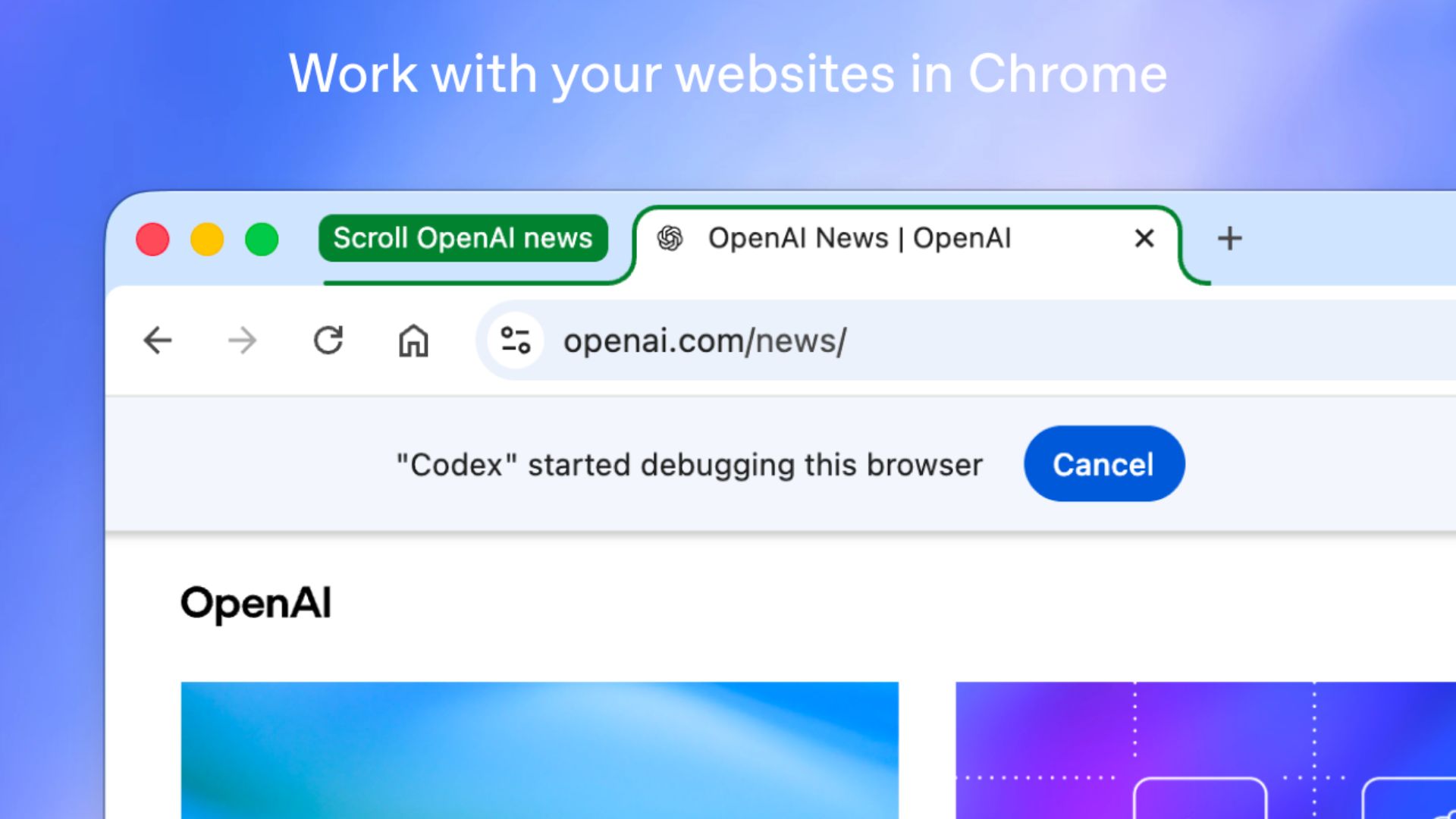

OpenAI has launched a new Chrome extension for its Codex app, allowing the AI coding tool to operate natively inside your browser on both Mac and PC. Instead of relying purely on terminal outputs or local environments, Codex can now read real-time DOM states and console errors to fix bugs exactly where they happen.

Grants Codex access to your actual signed-in browser state, meaning it can work securely inside internal dashboards, CRMs like Salesforce, and web apps like Gmail or LinkedIn without needing authentication tokens in the prompt.

Uses Chrome DevTools to test web apps, inspect logs, and review live page states in real time, all without needing a terminal or taking over your active browsing session.

Runs tasks in the background using task-specific tab groups, keeping the AI's work organized and separate from your personal workflow.

Includes strict permission controls: Codex asks before interacting with a new website host, and users can manage allowlists or blocklists for specific domains.

AirLLM is an open-source Python library that completely redefines what's possible on low-end commodity hardware. Instead of requiring tens of gigabytes of VRAM to load a massive model, AirLLM streams it layer-by-layer directly from your disk, allowing you to run massive Hugging Face models on a single 4GB GPU.

It uses a sequential execution strategy: it loads a single transformer layer from storage into the GPU, computes that layer's output, frees the layer's weights, and moves to the next layer, keeping VRAM usage incredibly low.

By default, it requires absolutely zero quantization, distillation, or pruning, meaning you get the exact same accuracy and output quality as a model running on a massive server rack.

Setup is completely frictionless: simply run pip install airllm, point the library at a standard Hugging Face model repository (like Llama 3 70B, Qwen, or Mixtral), and start generating.
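To make the layer-by-layer idea concrete, here is a toy sketch in plain Python. It does not use AirLLM's API or a GPU: the "layers" are tiny hand-written matrices saved to temporary files, and every name in it (`save_layers`, `apply_layer`, `streamed_forward`) is made up for illustration. What it does show is the core trick: only one layer's weights are in memory at any moment.

```python
import pickle
import tempfile
from pathlib import Path


def save_layers(layers, directory):
    """Write each layer to its own file, standing in for a sharded checkpoint."""
    paths = []
    for i, layer in enumerate(layers):
        path = Path(directory) / f"layer_{i}.pkl"
        path.write_bytes(pickle.dumps(layer))
        paths.append(path)
    return paths


def apply_layer(layer, x):
    """Toy layer: relu(W @ x + b) on plain Python lists."""
    out = []
    for row, bias in zip(layer["weight"], layer["bias"]):
        total = sum(w * v for w, v in zip(row, x)) + bias
        out.append(max(0.0, total))
    return out


def streamed_forward(layer_paths, x):
    """Run the model while holding only one layer's weights at a time."""
    for path in layer_paths:
        layer = pickle.loads(path.read_bytes())  # load ONE layer from disk
        x = apply_layer(layer, x)                # keep only its output
        del layer                                # free the weights before the next load
    return x


# Two hypothetical 2x2 layers, purely for demonstration.
layers = [
    {"weight": [[1.0, 0.0], [0.0, 1.0]], "bias": [0.5, -0.5]},
    {"weight": [[2.0, 1.0], [-1.0, 1.0]], "bias": [0.0, 0.0]},
]

with tempfile.TemporaryDirectory() as tmp:
    paths = save_layers(layers, tmp)
    result = streamed_forward(paths, [1.0, 2.0])

print(result)  # [4.5, 0.0]
```

The result matches what you would get with all layers loaded at once, which is why no quantization is needed. The cost of this approach is time rather than accuracy: every layer has to be read from disk again on each forward pass.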

Try Now → https://github.com/lyogavin/airllm

Anthropic has released "The Complete Guide to Building Skills for Claude," a detailed playbook on a powerful new way to customize the AI. Instead of constantly pasting your company's style guides, sprint planning rules, or coding standards into every single chat, you can now package them into a simple "Skill" folder that Claude automatically triggers precisely when needed.

Skills use a clever "progressive disclosure" architecture to save on token costs. Claude only keeps a tiny text description in its active memory; if a user's prompt matches that description, it dynamically loads the full, multi-page instructions in the background.

You can pair Skills with Anthropic's Model Context Protocol (MCP). If MCP gives Claude access to your company's tools and data (the kitchen), Skills provide the exact step-by-step instructions (the recipes) on how to properly use them.

Skills go beyond just text instructions. You can embed Python or JavaScript scripts directly inside the folder for deterministic tasks like complex math or data sorting, letting Claude execute code reliably instead of just guessing the output.

The 33-page guide covers everything from writing the perfect YAML frontmatter to testing your Skill's automatic activation rate, claiming anyone can build their first functional Skill in just 15 to 30 minutes.
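Here is a minimal sketch of how "progressive disclosure" could work, written in plain Python. Several things in it are assumptions for illustration: the `SKILL.md` file name, the `name` and `description` frontmatter fields, and above all the keyword matching, which is a crude stand-in for Claude deciding on its own whether a Skill is relevant. Treat it as a mental model, not Anthropic's implementation.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillStub:
    """The small piece of a Skill that stays in memory at all times."""
    name: str
    description: str
    path: Path


def read_frontmatter(path: Path) -> dict:
    """Parse flat 'key: value' frontmatter between two '---' lines."""
    lines = path.read_text(encoding="utf-8").splitlines()
    meta = {}
    if not lines or lines[0].strip() != "---":
        return meta
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta


def index_skills(root: Path) -> list:
    """Keep only each Skill's name and description, not its instructions."""
    stubs = []
    for skill_file in sorted(root.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_file)
        stubs.append(SkillStub(meta.get("name", skill_file.parent.name),
                               meta.get("description", ""), skill_file))
    return stubs


def load_matching_skill(stubs, prompt):
    """Return the full Skill text only if its description matches the prompt.

    ASSUMPTION: word overlap is a toy substitute for the model's own judgment.
    """
    prompt_words = set(prompt.lower().split())
    for stub in stubs:
        keywords = {w for w in stub.description.lower().split() if len(w) > 4}
        if prompt_words & keywords:
            return stub.path.read_text(encoding="utf-8")
    return None


with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "sprint-planning"
    skill_dir.mkdir()
    (skill_dir / "SKILL.md").write_text(
        "---\n"
        "name: sprint-planning\n"
        "description: Plan sprints and estimate tickets\n"
        "---\n"
        "Step 1: List the open tickets.\n"
        "Step 2: Estimate each one.\n",
        encoding="utf-8",
    )
    stubs = index_skills(Path(tmp))
    matched = load_matching_skill(stubs, "Help me plan the next sprints")
    unmatched = load_matching_skill(stubs, "What is the weather today")

print(matched is not None, unmatched is None)
```

The token savings come from the split: the short descriptions are always available, while the long step-by-step instructions are only read when a prompt actually calls for them.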

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas