Happy Sunday! We just had another crazy week in AI. OpenAI fired back at Google with an image generator that's up to 4x faster than its predecessor, while Google rolled out Gemini 3 Flash, bringing Pro-level AI to billions worldwide.

And that's not all. Here are the most important AI moves you need to know this week.

Alibaba has released Wan2.6, China's first reference-to-video generation model that enables anyone to star in AI-generated videos using their own appearance and voice. The breakthrough comes as video AI shifts from generic content creation toward personalized, character-consistent storytelling that puts real people into synthetic scenes.

Clones both appearance and voice from a 5-second reference video

Generates 15-second 1080p HD videos with full audio-visual synchronization

Enables intelligent multi-shot storytelling with consistent characters across cuts

Delivers cinematic-quality output with enhanced visual refinement and aesthetic expression

Try it Now → https://create.wan.video/

OpenAI has released GPT-Image 1.5, its latest image generation model built to compete directly with Google's Nano Banana Pro. The new model delivers 4x faster generation speeds, precise editing capabilities, and improved text rendering, marking OpenAI's push to reclaim leadership in AI image generation after Google's recent dominance.

Generates images up to 4x faster than GPT-Image 1

Preserves facial likeness, logos, and composition across iterative edits

Handles dense text, small lettering, and complex layouts like infographics

20% cheaper per image than the previous generation

Try it Now → https://chatgpt.com/images/

Google has rolled out Gemini 3 Flash globally, bringing Pro-level intelligence at Flash speed and pricing to billions of users. The model now powers AI Mode in Search, the Gemini app, and developer tools, marking Google's most aggressive push yet to make frontier AI accessible at scale.

Matches Gemini 3 Pro performance at a fraction of the cost and latency

Delivers state-of-the-art reasoning with unprecedented depth and nuance

Understands context and intent better, so it requires less prompting

Available immediately in AI Studio, Vertex AI, and Google Antigravity

Try it Now → https://gemini.google.com/

Meta has unveiled SAM Audio, the first unified multimodal model for audio separation that lets users isolate specific sounds from complex audio mixtures using text, visual, or time-based prompts. The release extends Meta's Segment Anything vision to audio, transforming how creators edit and manipulate sound.

Isolate any sound using text descriptions ("dog barking," "guitar riff")

Click on objects or people in video to extract their associated audio

Mark time segments where target sounds occur (industry-first "span prompting")

Combine multiple prompt types for precision control

Achieves state-of-the-art performance across speech, music, and sound effects

Try it Now → https://www.segment-anything.com/

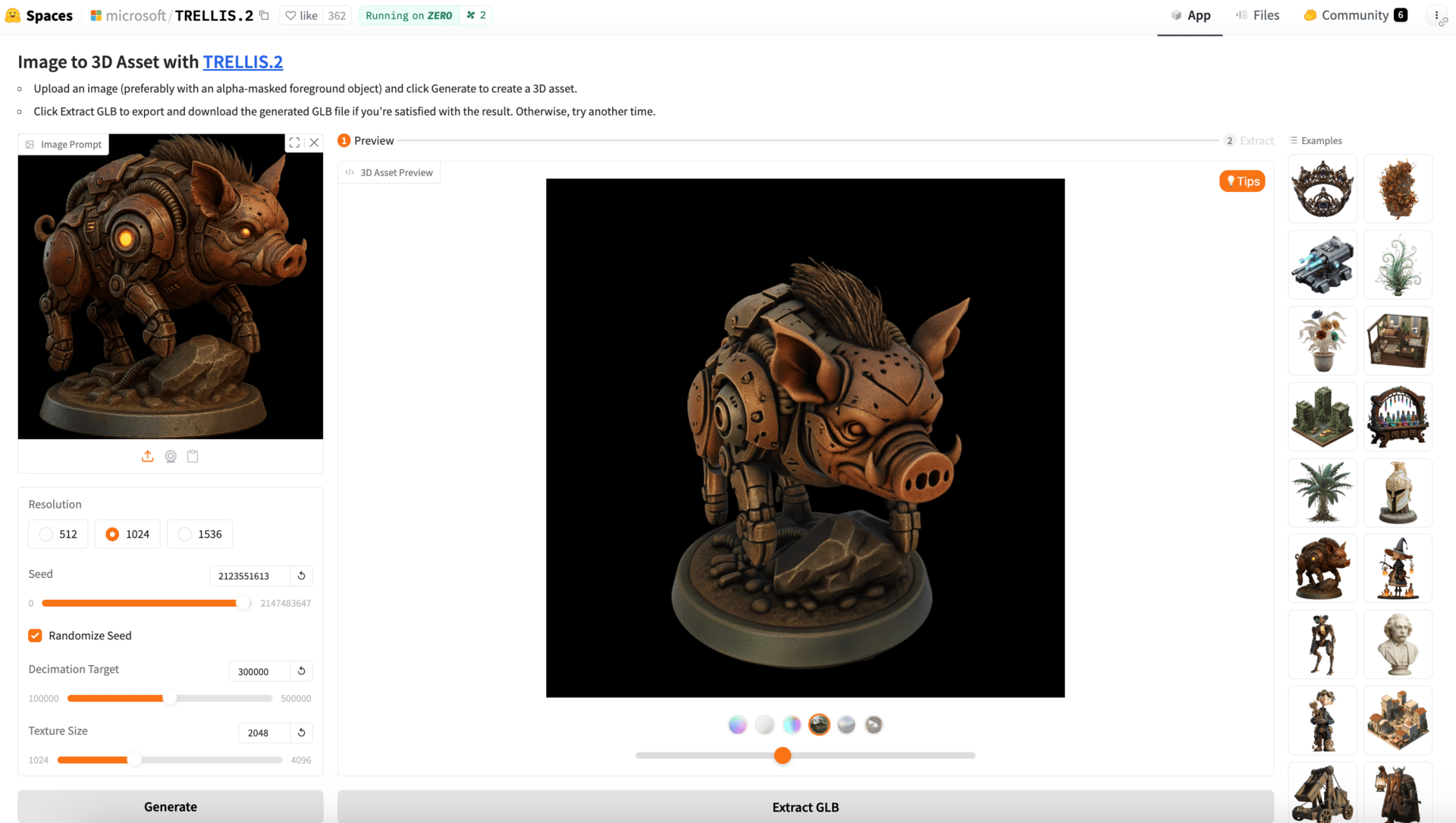

Microsoft has released Trellis 2, a 4-billion-parameter model that converts any image into high-fidelity 3D assets with full PBR materials in seconds. The open-source release represents a major breakthrough in image-to-3D generation, making professional 3D asset creation accessible to anyone.

Generates up to 1536³ resolution 3D models with full textures

Handles complex topologies including open surfaces and transparent materials

Exports to GLB, OBJ, and other industry-standard formats

Includes complete PBR materials (base color, metallic, roughness, opacity)

Try it Now → https://huggingface.co/spaces/microsoft/TRELLIS.2

GetStream has released Vision Agents, the first open-source framework for building AI that watches and responds to live video in real time. Unlike voice-only agents, Vision Agents can see what's happening, analyze it using computer vision, and provide intelligent feedback, opening entirely new use cases for AI coaching and monitoring.

Built video-first with native WebRTC support for true real-time streaming

Integrates with OpenAI Realtime, Gemini Live, and other leading models

Includes processors like YOLO for pose detection and object recognition

Provides turn detection, voice activity detection, and tool calling

Try it Now → https://github.com/GetStream/Vision-Agents

Nvidia has released Nemotron 3, a family of open models designed specifically for building multi-agent AI systems that need to work together at scale. The release includes advanced training data, reinforcement learning environments, and libraries, marking Nvidia's most comprehensive push into transparent, customizable AI for enterprises.

Nemotron 3 Nano delivers 4x higher throughput than the previous generation

Supports a 1-million-token context window for long-running agent workflows

Uses a hybrid Mamba-Transformer MoE architecture that activates only 3B of its 30B parameters

Released under an open license with complete model weights, datasets, and training recipes

Thanks for making it to the end! I put my heart into every email I send. I hope you are enjoying it. Let me know your thoughts so I can make the next one even better.

See you tomorrow :)

Dr. Alvaro Cintas