Good morning! Researchers have just mathematically confirmed the "AI Layoff Trap", a collective suicide pact where companies are forced to automate themselves into a bankrupt economy. I’ll also show you how to generate cinematic text-reveal videos with Veo 3.1 Light.

Plus, in today’s AI newsletter:

Anthropic launches "Claude for Word"

The AI Layoff Trap: A Mathematical Death Spiral

MiniMax has open-sourced M2.7

How to Generate Cinematic Text-Reveal Videos with Veo 3.1 Light

4 new AI tools worth trying

AI RESEARCH

Two researchers from UPenn and Boston University have published a paper proving that the current wave of AI automation is a classic Prisoner’s Dilemma. While individual companies save on costs by firing workers, they are simultaneously destroying the very consumer base they need to survive.

Every laid-off worker is a lost customer; as automation scales, the collective loss of purchasing power eventually bankrupts the companies that automated in the first place.

CEOs are stuck in a "Red Queen effect": if they don’t automate, competitors will undercut their prices and kill them immediately. If they do automate, they contribute to a total economic collapse later.

Real-world numbers are already confirming the trend: Block (formerly Square) cut nearly 5,000 employees, Salesforce replaced 4,000 agents with AI, and over 100,000 tech workers were laid off in 2025 alone.

Research shows that 80% of the US workforce holds jobs with tasks susceptible to AI automation, creating a massive "deadweight loss" where both workers and owners eventually lose.

It’s a structural trap built into the market. Because no voluntary agreement between companies can stop the race to automate, the math suggests that market forces alone cannot break this cycle. Without significant policy intervention like an automation tax, we are headed toward an economy where AI produces everything, but nobody has the money to buy any of it.
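To make the trap concrete, here is a minimal two-firm sketch of the Prisoner's Dilemma described above. The profit numbers are made-up assumptions chosen only to show the shape of the game; they are not taken from the paper, and the names `PAYOFF`, `best_response`, and `nash_equilibria` are hypothetical.

```python
# Illustrative per-firm profits. These numbers are assumptions for the
# sketch, NOT figures from the paper.
# Key: (my_choice, rival_choice) -> my profit
PAYOFF = {
    ("keep", "keep"): 3,          # both keep staff: consumer demand stays healthy
    ("keep", "automate"): 0,      # the rival undercuts my prices
    ("automate", "keep"): 5,      # I undercut the rival
    ("automate", "automate"): 1,  # both cut costs, but demand shrinks for everyone
}
CHOICES = ("keep", "automate")


def best_response(rival_choice: str) -> str:
    """Return the choice that maximizes my profit given the rival's choice."""
    return max(CHOICES, key=lambda mine: PAYOFF[(mine, rival_choice)])


def nash_equilibria() -> list[tuple[str, str]]:
    """Return every pair of choices where neither firm gains by switching alone."""
    # The game is symmetric, so the same payoff table serves both firms.
    return [
        (a, b)
        for a in CHOICES
        for b in CHOICES
        if best_response(b) == a and best_response(a) == b
    ]


if __name__ == "__main__":
    for rival in CHOICES:
        print(f"Rival chooses {rival!r}: my best response is {best_response(rival)!r}")
    print("Equilibria:", nash_equilibria())
    print("Profit each if both automate:", PAYOFF[("automate", "automate")])
    print("Profit each if both keep staff:", PAYOFF[("keep", "keep")])
```

With these assumed numbers, automating is the best response no matter what the rival does, so both firms end up automating even though each would earn more if both kept their staff. That gap is the kind of outcome a policy such as an automation tax would be trying to close.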

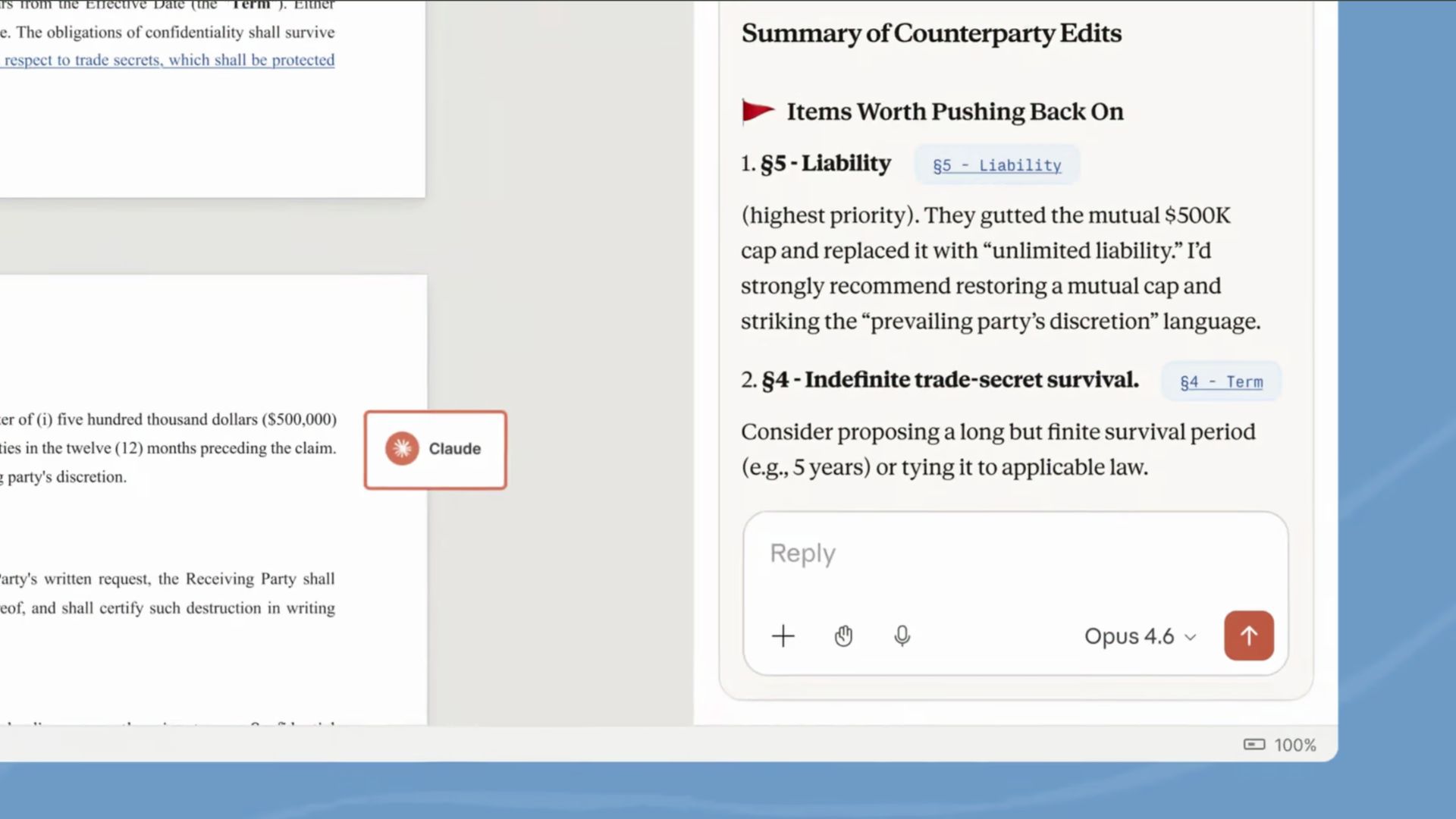

AI INTEGRATIONS

Anthropic has officially launched the "Claude for Word" add-in, allowing Team and Enterprise users to access Claude’s writing and reasoning capabilities directly within the Microsoft Word sidebar.

Draft, edit, and rewrite entire documents or specific sections without ever leaving the Word interface

Maintains strict formatting and layout integrity, ensuring that AI-generated text doesn't break your document’s design

Integrated with Microsoft’s native "Track Changes" feature, allowing users to review, accept, or reject AI edits just like they would from a human colleague

Positioned as a direct competitor to Microsoft’s own Copilot, specifically targeting professional workflows that require high-fidelity document revision

The battle for the "AI office" is heating up. By launching a dedicated Word integration that respects enterprise formatting and tracking, Anthropic is making a play for the billions of hours humans spend in documents. This move proves that "vibe coding" isn't just for software; it's coming for every professional document, memo, and contract on the planet.

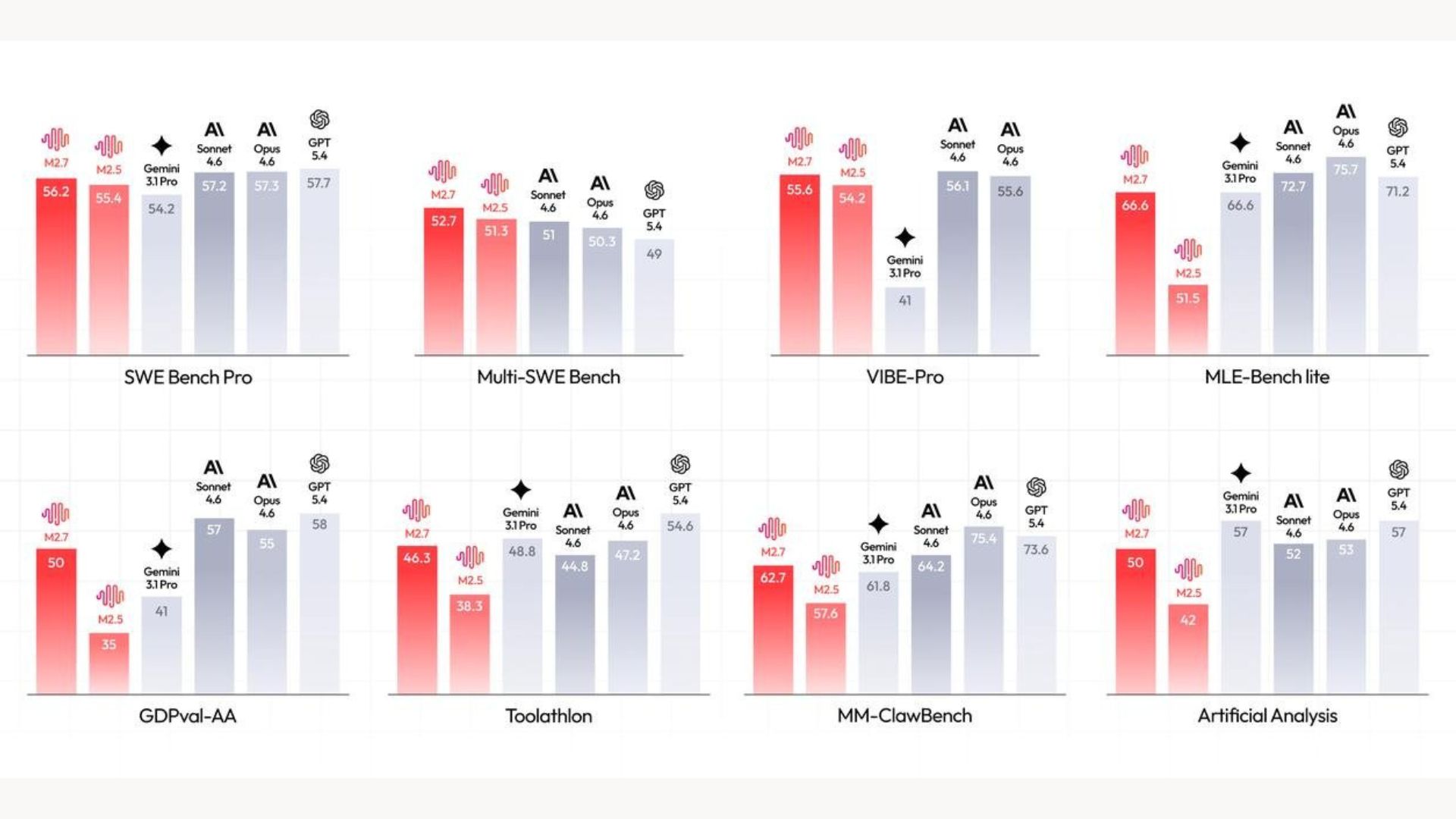

AI MODELS

MiniMax’s latest release, M2.7, is built specifically for agent-based workflows and complex engineering tasks. The standout feature is its self-evolution capability, allowing the model to improve its own performance by 30% through a cycle of autonomous testing and refinement.

Achieves a staggering 66.6% medal rate in machine learning competitions, proving its ability to solve high-level technical problems.

Delivers elite software engineering performance with a 56.22% score on SWE-Pro, putting it within striking distance of the world’s top proprietary models.

Specialized in multi-agent collaboration and "skill compliance," ensuring it follows complex instructions 97% of the time during tool-use tasks.

Features rapid "incident recovery" (under 3 minutes), making it a reliable choice for production-level engineering and real-world automation.

We are seeing the gap between open-source and closed-source models vanish in real time. By open-sourcing a model that can autonomously improve its own logic and engineering skills, MiniMax is handing developers a tool that doesn't just stay static; it gets better the more you use it. For "vibe coders" and agentic startups, this is a massive win for building reliable, self-correcting AI systems without the massive API costs.

HOW TO AI

🗂️ How to Generate Cinematic Text-Reveal Videos with Veo 3.1 Light

In this tutorial, you will learn how to bypass complicated API setups and instantly generate hyper-realistic text-reveal videos using Google's new Veo 3.1 Light model.

🧰 Who is This For

People creating short-form content (Reels, TikToks, Shorts)

Creators who want cinematic edits without heavy tools

Social media marketers making scroll-stopping videos

Personal brands posting storytelling content

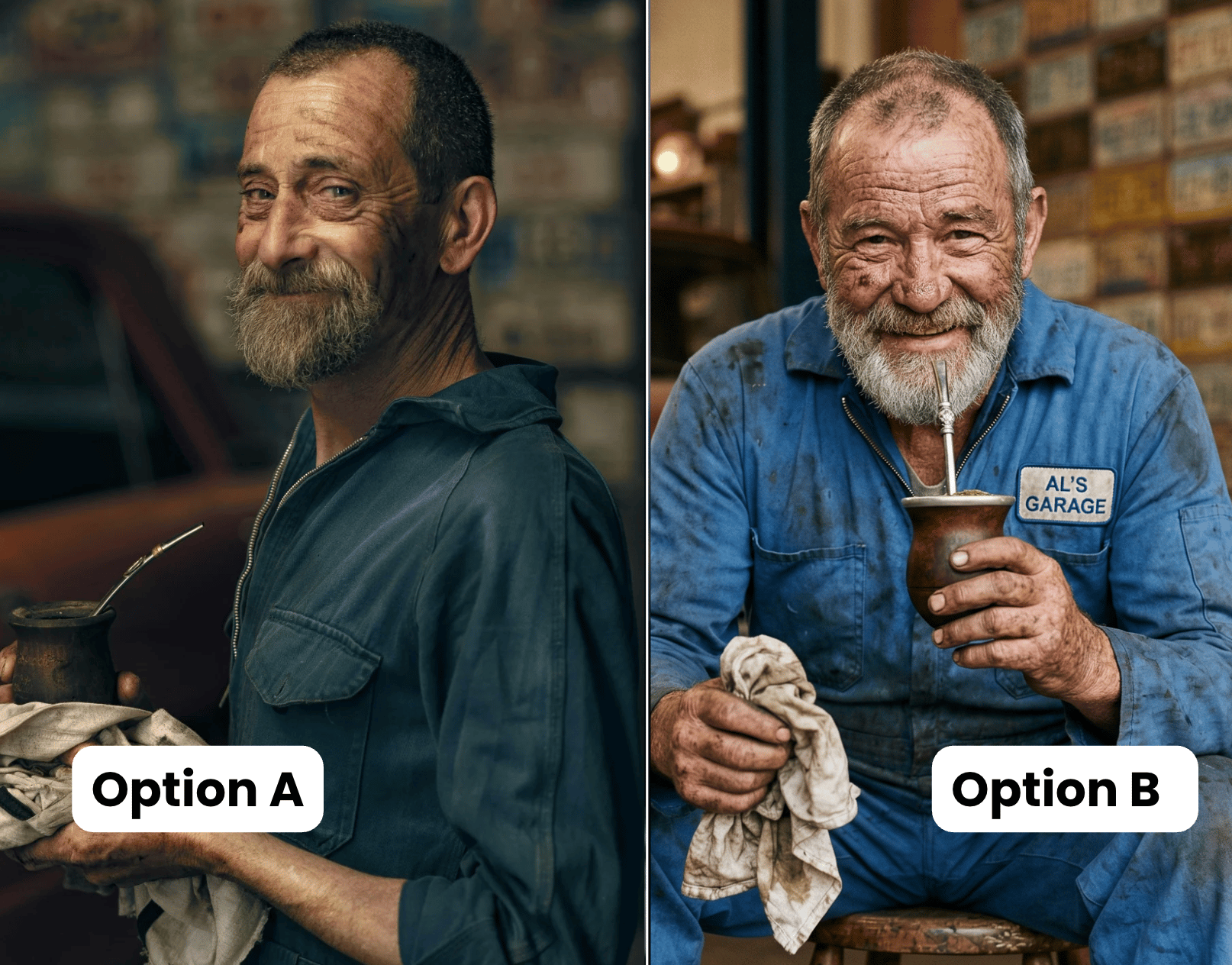

STEP 1: Set Your Scene with a Starting Frame

Head over to Google Flow and start a new project. To get the most photorealistic and physically accurate results, always start with a background image rather than generating from a blank canvas.

Upload a high-resolution starting frame from your computer, like a cinematic beach at night, a sweeping landscape, or even the classic Windows XP hill. This gives the AI's physics engine a realistic environment to ground the text in.

STEP 2: Craft the Perfect Material Prompt

Instead of using the more complex Type Motion demo app, we are going to use a single, highly detailed paragraph prompt.

Prompt:

“[ETERNITY / APPROACH / MYSTIC] in a [SCRIPT FONT / SANS SERIF / HANDWRITTEN] style seamlessly materializes as a physical 3D object within the attached scene. The letters must be realistically sculpted from [SOFT WHITE VAPOR / GLOWING MOLTEN EMBERS / TURBULENT LIQUID DROPLETS], inheriting the exact lighting and textures of the environment. The motion should be a smooth reveal, appearing as if the text is being [SHAPED BY THE WIND / ERUPTING FROM THE HEAT / BLASTED BY A HIDDEN CURRENT] to create a high-quality, atmospheric transition.”

You need to define four key elements: the exact text you want to reveal, the typography style (like a sleek sans-serif), the physical material the text is made of, and exactly how it animates into existence.

Crucial tip: Keep the materials grounded in reality (like water, stone, or cloud) rather than impossible physics like "pure light." The model is trained on real-world video physics, so it struggles to render elements that cannot exist in nature.
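If you plan to make several variations, the four slots in the prompt can be filled in with a small helper instead of editing the paragraph by hand. This is only a sketch: `TextReveal`, `TEMPLATE`, and `to_prompt` are hypothetical names, and the script does nothing more than build the string you would paste into Flow. It does not call any Google API.

```python
from dataclasses import dataclass

# The prompt from this step, with its four bracketed slots turned into
# named placeholders.
TEMPLATE = (
    "{text} in a {font} style seamlessly materializes as a physical 3D object "
    "within the attached scene. The letters must be realistically sculpted from "
    "{material}, inheriting the exact lighting and textures of the environment. "
    "The motion should be a smooth reveal, appearing as if the text is being "
    "{motion} to create a high-quality, atmospheric transition."
)


@dataclass
class TextReveal:
    """The four elements the prompt needs (hypothetical helper)."""

    text: str      # the exact word to reveal
    font: str      # typography style
    material: str  # physical material the letters are made of
    motion: str    # how the text animates into existence

    def to_prompt(self) -> str:
        """Fill the template with this reveal's four elements."""
        return TEMPLATE.format(
            text=self.text.strip(),
            font=self.font.strip(),
            material=self.material.strip(),
            motion=self.motion.strip(),
        )


if __name__ == "__main__":
    reveal = TextReveal(
        text="ETERNITY",
        font="script font",
        material="soft white vapor",
        motion="shaped by the wind",
    )
    print(reveal.to_prompt())
```

Swapping one field at a time (say, the material) makes it easy to compare variations while keeping everything else in the prompt identical.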

STEP 3: Configure Veo 3.1 Light and Generate

With your image and prompt ready, navigate to the video section. Set your aspect ratio to 16:9 for that cinematic wide shot, and choose to generate a couple of variations at once. For the model engine, select Veo 3.1 Light. Even though Fast and Pro variations exist, the Light model is incredibly affordable (and can be used with the 100 free credits Google gives users every month) while remaining just as capable for this task. Hit generate and let the model work its magic for about two to three minutes.

STEP 4: Review, Refine, and Export

Once generated, preview your variations. You will notice the video automatically includes natively generated background music. If the physics and textures look right, you can download the clip in your preferred resolution. If you want to push the visual fidelity further, use Google Flow's built-in tools to extend the video's duration, alter the camera angle, or adjust the camera movement before exporting your final asset.

Apple is testing four AI glasses designs with rectangular and oval frames, multiple colors, and a camera system with vertically oriented oval lenses.

Anthropic met with Christian leaders in March to seek input on Claude's moral and spiritual development and on whether it could be considered a “child of God”.

Analysts and researchers say Google's TurboQuant compression algorithm, which is designed to make LLMs more efficient, is more likely to expand memory chip demand than reduce it.

Alibaba and China Telecom have launched a data center in southern China powered by 10,000 of Alibaba's Zhenwu chips, which are designed for AI training and inference.

4 NEW AI TOOLS WORTH TRYING

🔥 Muse Spark: Meta’s new AI for personal, context-aware agents

💎 Gemma 4: Google’s powerful small AI model

🎥 Avatar V: HeyGen’s AI that creates high-quality avatar videos

🧠 PikaStream 1.0: turns AI agents into talking, face-to-face video bots

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying them. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas