Good morning! Anthropic just dropped Claude Opus 4.7, a model that is both a massive step forward and a deliberate exercise in restraint as the company prepares for its true behemoth: Claude Mythos. I’ll also show you how to generate viral marketing assets using AI.

In today’s AI newsletter:

Anthropic Releases Opus 4.7

OpenAI Unveils GPT-Rosalind for Scientific Discovery

Alibaba unveils Qwen3.6-35B-A3B

How to Generate Viral Marketing Assets using Pippit AI

4 new AI tools worth trying

AI MODELS

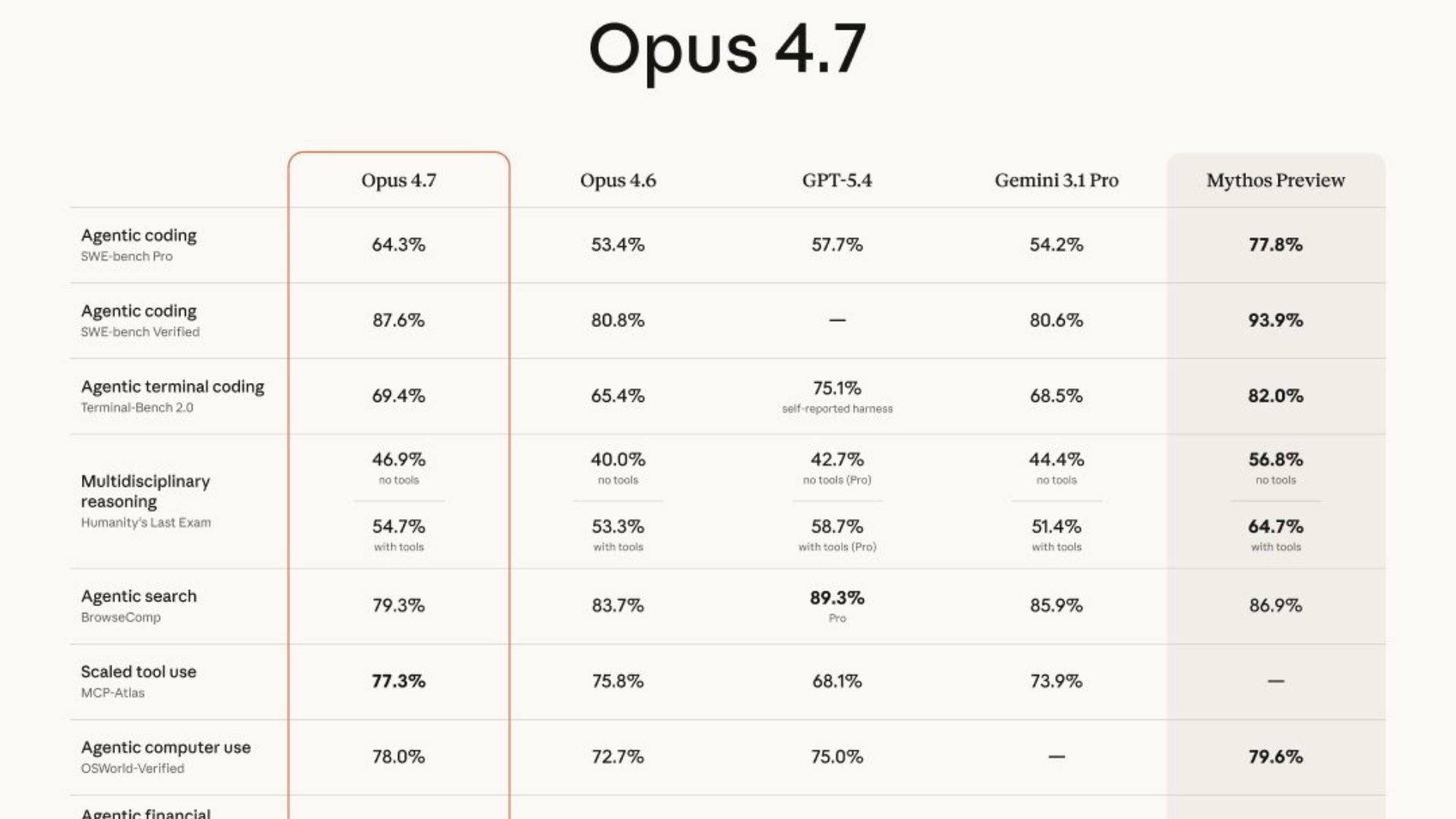

Anthropic has officially launched Claude Opus 4.7, a major upgrade designed for the burgeoning agentic economy. While it beats GPT-5.4 on several key benchmarks, including finance and tool use, the real story is that Anthropic has intentionally "dialed down" its power compared to the company's restricted Mythos model to ensure safety.

Substantial improvements in coding and instruction-following; it interprets prompts more literally, requiring less back-and-forth but more precise instructions.

A massive 3x vision upgrade allows the model to process high-resolution images up to 3.75 megapixels, a game-changer for AI agents analyzing dense UI screenshots or technical diagrams.

It’s a "practical frontier" model optimized for reliability and long-horizon autonomy: it now self-validates outputs and recovers from tool failures instead of freezing.

Pricing remains at $5/$25 per million tokens via API, though a new tokenizer may increase effective costs by up to 35% depending on your content mix.
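To see what that tokenizer caveat could mean for a bill, here is a minimal sketch of the arithmetic. It assumes the $5/$25 figures are input/output prices per million tokens and that the "up to 35%" increase shows up as proportionally more tokens for the same content. The function name and the sample workload are hypothetical, not part of any Anthropic SDK.

```python
# Assumed prices from the newsletter: $5 per million input tokens,
# $25 per million output tokens (the input/output split is an assumption).
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def estimate_cost(input_tokens, output_tokens, tokenizer_inflation=0.0):
    """Estimate API cost in dollars.

    tokenizer_inflation is the fractional increase in token count caused by
    the new tokenizer (0.35 models the quoted worst case of 35%).
    """
    scale = 1.0 + tokenizer_inflation
    input_cost = input_tokens * scale / 1_000_000 * INPUT_PRICE_PER_MTOK
    output_cost = output_tokens * scale / 1_000_000 * OUTPUT_PRICE_PER_MTOK
    return input_cost + output_cost


# Hypothetical workload: 2M input tokens and 500K output tokens,
# counted with the old tokenizer.
baseline = estimate_cost(2_000_000, 500_000)
worst_case = estimate_cost(2_000_000, 500_000, tokenizer_inflation=0.35)
print(f"baseline: ${baseline:.2f}, worst case: ${worst_case:.2f}")
```

The list price per token does not change in this model; only the number of tokens billed for the same text does, which is why the effective cost scales with the inflation factor.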

Anthropic is making a unique marketing bet: that enterprise leaders care more about safety and reliability than raw, unchecked power. By releasing a "deliberately nerfed" model that still dominates in practical engineering and finance, they are positioning themselves as the responsible adults in the room. They’re effectively using Opus 4.7 to live-test the guardrails for Mythos, making sure that when the ultimate model drops, it doesn't accidentally hand out zero-day exploits to everyone.

AI MODELS

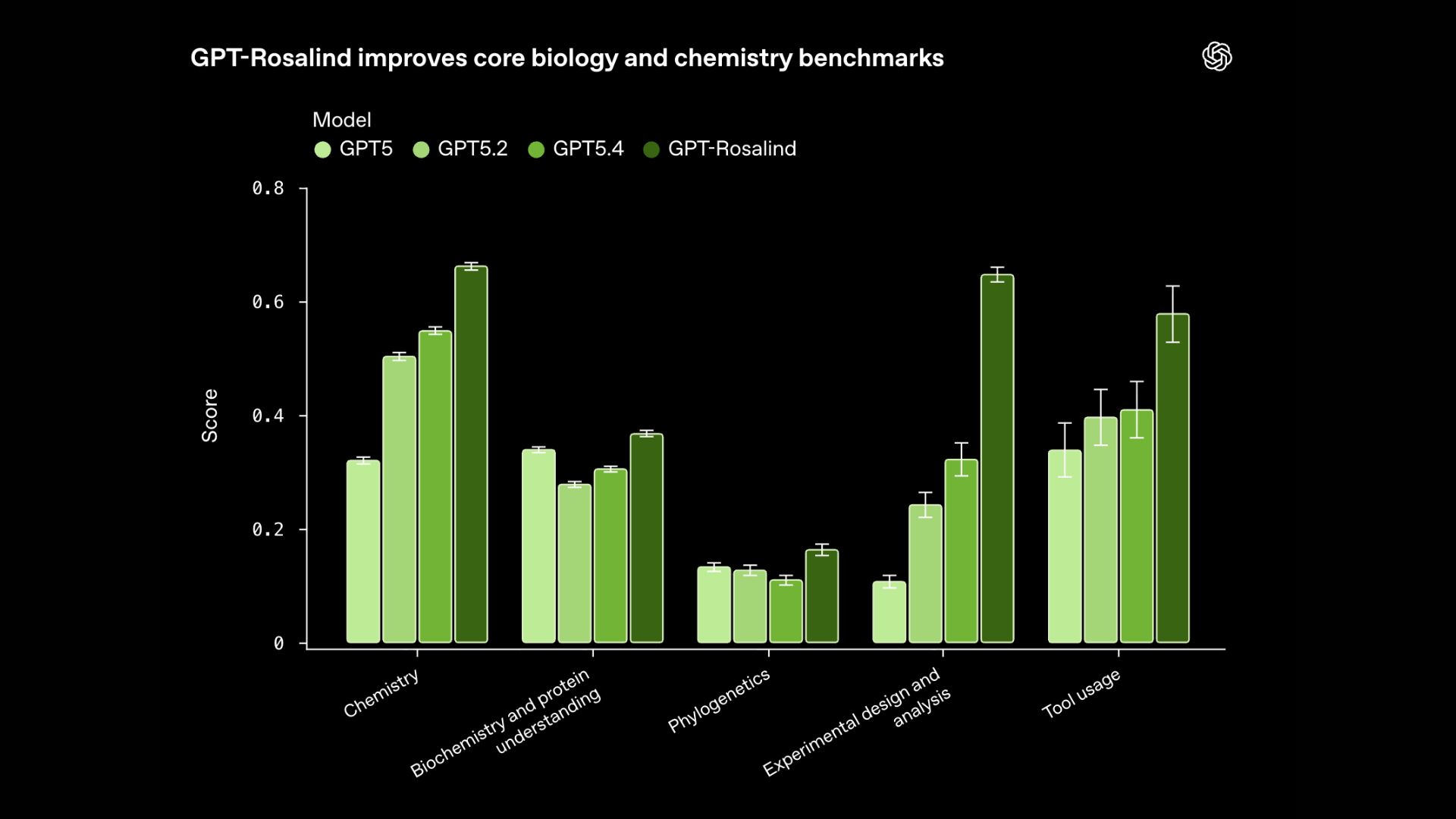

OpenAI's GPT-Rosalind, named after Rosalind Franklin, is a purpose-built model designed to shatter the data bottlenecks in drug discovery and genomics. Unlike general-purpose chatbots, Rosalind is a specialized "science-first" reasoning engine built for high-stakes lab workflows.

Purpose-built for genomics, protein engineering, and chemistry, aiming to slash the 10–15 year timeline currently required for drug regulatory approval.

Scored higher than 95% of human experts in predicting RNA sequence behavior during performance testing.

Launches alongside a "Life Sciences research plugin" that connects the AI directly to over 50 massive scientific databases and protein structures.

Access is strictly gated; for safety reasons, OpenAI is only granting licenses to vetted organizations like Moderna, Amgen, and the Allen Institute.

Signals a major shift in OpenAI’s strategy away from a single "all-knowing" AGI toward highly specialized, domain-specific frontier models.

We’ve officially entered the era of specialized frontier AI. By building a model that understands the "language" of DNA and proteins better than most humans, OpenAI is positioning itself as the infrastructure for the next generation of medicine. However, the decision to gate access to this model, much like GPT-5.4-Cyber, proves that the most powerful AI of 2026 is deemed too dangerous for the general public, marking a permanent end to the "open-access" era of frontier intelligence.

AI MODELS

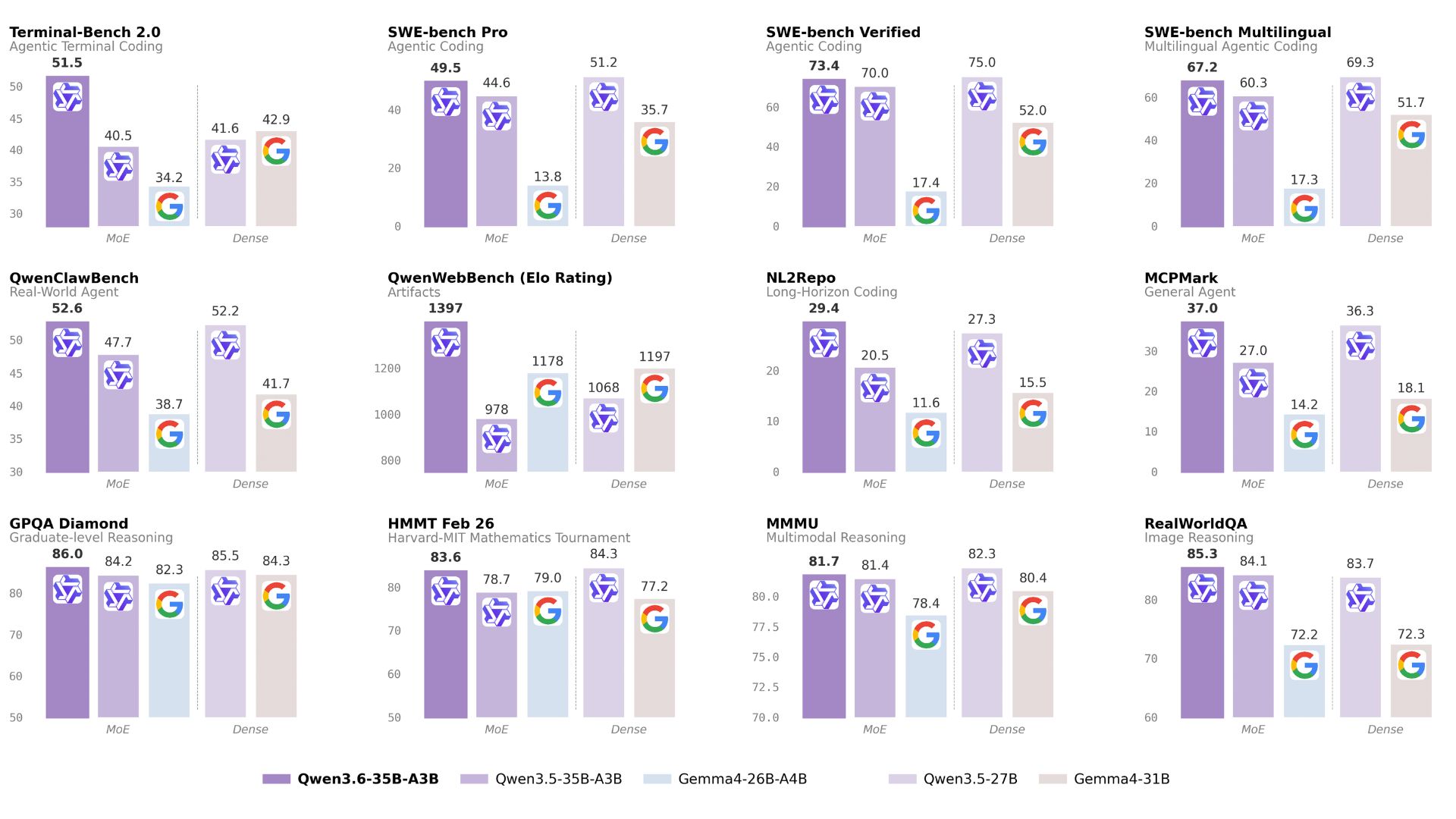

In a head-to-head SVG coding challenge, Alibaba’s new Qwen3.6-35B-A3B model, running locally on a laptop, beat out Anthropic’s massive proprietary Claude Opus 4.7. The task? Drawing a pelican riding a bicycle.

A 21GB quantized version of Qwen3.6-35B-A3B, running on a MacBook Pro M5, produced a structurally correct bicycle frame, added atmospheric details like clouds, and included an accurate caption.

In contrast, Claude Opus 4.7 struggled with basic geometry, failing the bicycle frame twice, even when pushed with "max" thinking levels enabled.

To prove Qwen wasn't just "training for the test," Simon Willison, the developer behind the pelican benchmark, ran a secret backup benchmark: a flamingo riding a unicycle. Qwen won again, adding "charisma" like sunglasses, a bowtie, and a cigarette.

The results break a long-standing correlation where model size usually dictated SVG quality, showing that smaller, highly efficient models are catching up in niche spatial reasoning.
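For a rough sense of how a 35B-parameter model fits in 21GB, the back-of-the-envelope calculation below estimates the average bits stored per weight. It is a sketch under stated assumptions: that "35B" in the model name is the total parameter count, that 21GB means 21 × 10^9 bytes, and that the file is almost entirely weights. The function name is hypothetical.

```python
def bits_per_weight(file_size_gb, params_billions):
    """Average bits per parameter, assuming the file holds only weights."""
    total_bits = file_size_gb * 1e9 * 8      # assumes decimal gigabytes
    total_params = params_billions * 1e9
    return total_bits / total_params


# Figures from the newsletter: a 21GB file for a model named "35B".
quantized = bits_per_weight(21, 35)

# For comparison: the same parameter count stored at 16 bits per weight.
full_precision_gb = 35e9 * 16 / 8 / 1e9

print(f"about {quantized:.1f} bits per weight")
print(f"16-bit weights would need about {full_precision_gb:.0f} GB")
```

Under these assumptions the quantized file works out to a little under 5 bits per weight, versus roughly 70GB for the same weights at 16 bits, which is what makes a laptop run plausible.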

This result is a massive win for local AI and the "vibe coding" movement. If a quantized model running on a consumer laptop can outperform the world's most expensive proprietary models at complex spatial coding, the argument for keeping workloads local, and the power of open-weights models like Qwen, becomes undeniable. It’s proof that in the era of agentic AI, "bigger" is no longer a guaranteed win.

HOW TO AI

🗂️ How to Generate Viral Marketing Assets using Pippit AI

In this tutorial, you will learn how to instantly generate AI talking photos, avatar videos, and high-quality product showcases from a single text prompt using Pippit AI's multimodal creation suite and the newly integrated Seedream model.

🧰 Who is This For

Content creators looking to scale their channel without hiring actors

Researchers and marketers analyzing and replicating proven viral video formulas

Faceless channel owners who need consistent, cinematic AI video generation

Anyone who wants AI to handle scripting, storyboarding, and voiceovers

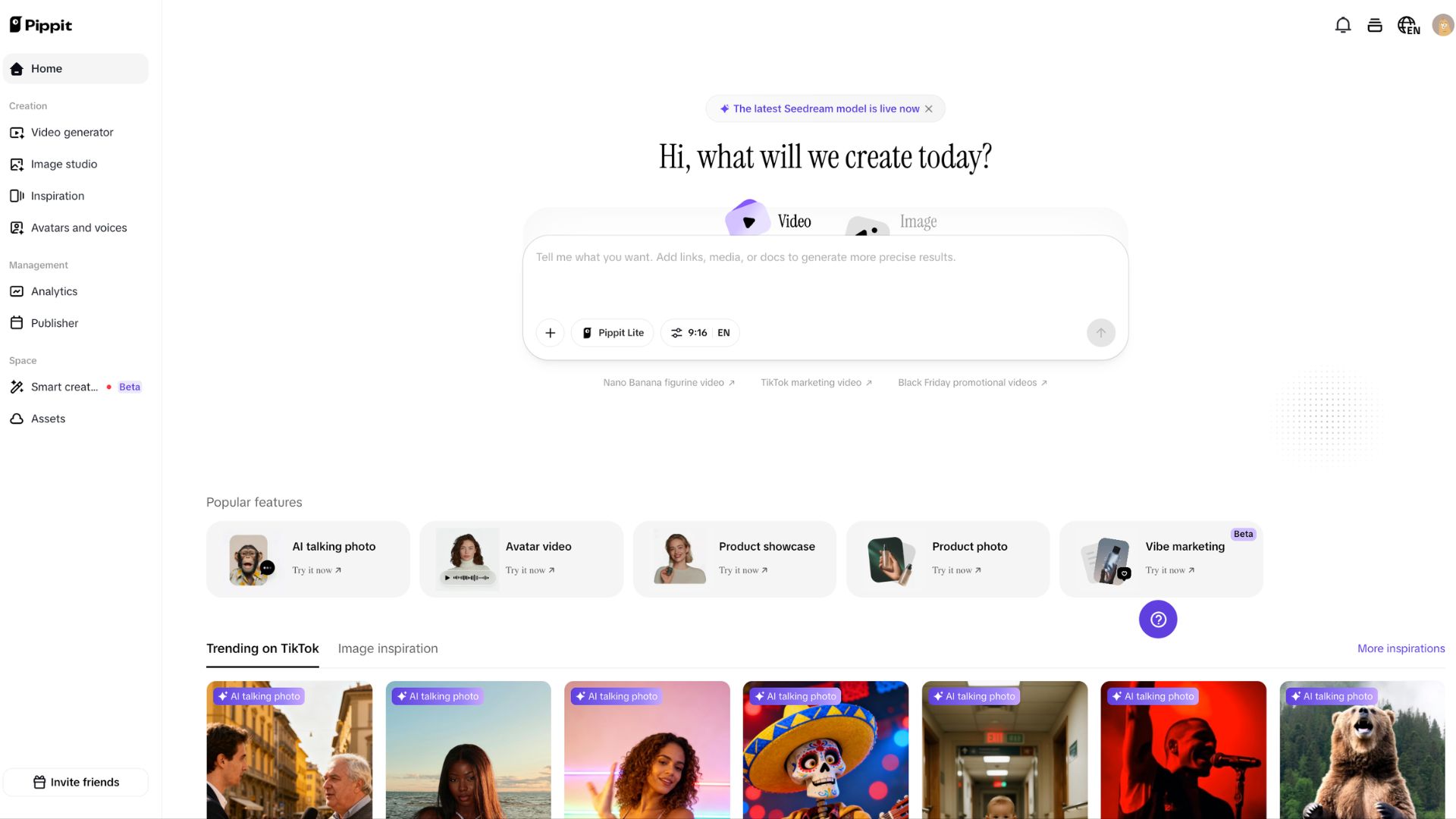

STEP 1: Access the Pippit Creation Hub

Head over to the pippit.ai home dashboard. This interface is a single workspace for both image and video generation. Check the top banner, which confirms that the latest Seedream model is active for your generations, giving you access to state-of-the-art motion and visual fidelity.

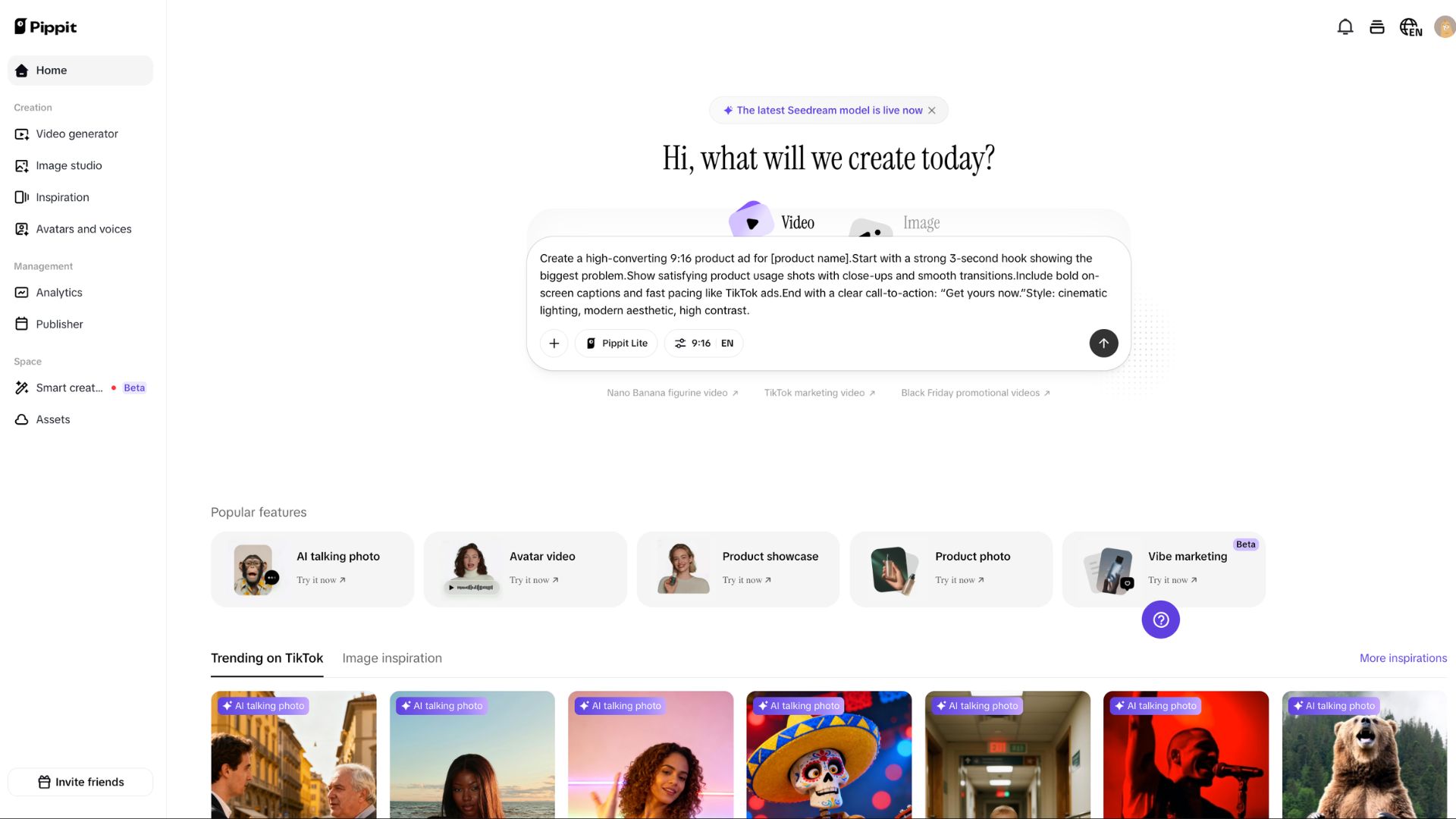

STEP 2: Configure Your Core Prompt

Locate the main generation box in the center of the screen, which asks, "Hi, what will we create today?" Toggle between the "Video" and "Image" tabs depending on your desired output.

You can type a descriptive text prompt, but for more precise results in marketing campaigns, use the attachment buttons to add external links, reference media, or strategy documents directly into the context window.

STEP 3: Set Formatting and Select Features

Before generating, use the toggle buttons below the text box to configure your output. You can select your model and adjust the aspect ratio (such as 9:16, which is perfect for vertical shorts or social media marketing). Alternatively, if you need a specific type of asset, scroll down to the "Popular features" section to instantly launch specialized tools like "AI talking photo," "Avatar video," or "Product showcase" without needing complex prompting.

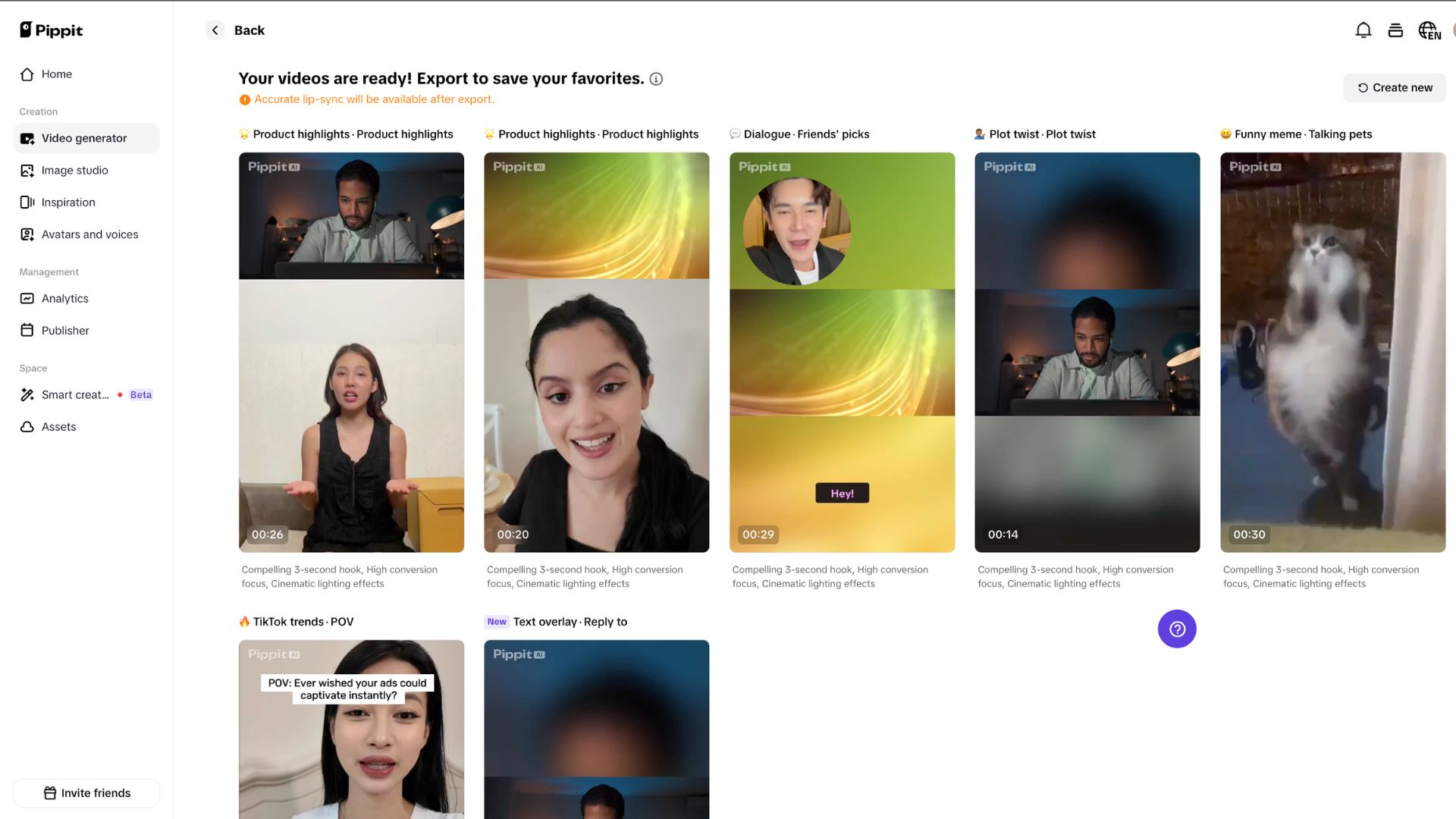

STEP 4: Generate and Deploy

Once your parameters are set, hit the generate arrow. Pippit will process your inputs, whether you are creating a "vibe marketing" asset or a Black Friday promotional video, and output the final media. You can then download these high-resolution assets to deploy across your social channels to test different hooks and drive audience engagement.

OpenAI updates its Codex desktop app with features like computer control, an in-app browser, image generation, automation memory, plugin support, and more.

Google updates AI Mode in Chrome, letting users open links side by side with AI Mode on desktop; users can search across multiple tabs on desktop and mobile.

Google announces a Gemini app integration between Personal Intelligence and Nano Banana 2 to let the AI image generator “automatically reflect” users' tastes.

Apple plans to send ~200 people from its Siri team, a group internally known as a laggard, to an AI coding bootcamp.

💻 Gemini for Mac: Google’s Gemini app, now built for Mac

🌎 Lyra 2.0: NVIDIA AI that turns text into interactive 3D scenes

⚙️ HeyGen CLI: Create videos straight from your terminal with AI

💻 Holo 3: Open AI agent that can use computers like a human

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas