Good morning! Anthropic just unveiled a powerful, unreleased AI model that autonomously found critical flaws in every major operating system and web browser. Plus, I’ll show you how to generate AI images locally and completely free.

In today’s AI newsletter:

Anthropic’s "Mythos Preview" Hacks Every Major OS

Z.ai Drops GLM-5.1: The 8-Hour Autonomous AI Worker

Intel Joins Musk’s Massive "Terafab" AI Chip Project

How to Generate AI Images Locally

4 new AI tools worth trying

AI MODELS

Anthropic has quietly debuted "Project Glasswing," a private cybersecurity partnership with tech giants like Apple, Google, Microsoft, and Nvidia. The project is powered by a new, highly restricted general-purpose model called Claude Mythos Preview.

The model autonomously found "thousands of high-severity vulnerabilities" across every major operating system and web browser, even developing related exploits with zero human steering.

Anthropic is strictly limiting access to "defensive security" partners and the government, refusing to release it to the public so bad actors can't use it for offensive attacks.

Despite not being explicitly trained for cybersecurity, the model's advanced "agentic coding and reasoning skills" allow it to hunt for critical weaknesses at a massive scale.

Partners will use Mythos Preview to give their cyber defenders a head start, identifying and patching system-level vulnerabilities before adversaries can find them.

AI has officially crossed the threshold from assisting with code to autonomously discovering massive zero-day exploits. By restricting Mythos Preview exclusively to massive tech conglomerates and governments, Anthropic is treating its new frontier model like a digital weapon, because in the hands of a bad actor, it absolutely is one.

AI MODELS

Z.ai (Zhipu AI) just unveiled GLM-5.1, a 754-billion-parameter Mixture-of-Experts model released under a permissive MIT License. While competitors are fiercely battling over raw speed, Z.ai optimized this model for endurance: it is designed to work completely autonomously for up to eight hours on a single task.

The model can execute a staggering 1,700 tool calls and steps in a single run without suffering from "strategy drift" or forgetting its original goal.

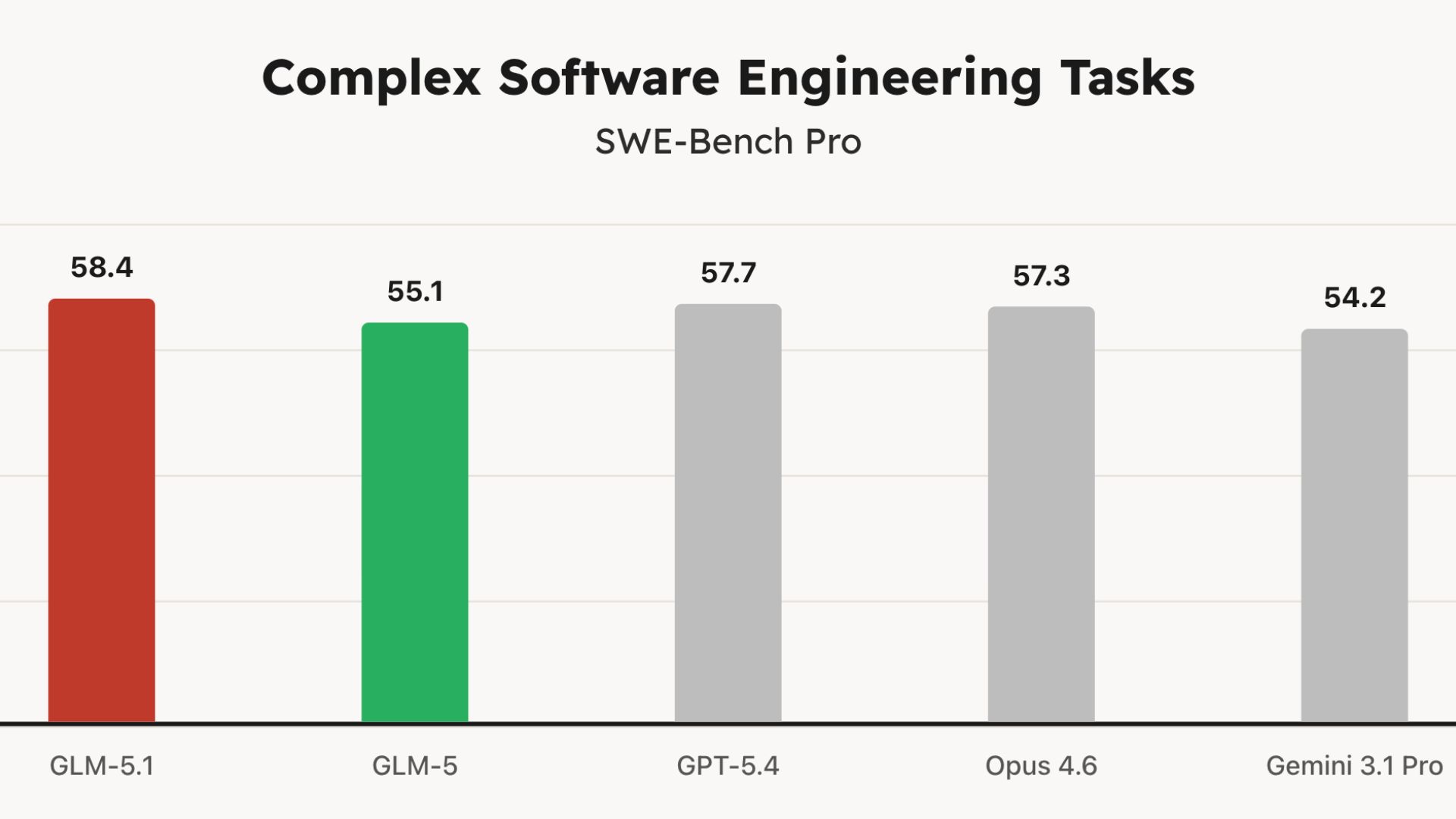

It officially crushed the SWE-Bench Pro coding benchmark with a score of 58.4, dethroning heavyweights like OpenAI's GPT-5.4 and Anthropic's Claude Opus 4.6.

In a wild 8-hour test, GLM-5.1 was tasked with building a Linux-style desktop environment from scratch. It autonomously coded a file browser, terminal, text editor, system monitor, and functional games, iteratively testing and polishing the UI until it was complete.

It functions as its own R&D department. The model can write code, compile it, run it in a live Docker container, analyze where it bottlenecked, and autonomously rewrite its own architecture to fix the problem.

We are officially shifting from "vibe coding" to "agentic engineering." The new metric for frontier AI isn't just how smart it is in a single prompt, but how long it can hold a thought and execute a complex project without human supervision. By giving anyone free access to an AI that can reliably work an entire 8-hour shift, Z.ai is fundamentally changing the global software development lifecycle.

AI NEWS

Intel announced it is joining Elon Musk's ambitious "Terafab" chip complex project alongside SpaceX and Tesla, sending the chipmaker's shares up more than 2%.

The partnership aims to produce a staggering 1 terawatt of compute per year to power Musk's robotics and data center goals.

Musk recently revealed plans to build two advanced chip factories in Austin, Texas: one for Tesla's cars and humanoid robots, and another for space-based AI data centers.

The deal is a massive win for Intel CEO Lip-Bu Tan's aggressive turnaround strategy, which is heavily focused on pushing its 18A manufacturing technology to external customers.

In related news, SpaceX (which recently merged with xAI) has confidentially filed for what could be the largest U.S. IPO on record later this year.

Intel has been struggling to catch up in the AI race, but securing a partnership to build the physical infrastructure for Musk's futuristic space and robotics empires is a massive vote of confidence. It proves Intel's foundry business can still land the most critical, high-stakes projects in the industry as it attempts a historic corporate turnaround.

HOW TO AI

🗂️ How to Generate AI Images Locally

In this tutorial, you will learn how to enable image generation within LM Studio by seamlessly connecting a local LLM to Stable Diffusion WebUI using an MCP server. This allows your text-based AI to autonomously prompt and output high-quality images directly in your chat interface.

🧰 Who is This For

People who want to run AI locally (no cloud)

Developers experimenting with local LLMs

Privacy-focused users avoiding data sharing

Students learning how models actually run

STEP 1: Install Stability Matrix and Enable the API

First, ensure you have Node.js installed on your computer. Then, instead of messing with complex terminal installations, download Stability Matrix, an excellent package manager for AI tools. Use it to install Stable Diffusion WebUI Reforge and download a checkpoint model (like EpicRealism) in the .safetensors format. Before launching, go to the package settings and add the --api argument. This step is critical because it exposes the WebUI's API so LM Studio can communicate with it behind the scenes.
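
Once the WebUI is running with --api, a quick way to confirm the API is actually exposed is to send it one small request. Here is a minimal Python sketch. It assumes the WebUI listens on http://127.0.0.1:7860 and offers the /sdapi/v1/txt2img endpoint that AUTOMATIC1111-style WebUIs provide. Your Reforge install may use a different port, so treat both as assumptions and check your own launch log.

```python
import base64
import json
import urllib.request

# Assumption: the WebUI listens on its usual default local address.
WEBUI_URL = "http://127.0.0.1:7860"


def build_payload(prompt, steps=22, width=1024, height=1024):
    """Build the JSON body for a single txt2img request."""
    return {"prompt": prompt, "steps": steps, "width": width, "height": height}


def generate(prompt, out_path="test.png", base_url=WEBUI_URL):
    """Send one txt2img request and save the first returned image."""
    body = json.dumps(build_payload(prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/sdapi/v1/txt2img",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=300) as response:
        result = json.load(response)
    # The API returns each image as a base64-encoded string.
    with open(out_path, "wb") as handle:
        handle.write(base64.b64decode(result["images"][0]))
    return out_path
```

With the WebUI running, calling generate("an orange cat") should write test.png next to the script. A connection error usually means the --api argument is missing or the port is different.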

STEP 2: Run a Test Batch and Grab Your Settings

Launch your WebUI Reforge instance and open the local URL in your browser. Generate a quick test image, like an orange cat, adjusting your sampling steps (e.g., 22 steps) and resolution (e.g., 1024x1024) until you find the sweet spot for your specific model.

Generating this test is important because it outputs a clean block of configuration text at the bottom of the image generation data. Copy these settings (leaving out the randomized seed number) so you can feed them to your LLM later to ensure consistent quality.
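
If you would rather not strip the seed by hand, the copied settings line can be cleaned up with a few lines of Python. This is a rough sketch that assumes the settings come as one "Key: value, Key: value" line. The sampler and CFG values in the example are made up for illustration, and the naive split will break on values that themselves contain a comma.

```python
def parse_settings(line, drop=("Seed",)):
    """Split a 'Key: value, Key: value' settings line into a dict."""
    settings = {}
    for part in line.split(", "):
        key, sep, value = part.partition(": ")
        key = key.strip()
        # Skip fragments without a 'Key: value' shape and any dropped keys.
        if sep and key not in drop:
            settings[key] = value.strip()
    return settings


def format_settings(settings):
    """Turn the dict back into one line for pasting into a prompt."""
    return ", ".join(f"{key}: {value}" for key, value in settings.items())


# Illustrative line only; copy the real one from your own test image.
example = "Steps: 22, Sampler: Euler a, CFG scale: 7, Seed: 1234567890, Size: 1024x1024"
print(format_settings(parse_settings(example)))
# prints: Steps: 22, Sampler: Euler a, CFG scale: 7, Size: 1024x1024
```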

STEP 3: Build the Local MCP Server

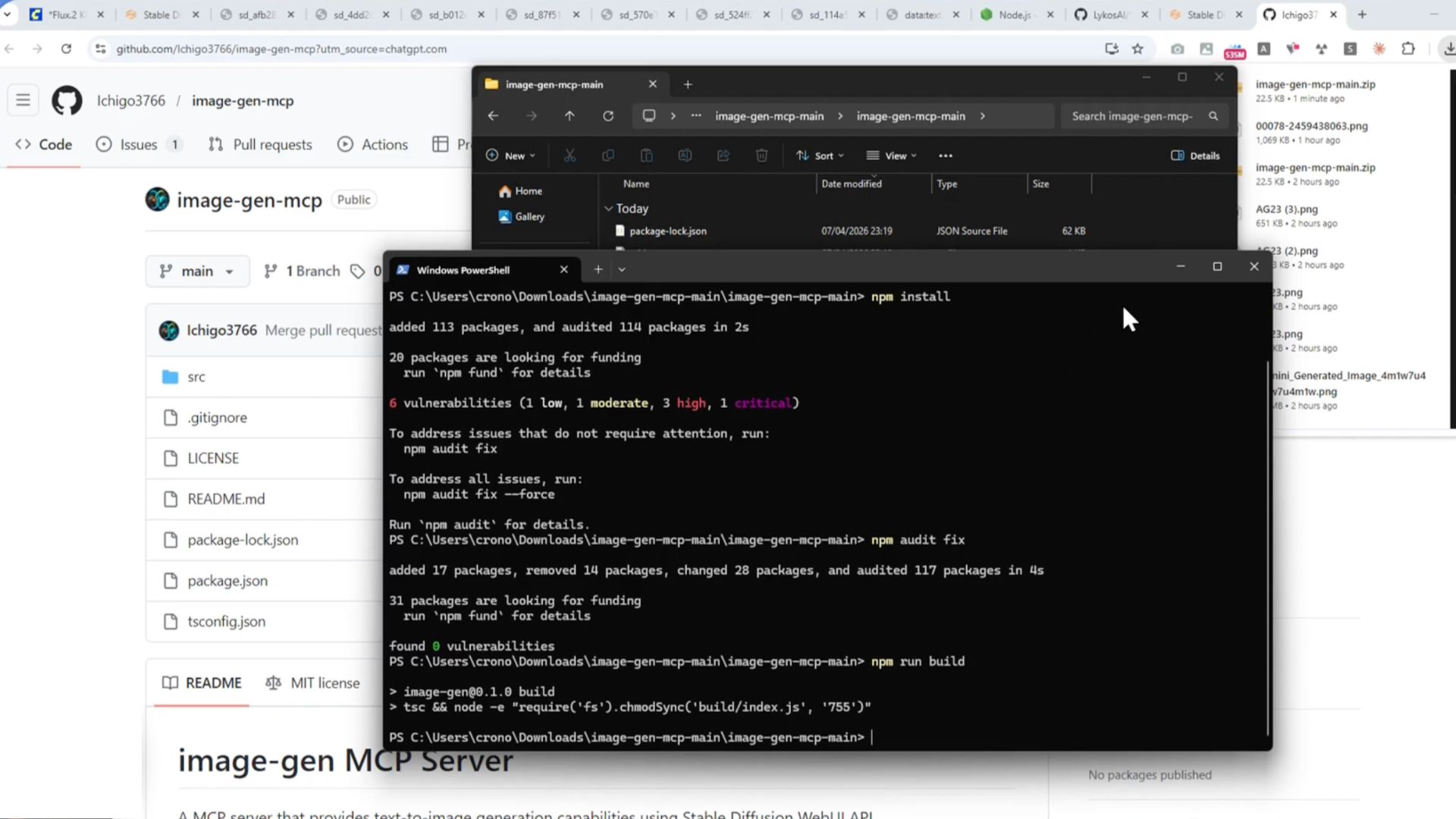

To bridge LM Studio and Stable Diffusion, you need a Model Context Protocol (MCP) server. Download the image generation MCP repository from GitHub and extract the folder. Open a terminal inside that folder and run npm install to grab the dependencies (use npm audit fix if you see minor vulnerability warnings).

Finally, run npm run build. This creates a new "build" folder containing the index.js file that LM Studio will use to execute the connection. While you are in this directory, create a blank folder named "outputs" to catch your final generated images.
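
Before moving on, it can help to confirm the build actually produced index.js and to grab the absolute paths you will need in the next step. The Python sketch below does both and creates the "outputs" folder if it is missing. The function name is hypothetical, and it assumes the build lands in build/index.js as described above.

```python
from pathlib import Path


def prepare_server_folder(repo_dir):
    """Check for build/index.js and create the outputs folder."""
    repo = Path(repo_dir)
    index_js = repo / "build" / "index.js"
    if not index_js.is_file():
        raise FileNotFoundError(f"{index_js} not found; run 'npm run build' first")
    outputs = repo / "outputs"
    # exist_ok avoids an error if you already created the folder by hand.
    outputs.mkdir(exist_ok=True)
    # Absolute paths are what the mcp.json step needs.
    return index_js.resolve(), outputs.resolve()
```

Call it with the path of the extracted repository folder and print the two returned paths so you can copy them later.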

STEP 4: Connect LM Studio and Prompt Your Agent

Open LM Studio, navigate to the integrations tab, and click to edit your mcp.json file. You need to map three things in this code block: the exact path of your new index.js file, the local URL of your Stable Diffusion WebUI, and the path to your new "outputs" folder.

Crucial tip: On Windows, use double backslashes (\\) in your file paths, because a single backslash starts an escape sequence in JSON and will cause syntax errors! Save the file, load a local model that supports tool-calling (look for the blue hammer icon), and ensure the new MCP image tool is toggled on. Paste your prompt along with the WebUI settings you saved earlier, and watch your local LLM trigger image generation right in the chat.
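
One way to avoid backslash mistakes entirely is to let a JSON library write the entry for you. The Python sketch below builds an mcp.json-style entry and prints it with the escaping already done. The paths, the server name "image-gen", and the environment variable names SD_WEBUI_URL and SD_OUTPUT_DIR are all placeholders I am assuming for illustration, so use whatever names your MCP server's README actually lists.

```python
import json

# Hypothetical paths and URL; replace them with your own.
INDEX_JS = r"C:\Users\you\image-gen-mcp\build\index.js"
OUTPUT_DIR = r"C:\Users\you\image-gen-mcp\outputs"
WEBUI_URL = "http://127.0.0.1:7860"


def build_mcp_config(index_js, webui_url, output_dir):
    """Return an mcp.json-style dict for one Node-based MCP server."""
    return {
        "mcpServers": {
            "image-gen": {  # hypothetical server name
                "command": "node",
                "args": [index_js],
                # Assumed variable names; check your server's README.
                "env": {"SD_WEBUI_URL": webui_url, "SD_OUTPUT_DIR": output_dir},
            }
        }
    }


# json.dumps escapes every backslash, so the printed paths use \\ as JSON requires.
config_text = json.dumps(build_mcp_config(INDEX_JS, WEBUI_URL, OUTPUT_DIR), indent=2)
print(config_text)
```

Paste the printed block into mcp.json. If you already have other servers listed there, merge only the "image-gen" entry instead of replacing the whole file.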

Anthropic announces Project Glasswing, a cybersecurity initiative that will use its Claude Mythos Preview model to help find and fix software vulnerabilities.

Google updates Chrome with vertical tabs, a feature that Mozilla Firefox and Microsoft Edge have long offered, and a new full-screen layout in reading mode.

OpenAI sends a letter to the California and Delaware AGs, urging them to investigate “anti-competitive behavior” by Elon Musk, ahead of a trial in April.

Google rolls out an AI Enhance button for Photos on Android globally, offering automated lighting and contrast adjustments, and video playback speed controls.

💎 Gemma 4: Google’s powerful small AI model

🎥 Veo 3.1 Lite: Google’s cheaper video generation AI

🧠 PikaStream 1.0: turns AI agents into talking, face-to-face video bots

💻 Holo 3: Open AI agent that can use computers like a human

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas