I ran out of Claude Code credits constantly.

I'd been using it heavily on a project, and running out of credits kept slowing me down. I had two options: wait it out every time, or find a real alternative that didn't mean starting over with a completely different tool.

That's when I set up Gemma 4, Google's most intelligent open model, to run locally.

It's built on the same research as Gemini 3. It can code, reason, and read images. It runs entirely on your local machine. And unlike most "open" models, it comes with an Apache 2.0 license, which means full commercial rights.

You can deploy it, fine-tune it, and sell products built on it. No royalties. No permission required. Beyond keeping the license and attribution notices, there are no usage restrictions.

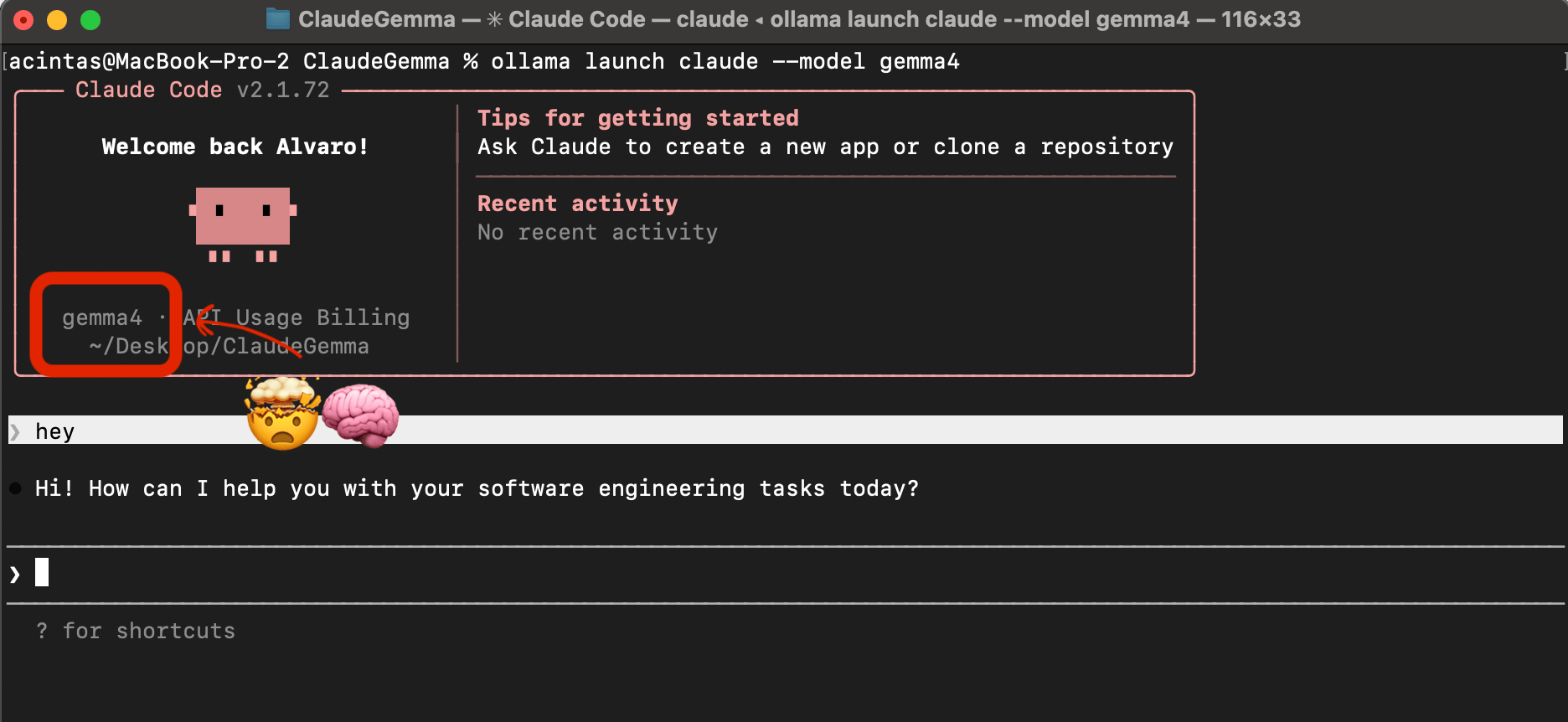

And you can run it inside Claude Code right now without paying a single dollar to an API provider.

This guide covers everything: what Gemma 4 is, how to get it running locally with Ollama, how to launch it inside Claude Code, and, honestly, where it's impressive and where it falls short.

What Gemma 4 actually is.

The model, in plain terms:

Gemma 4 is Google's most capable open-source model to date. It comes in four sizes:

| Model | Size | Context window | Best for |
|---|---|---|---|
| E2B | 7.2 GB | 128k | Lightweight tasks, limited hardware |
| E4B | 9.6 GB | 128k | Balanced performance |
| 26B | 18 GB | 256k | Complex reasoning, higher-end hardware |
| 31B Dense | 20 GB | 256k | Best performance: top open-source model |
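If you're unsure which size suits your machine, the table can be turned into a quick rule of thumb. This is a sketch under one loud assumption: that a variant is usable when its size from the table, plus a few GB of headroom (the hypothetical `headroom_gb` parameter), fits in your RAM. Real memory use also depends on quantization and context length, so treat the answer as a starting point.

```python
from typing import Optional

# Sizes (GB) copied from the table above.
VARIANTS = [
    ("E2B", 7.2),
    ("E4B", 9.6),
    ("26B", 18.0),
    ("31B Dense", 20.0),
]


def pick_variant(ram_gb: float, headroom_gb: float = 4.0) -> Optional[str]:
    """Return the largest variant whose size fits in RAM minus headroom.

    headroom_gb is an assumed allowance for the OS, other apps and the
    context cache; it is a heuristic, not an official requirement.
    """
    budget = ram_gb - headroom_gb
    fitting = [name for name, size_gb in VARIANTS if size_gb <= budget]
    return fitting[-1] if fitting else None


print(pick_variant(16))  # E4B
print(pick_variant(32))  # 31B Dense
print(pick_variant(8))   # None
```

With the default headroom, a 16 GB machine lands on E4B, which matches the choice made in this guide.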

The 31B Dense model ranks #3 among all open models on the Arena AI leaderboard, outperforming models with 20 times as many parameters.

We're using E4B in this guide. It's the default when you pull Gemma 4 through Ollama, runs on most machines with 16 GB RAM, and supports text and image input. E2B is there if your hardware is very constrained.
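Since E4B accepts images as well as text, here is a minimal sketch of sending a screenshot to it through Ollama's local chat API (`/api/chat` on the default port 11434), which takes images as base64-encoded strings. The model tag `gemma4` is an assumption; use whatever name `ollama list` shows after you pull the model.

```python
import base64
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL = "gemma4"  # assumed tag: replace with the name `ollama list` shows


def build_image_request(prompt: str, image_bytes: bytes, model: str = MODEL) -> dict:
    """Build a non-streaming /api/chat payload with one image attached."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt, "images": [encoded]}],
        "stream": False,
    }


def send(payload: dict, url: str = OLLAMA_CHAT_URL) -> str:
    """POST the payload to a running Ollama server and return the reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["message"]["content"]


# Usage (requires Ollama running and the model pulled; file name is hypothetical):
# with open("screenshot.png", "rb") as f:
#     print(send(build_image_request("What is on this screen?", f.read())))
```

Building the payload separately from sending it keeps the request easy to inspect before anything hits the server.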

The license, and why it matters:

Most AI models released for "free" have restrictions. You can use them for personal projects, but the moment you build something commercial, you're in murky territory.

Gemma 4 is Apache 2.0. Full commercial license. Build products, sell them, deploy them at scale. No strings attached.

That changes the calculation entirely.

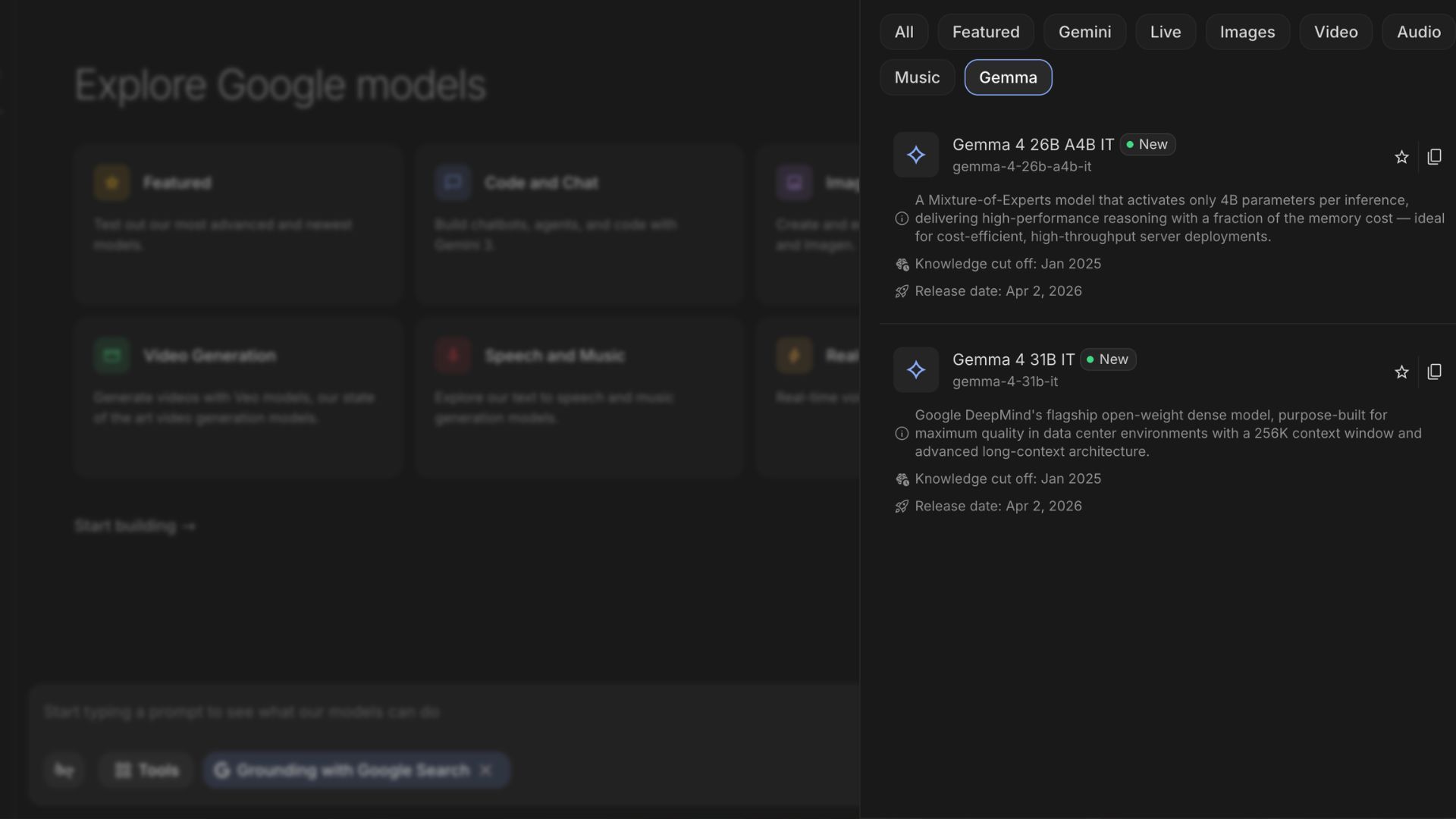

Test it online first (before downloading anything).

Before you install anything locally, you can test Gemma 4 right now in Google AI Studio, completely free, no setup required.

Go to aistudio.google.com.

Under models, search for "Gemma 4".

You'll see the 26B MoE and the 31B Dense versions available.

The 31B Dense is a thinking model: it reasons through a query before answering. It's worth seeing how that works before you download anything.

Test it with a simple question. Then try uploading a screenshot and asking it to interpret what's on screen. Then try asking it to write a basic HTML page.

If the outputs look useful to you, it's worth installing locally.

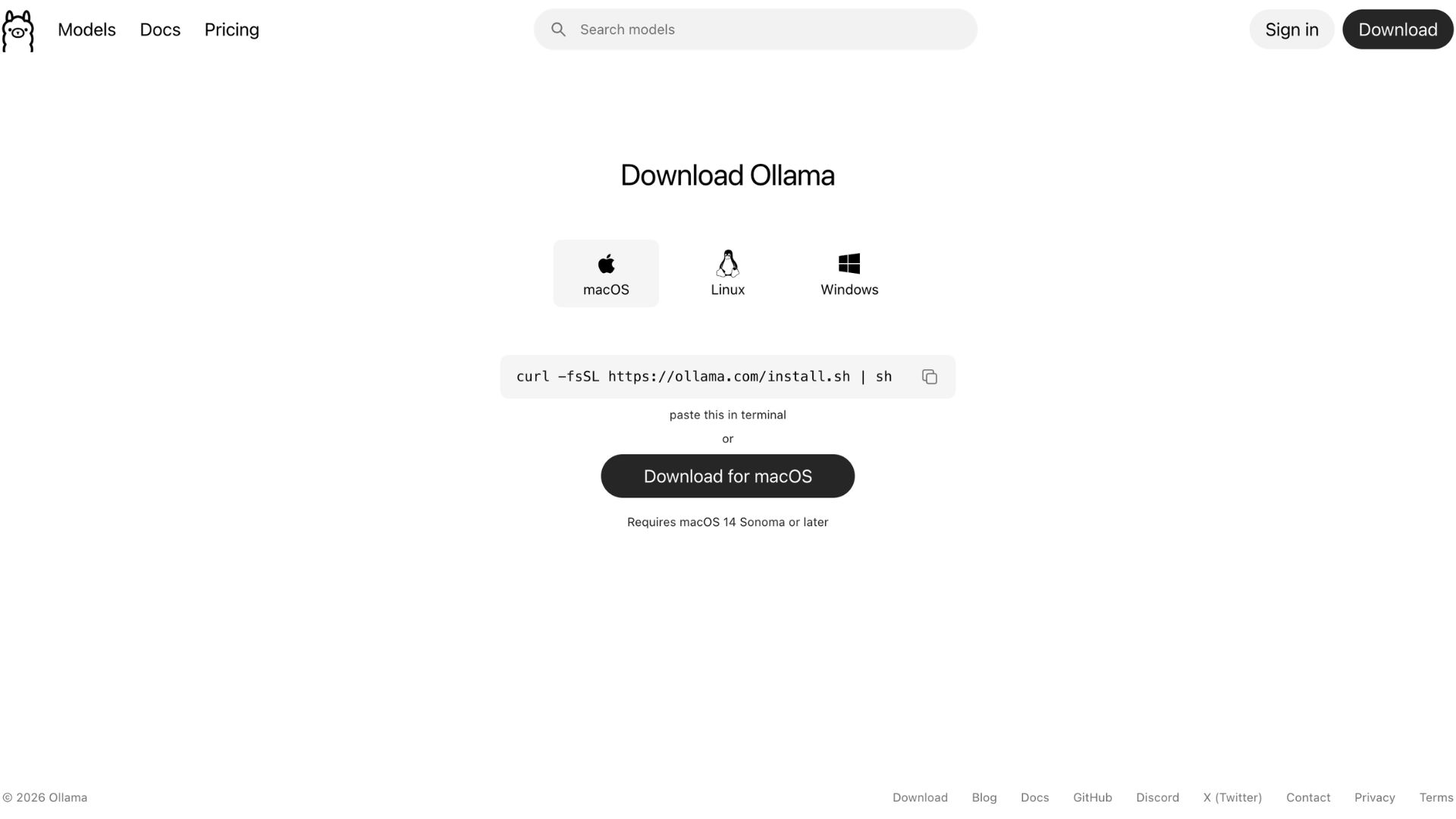

What you need: Ollama.

Ollama is a platform for managing and running large language models locally on your machine. Think of it as the container that lets you download, run, and switch between local models through a simple interface.
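Behind that simple interface, Ollama also runs a local HTTP API, which is what other tools talk to. A minimal sketch, assuming Ollama's default address `http://localhost:11434`, that lists the models you've downloaded through the `/api/tags` endpoint (the same information `ollama list` prints). The sample model names are illustrative only.

```python
import json
import urllib.request
from typing import List


def model_names(tags_response: dict) -> List[str]:
    """Extract model names from the JSON body returned by GET /api/tags."""
    return [m["name"] for m in tags_response.get("models", [])]


def installed_models(base_url: str = "http://localhost:11434") -> List[str]:
    """Ask a running Ollama server which models have been downloaded."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as response:
        return model_names(json.load(response))


# Offline example with a canned response (model names are illustrative):
sample = {"models": [{"name": "gemma4:latest"}, {"name": "llama3.2:latest"}]}
print(model_names(sample))  # ['gemma4:latest', 'llama3.2:latest']
```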

Install it:

Go to ollama.com. Download it for your system. Install it. Open the application.

Once it's running, you'll see it in your dock or system tray.
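To confirm the install from a script, you can check two things: that the `ollama` command is on your PATH, and that the local server answers. A small sketch, assuming the default port 11434; a running Ollama server replies at its root URL with the plain-text message "Ollama is running".

```python
import http.client
import shutil
import urllib.request


def ollama_cli_installed() -> bool:
    """True if an `ollama` executable can be found on PATH."""
    return shutil.which("ollama") is not None


def ollama_server_running(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """True if the server at base_url answers with Ollama's status message."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as response:
            body = response.read().decode("utf-8", errors="replace")
    except (OSError, http.client.HTTPException):
        # URLError, refused connections and timeouts are all OSError subclasses
        return False
    return "Ollama is running" in body


print("ollama on PATH:", ollama_cli_installed())
print("server answering:", ollama_server_running())
```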

One setting to modify:

Open Ollama's settings. Increase the context length to at least 32,000 tokens, or 64,000 if your hardware allows, for better results on longer tasks.

Note: Context length determines how much of your conversation a local LLM can remember and use when generating responses.
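When you call Ollama from your own code rather than the app, the context window can also be requested per call through the `num_ctx` option of the local API. The sketch below assumes the hypothetical `gemma4` tag and uses a crude 4-characters-per-token estimate (an assumption, not Gemma's real tokenizer) to show why a long conversation needs a bigger window.

```python
from typing import Dict, List

CHARS_PER_TOKEN = 4  # crude rule of thumb for English text; real tokenizers differ


def estimated_tokens(text: str) -> int:
    """Rough token count: characters divided by CHARS_PER_TOKEN, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def history_fits(messages: List[Dict[str, str]], num_ctx: int) -> bool:
    """Estimate whether the whole conversation fits in a num_ctx-token window."""
    return sum(estimated_tokens(m["content"]) for m in messages) <= num_ctx


def chat_payload(messages: List[Dict[str, str]], num_ctx: int = 32_768, model: str = "gemma4") -> dict:
    """/api/chat payload that asks Ollama for a num_ctx-token context on this call."""
    return {
        "model": model,  # assumed tag
        "messages": messages,
        "stream": False,
        "options": {"num_ctx": num_ctx},
    }


conversation = [{"role": "user", "content": "word " * 10_000}]  # ~12,500 estimated tokens
print(history_fits(conversation, 4_096))   # False
print(history_fits(conversation, 32_768))  # True
```

A larger window uses more memory, so on a 16 GB machine it's worth raising it step by step rather than jumping straight to the maximum.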

Downloading Gemma 4.
