- Simplifying AI

🗜️ Google unveils TurboQuant

PLUS: How to record and summarize any meeting using ChatGPT

Good Morning! Google just dropped a mind-bending AI memory compression algorithm that the internet is already dubbing the real-life "Pied Piper." Plus, I’ll show you how to record and summarize any meeting using ChatGPT.

Plus, in today’s AI newsletter:

Google Unveils "TurboQuant" AI Memory Compression

Mark Zuckerberg is Training an AI to be CEO

ARC-AGI 3 Drops and Frontier Models Bomb

How to Record and Summarize Any Meeting using ChatGPT

4 new AI tools worth trying

AI NEWS

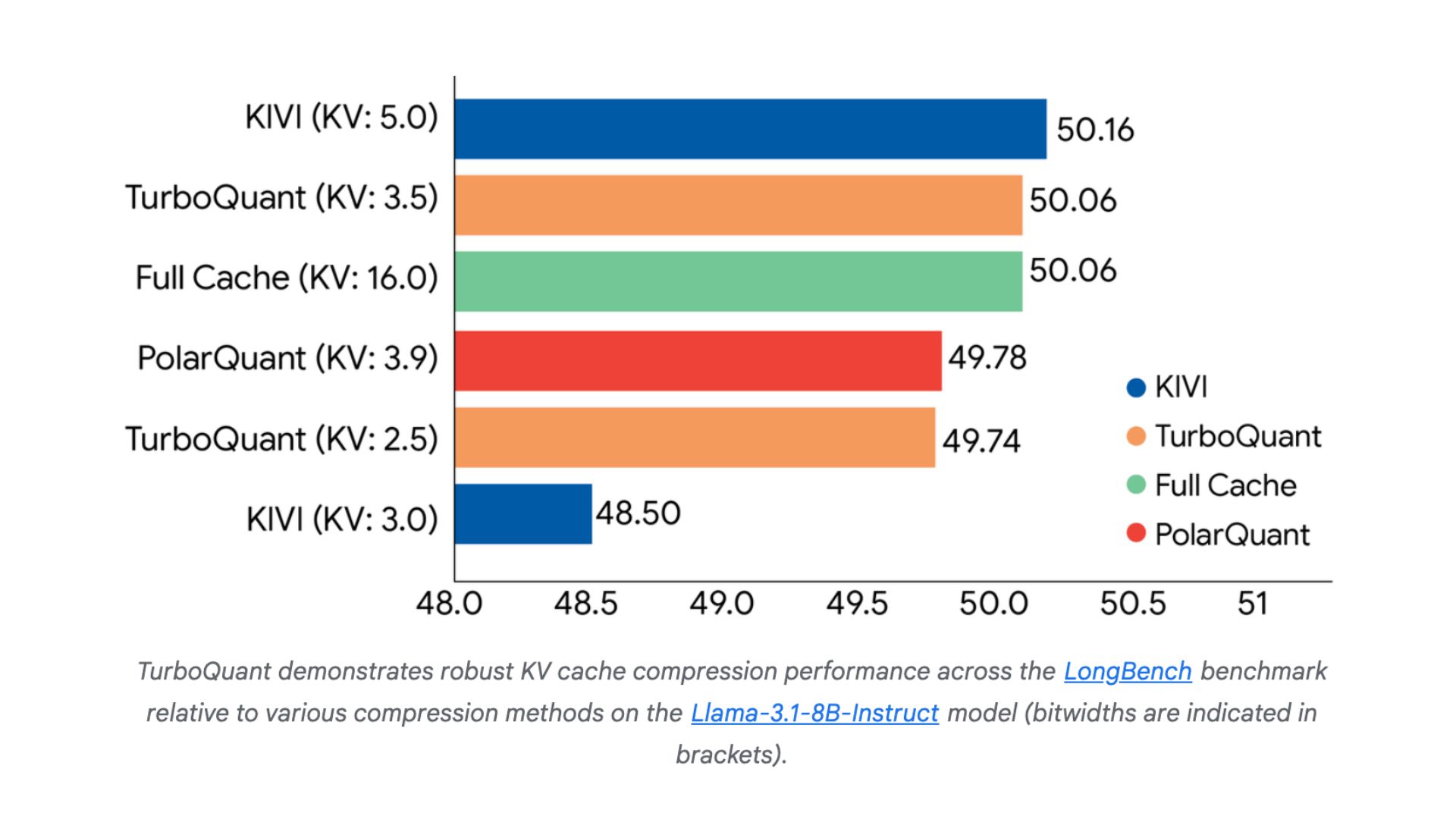

Google Research just unveiled TurboQuant, a radical new compression algorithm designed to shrink an AI's working memory without sacrificing an ounce of performance. The breakthrough is drawing massive comparisons to the fictional lossless compression tech from HBO's Silicon Valley, and for good reason.

TurboQuant targets the Key-Value (KV) cache, the massive memory bottleneck during AI inference, compressing it by at least 6x (down to just 3 bits per value) with zero accuracy loss.

In benchmarks on Nvidia H100 GPUs, it delivered up to an 8x speedup in computing attention compared to uncompressed 32-bit setups.

It achieves this in two stages: "PolarQuant" maps data vectors onto a predictable circular grid to eliminate overhead, and "QJL" acts as a 1-bit error-checker to keep the results accurate.

The tech is so efficient that memory chip stocks (like Micron and Western Digital) actually dropped on the news, as investors realized data centers might suddenly need way less RAM.
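To make "3 bits per value" concrete, here is a minimal sketch of plain uniform 3-bit quantization. This is not TurboQuant, PolarQuant, or QJL; it is the simplest possible stand-in, and the function names and sample numbers are made up for illustration. It only shows the basic idea that each number gets replaced by one of 8 codes.

```python
def quantize_3bit(values):
    """Map floats to integer codes 0..7 on a uniform grid over [min, max]."""
    lo, hi = min(values), max(values)
    steps = 2 ** 3 - 1  # 8 codes means 7 steps between the lowest and highest
    scale = (hi - lo) / steps if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Turn the 3-bit codes back into approximate floats."""
    return [lo + c * scale for c in codes]


# Made-up sample values standing in for one slice of a KV cache.
values = [-1.0, -0.4, 0.1, 0.3, 0.75, 1.0]

codes, lo, scale = quantize_3bit(values)
restored = dequantize(codes, lo, scale)

# Rounding to the nearest grid point keeps the error within half a step.
max_error = max(abs(a - b) for a, b in zip(values, restored))

print("codes:", codes)
print("max error:", max_error, "half step:", scale / 2)
```

A naive grid like this loses some precision on every value, which is why the claim of zero accuracy loss at 3 bits is the notable part of Google's result.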

Memory is the ultimate invisible tax on modern AI. As context windows get larger, the RAM required to store that context becomes cripplingly expensive. By successfully compressing the KV cache without lobotomizing the model's intelligence, Google isn't just making AI cheaper to run; it is fundamentally altering the hardware economics of the entire industry.
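For a rough sense of scale, here is a back-of-the-envelope sketch of how the KV cache grows with context length. The model shape and the 16-bit baseline are assumptions picked for illustration, not figures from Google; only the 6x factor comes from the numbers reported above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, context_tokens, bits_per_value):
    """Estimate KV cache size: keys and values for every layer, head, and token."""
    stored_values = 2 * layers * kv_heads * head_dim * context_tokens
    return stored_values * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration.
config = dict(layers=32, kv_heads=8, head_dim=128, context_tokens=128_000)

# Assumed baseline: the cache is stored at 16 bits per value.
baseline = kv_cache_bytes(**config, bits_per_value=16)

# Apply the "at least 6x" compression factor reported for TurboQuant.
compressed = baseline / 6

print(f"baseline cache:   {baseline / 1e9:.1f} GB")
print(f"after 6x squeeze: {compressed / 1e9:.1f} GB")
```

The cache size scales linearly with `context_tokens`, so doubling the context window doubles both numbers. That is the "invisible tax" in practice.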

AI NEWS

According to a new scoop from The Wall Street Journal, Meta chief executive Mark Zuckerberg is building a personal AI agent to help him run the company. It seems he believes in his own tech hype enough to let it shadow his role, a bold move from the guy who previously burned $80 billion trying to force the Metaverse into existence.

Zuckerberg's "CEO agent" is designed to retrieve information instantly, bypassing the layers of human bureaucracy he would normally have to navigate.

Meta is aggressively pushing an AI-native culture to flatten its 78,000-person organization, even tying employee performance reviews partially to AI usage.

Employees are building their own bots, like "My Claw" (which accesses work files and talks to colleagues on their behalf) and "Second Brain" (an AI chief of staff built on Anthropic's Claude).

Meta recently acquired "Moltbook," a viral social network exclusively for AI agents, and employees have already set up internal message boards where their personal AIs chat with each other.

If the CEO of one of the world's most powerful tech giants is actively trying to automate his own executive workflows, it signals a massive shift in how mega-corporations operate. We are moving past AI as just a coding assistant; Meta is trying to prove that autonomous, agentic AI can literally help manage a trillion-dollar company from the top down.

AI MODELS

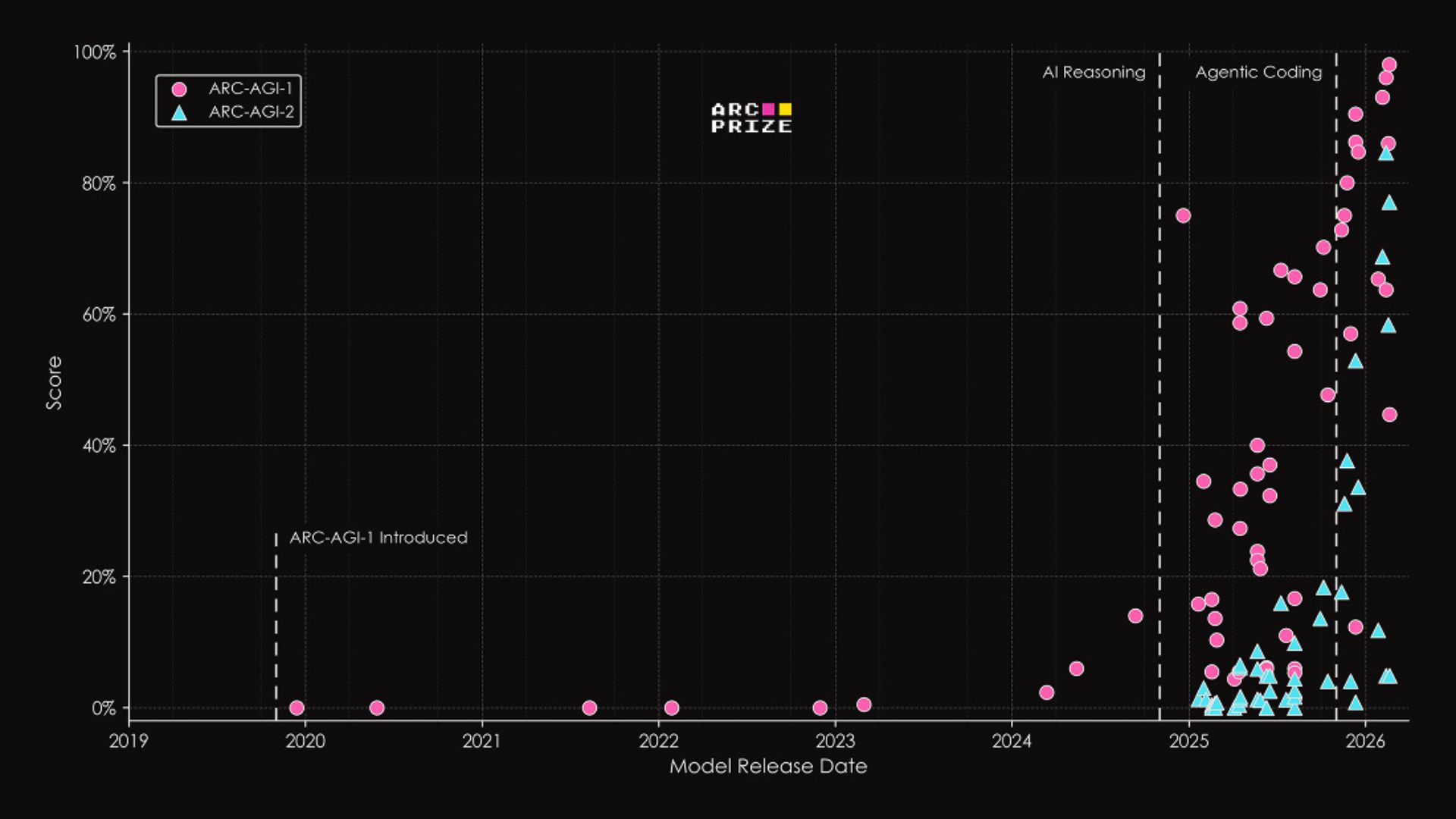

The third iteration of the notoriously difficult ARC-AGI benchmark is officially here. Designed to test true fluid intelligence and agentic reasoning rather than memorized knowledge, ARC-AGI 3 is proving to be a massive hurdle for the industry's top AI models.

All current frontier models are scoring well below the 1% mark on the new evaluation

Gemini 3.1 currently leads the pack with a dismal 0.37%

OpenAI's GPT-5.4 follows closely behind at 0.26%

Anthropic's Opus 4.6 sits at just 0.25%

It's easy to look at these sub-1% scores and question why benchmarks even matter if the goalposts just keep moving. But this cycle is exactly what forces the industry forward. By proving that models like GPT-5.4 and Gemini 3.1 still lack true, human-like fluid intelligence, ARC-AGI 3 ensures that labs don't get complacent. It exposes the gap between simple pattern matching and actual reasoning, forcing researchers to innovate entirely new architectures to solve it.

HOW TO AI

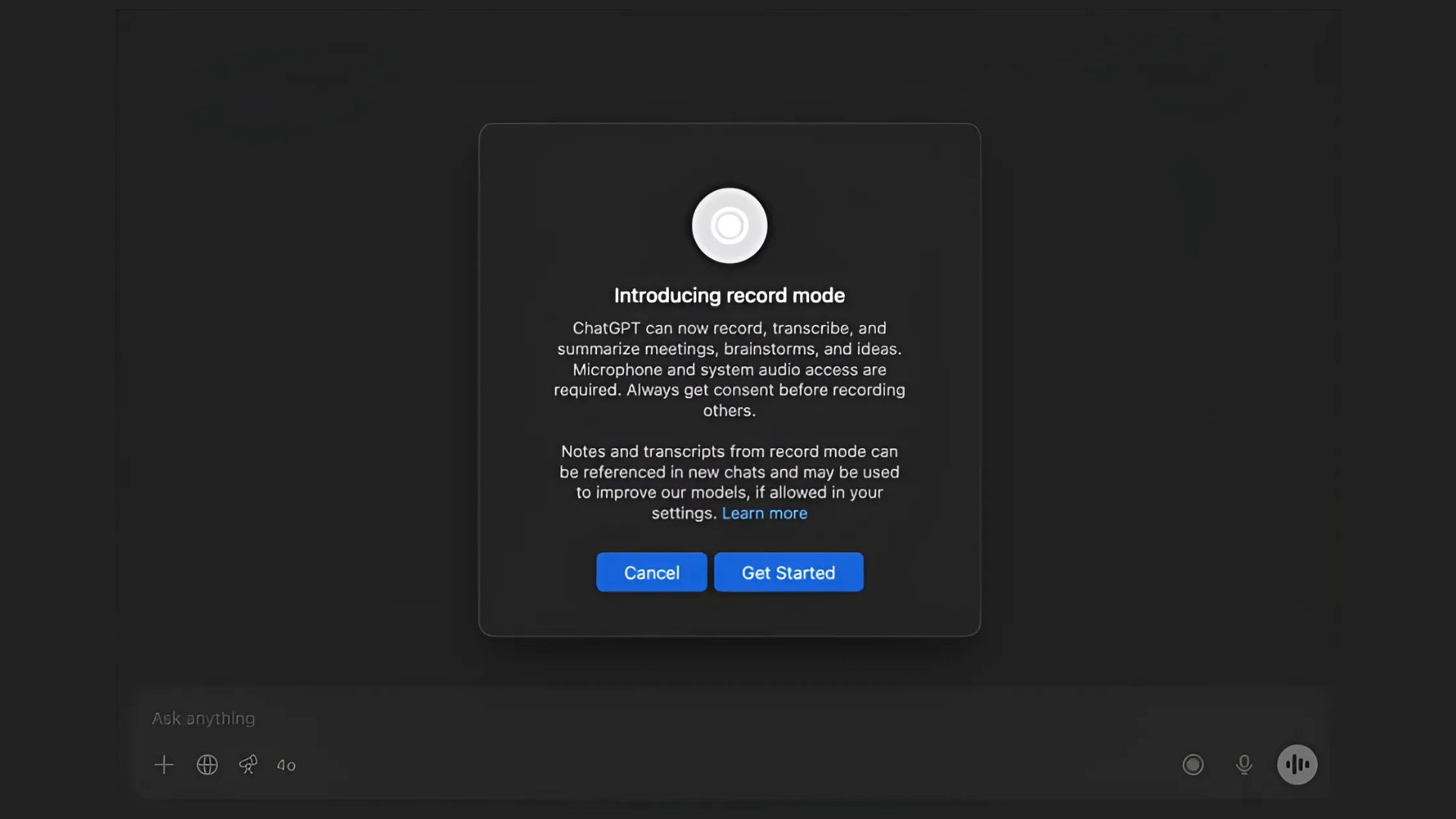

🗂️ How to Record and Summarize Any Meeting using ChatGPT

In this tutorial, you will learn how to instantly capture, transcribe, and summarize your meetings using the native ChatGPT desktop app for macOS, turning lengthy discussions into structured action items with just one click.

🧰 Who is This For

Mac users who attend lots of meetings

Remote workers and async teams

Founders jumping between calls all day

Product managers tracking discussions

STEP 1: Launch the App and Grant Permissions

Open the native ChatGPT desktop app on your macOS system. To begin, click the Record button located at the bottom of the chat screen. The first time you do this, your Mac will prompt you to grant the necessary permissions. Approve these so the app can seamlessly capture both your microphone and the system audio.

STEP 2: Capture the Conversation

Once permissions are set, ChatGPT will begin recording the audio, automatically distinguishing between each speaker on the call. You can let it run in the background while you focus entirely on your meeting or brainstorming session. Just keep an eye on the clock, as each continuous recording session is currently capped at a maximum of 120 minutes.

STEP 3: Stop and Process the Audio

When your meeting wraps up, simply click the Stop button on the recording interface. To finalize the capture and instruct the AI to process the audio, select Send. ChatGPT will immediately process the recording and upload the full text transcript directly to your chat history.

STEP 4: Review and Repurpose Your Canvas

ChatGPT will instantly open a private, interactive canvas displaying a structured summary of the call, complete with key points, extracted action items, and suggested follow-up questions. From here, you can use text or voice commands to dive into specific elements of the conversation, or ask the AI to instantly rewrite the notes into a client email, a step-by-step project plan, or even a code scaffold for your next build.

Google sets a 2029 deadline for its post-quantum cryptography migration, aiming to “secure the quantum era” as “frontiers may be closer than they appear”.

Meta on Wednesday laid off around 700 employees in the Reality Labs unit, as well as some in recruiting, sales, and Facebook.

Google launches Lyria 3 Pro music generation model, with better creative control and support for three-minute tracks, up from Lyria 3's 30 seconds.

Reddit says it will label automated accounts that provide a service to users and will require accounts suspected of being bots to verify they are human.

🦞 NemoClaw: Nvidia’s open-source security layer for AI agents

🤖 ASMR: Supermemory’s experimental agent memory system

🎨 Uni-1: Luma’s all-in-one model for text + images

🧑‍💻 Figma: Now lets AI agents design directly on the canvas

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas