Good Morning! Mark Zuckerberg just completely rebooted Meta's AI strategy, launching a brand new model designed to take on OpenAI and Anthropic head-to-head. Plus, I’ll show you how to generate infinite AI videos locally without scene breaks.

In today’s AI newsletter:

Meta Reboots its AI Strategy with "Muse Spark"

Anthropic Launches Claude Managed Agents

Gemini Adds "Notebooks" to Organize Your AI Projects

How to Generate Infinite AI Videos Locally Without Scene Breaks

4 new AI tools worth trying

AI MODELS

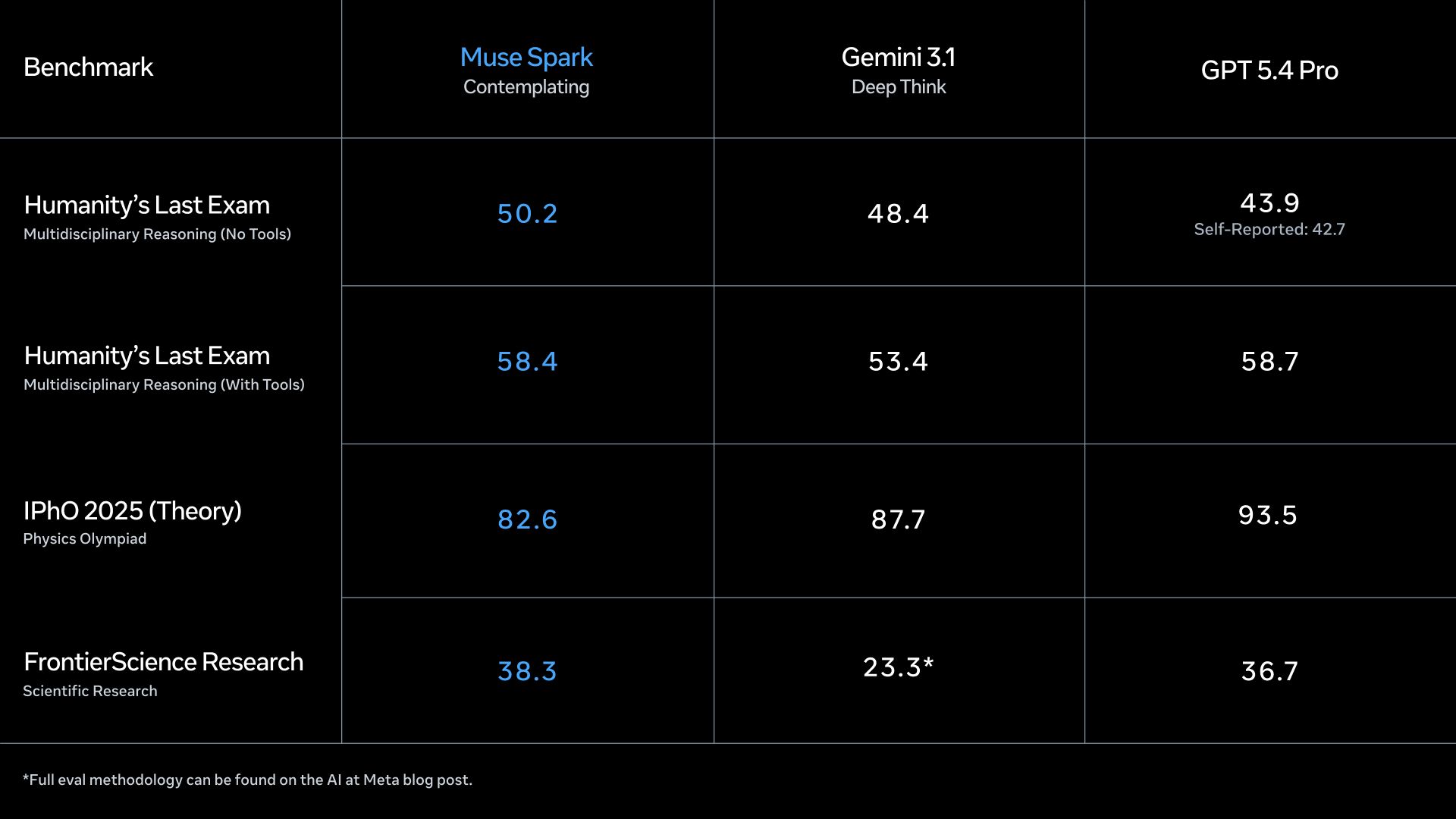

Following a massive internal overhaul, Meta just debuted Muse Spark. It's the first model to emerge from the newly formed "Meta Superintelligence Labs," led by former Scale AI CEO Alexandr Wang, marking a definitive pivot in Zuckerberg's quest to dominate the AI space.

The new lab was created after Zuckerberg reportedly grew frustrated with Llama lagging behind ChatGPT and Claude, prompting Meta to invest a staggering $14.3 billion for a 49% stake in Scale AI.

Muse Spark is available now on the web and the Meta AI app, and it specifically excels at visual STEM questions, coding mini-games, and troubleshooting physical hardware.

An upcoming "Contemplating" mode will use multiple parallel AI agents collaborating on the same problem to solve complex reasoning tasks quickly without massive latency spikes.

Because users must log in with a Facebook or Instagram account, privacy concerns are already swirling around how Meta might use personal social data to feed this new "personal superintelligence."

Meta is tired of playing catch-up. By poaching top industry talent, throwing billions at data labeling, and launching an entirely new model family, Zuckerberg is signaling a ruthless new phase in the AI wars. Tying Muse Spark directly to users' social media profiles also reveals Meta's ultimate advantage: leveraging its massive ecosystem to build a deeply personal, context-aware agent integrated into the daily digital lives of billions of people.

AI NEWS

Building autonomous AI agents just got significantly easier. Anthropic introduced Claude Managed Agents, an API-accessible cloud service that handles the heavy lifting of agent infrastructure and orchestration.

It automates the complex "scaffolding" required for production-grade agents, including isolated secure containers, infrastructure setup, and observability.

Developers simply describe the tasks, specify third-party tools, and define cybersecurity guardrails (like requiring human permission for certain actions).

The platform seamlessly handles complex state management, credential security, and tool orchestration, including an error recovery mechanism to resume tasks after an outage.

Currently in research preview: capabilities for an agent to spin up other sub-agents for complex tasks, and automatic self-evaluation prompt refinement.

Building an AI agent prototype is easy; deploying one securely in enterprise production is a nightmare of infrastructure, sandboxing, and state management. By abstracting away the containerization, security scaffolding, and tool orchestration, Anthropic is dramatically lowering the barrier to entry, shifting the developer's focus from how an agent runs to what it actually accomplishes.

AI TOOLS

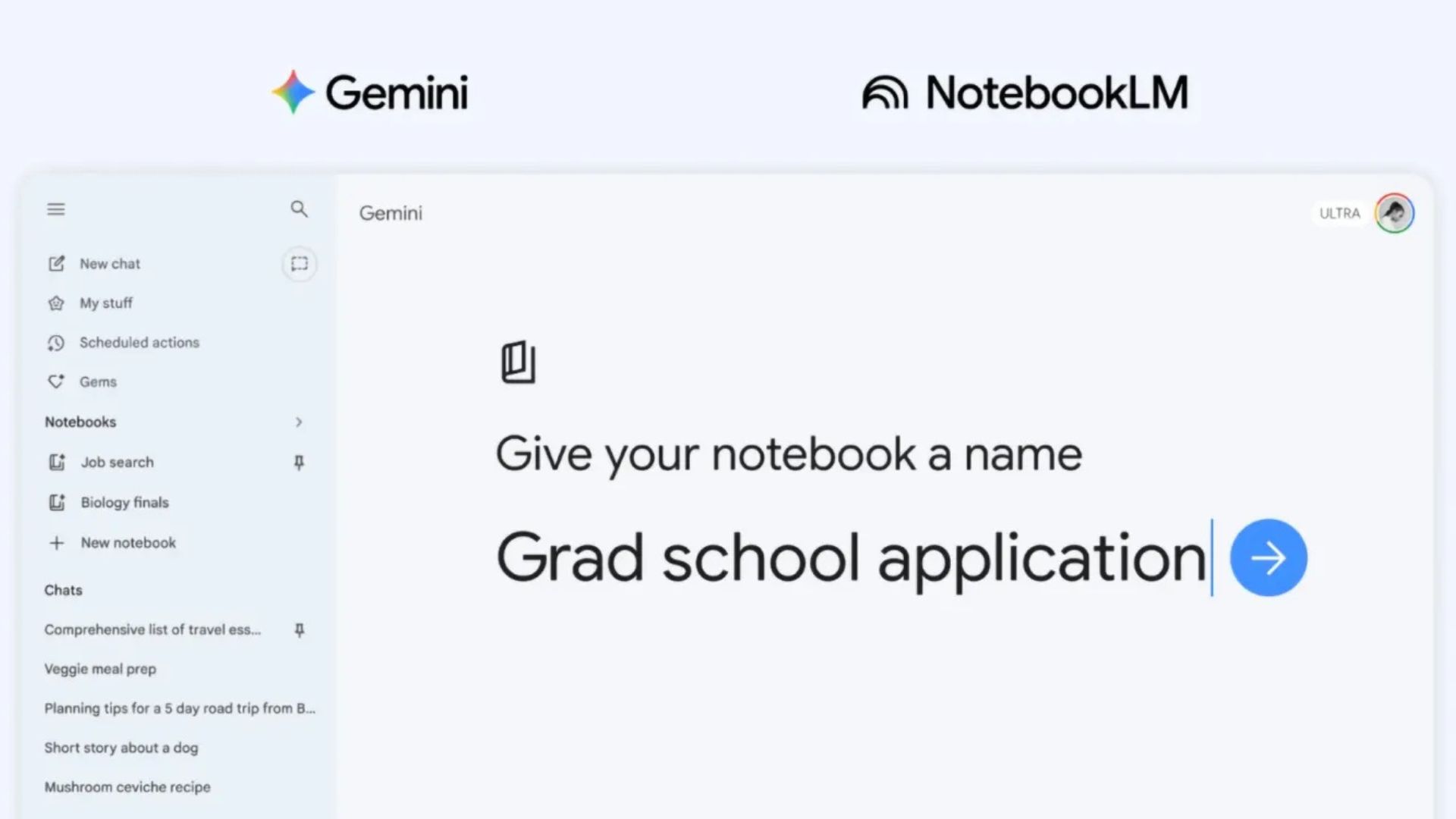

Google just announced a new "notebooks" feature for Gemini, allowing users to store files, past conversations, and custom instructions about specific topics in a single dedicated workspace.

It functions similarly to ChatGPT's "Projects" feature, acting as a personal knowledge base that gives the AI persistent context for your tasks.

The killer feature: Notebooks sync seamlessly with Google’s NotebookLM AI research tool, meaning sources added in one app instantly show up in the other.

It is currently rolling out on the web for subscribers on Google’s AI Ultra, Pro, and Plus plans.

Mobile support and access for free-tier users will launch in the coming weeks.

Managing context windows has historically been the most annoying part of using AI chatbots. By letting you drop files and instructions into a dedicated workspace that natively syncs with NotebookLM, Google is turning Gemini from a transient chat interface into a permanent, highly organized research and project management hub.

HOW TO AI

🗂️ How to Generate Infinite AI Videos Locally Without Scene Breaks

In this tutorial, you’ll learn how to use Stable Video Infinity 2 Pro, a model that can extend videos endlessly while keeping characters, lighting, and motion consistent. It runs entirely on your own computer, is free to use, and removes the usual 3–4 second clip limit that breaks most AI videos today.

🧰 Who is This For

Creators who want long, continuous AI-generated videos

Filmmakers experimenting with cinematic or anime-style scenes

Developers and tinkerers who like running AI locally with full control

Anyone tired of short, broken AI video clips

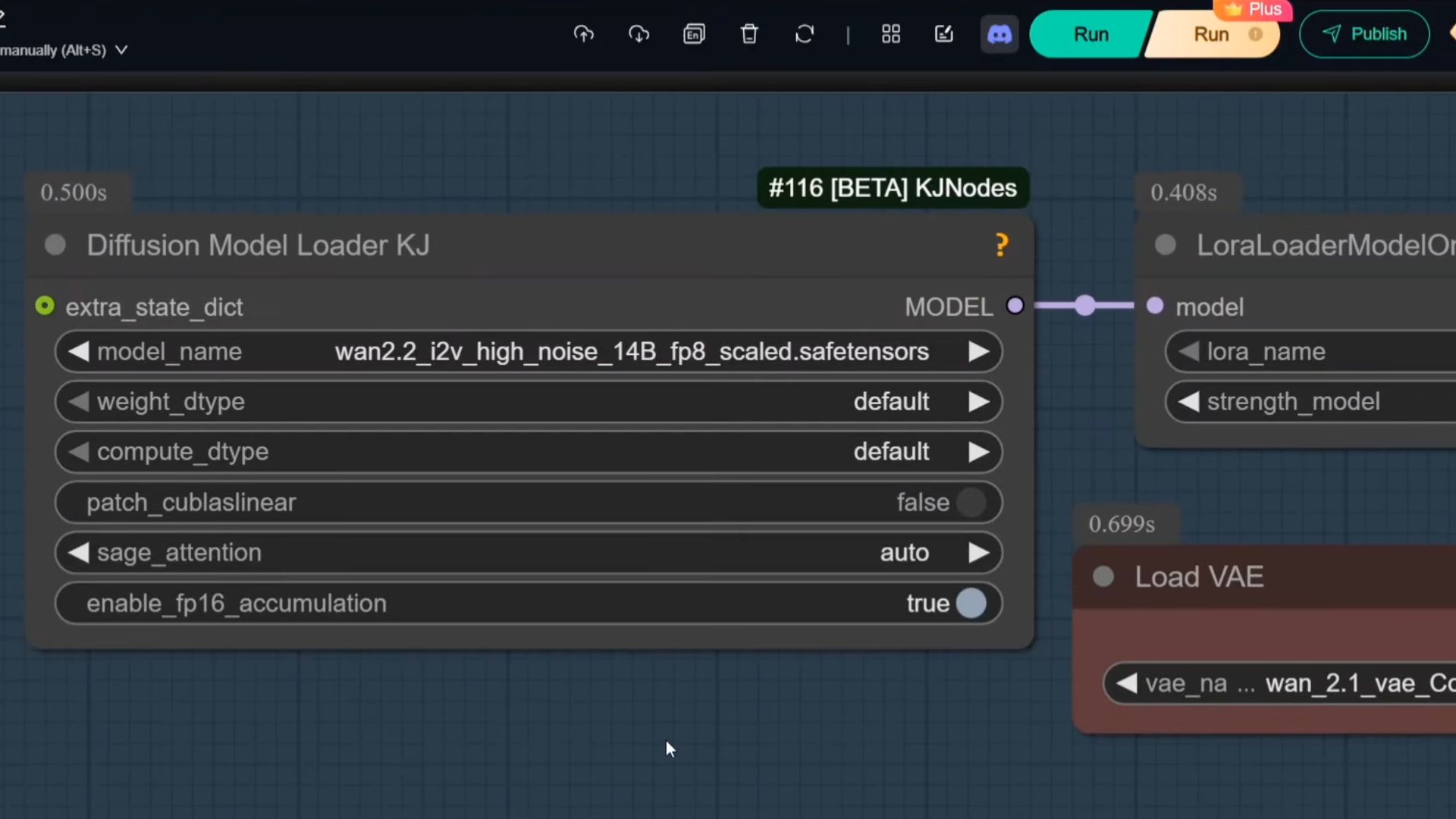

STEP 1: Download the Core Model Files

To get started, you first need the special model files that power infinite generation. These are not standard checkpoints but LoRA files designed specifically for long-term consistency.

Go to Hugging Face and search for Stable Video Infinity 2 Pro. On the model page, look for the FP16 LoRA files. You’ll usually see a High Rank and a Low Rank version. Either one works fine for most setups.

Once downloaded, move the file into this folder on your system:

ComfyUI → models → loras

After placing the file there, restart or refresh ComfyUI so it can detect the new model. These LoRA files contain the logic that keeps characters and scenes stable across time, which is what makes infinite video possible.
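If you would rather script the file move than drag it by hand, here is a minimal Python sketch. The function name, the ComfyUI install location, and the LoRA file name are all assumptions for illustration, so swap in your own paths.

```python
from pathlib import Path
import shutil


def install_lora(downloaded_file: Path, comfyui_root: Path) -> Path:
    """Move a downloaded LoRA file into ComfyUI's models/loras folder."""
    loras_dir = comfyui_root / "models" / "loras"
    # Create the folder if it does not exist yet.
    loras_dir.mkdir(parents=True, exist_ok=True)
    target = loras_dir / downloaded_file.name
    shutil.move(str(downloaded_file), str(target))
    return target
```

For example, `install_lora(Path.home() / "Downloads" / "my_lora.safetensors", Path.home() / "ComfyUI")` would place a (hypothetical) `my_lora.safetensors` where ComfyUI looks for LoRAs. You still need to restart or refresh ComfyUI afterwards.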

STEP 2: Configure the Workflow Settings

Next, open a Stable Video Infinity 2 Pro workflow in ComfyUI. These are usually available on the same GitHub or Hugging Face pages as the model.

Inside the workflow, adjust the key settings to match the Pro configuration. Select SVI 2 Pro (KI version) in the model loader node. Set the resolution to 480 × 832 for your first tests, as this is much faster and ideal for debugging.

Set total steps to 8, CFG to 1, and choose a sampler like Euler Simple for quick testing or UniPC Simple for smoother motion. Make sure the workflow uses a 4-step Lightning/LightX2V configuration for efficiency.

Starting small is important. Low resolution and fewer steps let you test ideas quickly before committing to heavier renders.
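To see why the low-resolution pass is so much lighter, it helps to compare pixel counts. The sketch below records the tutorial's test settings and the 1280 × 720 resolution suggested in Step 4. The class and field names are illustrative only and are not actual ComfyUI node inputs, and treating the first number as the width is an assumption.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RenderSettings:
    width: int
    height: int
    steps: int
    cfg: float
    sampler: str

    @property
    def pixels(self) -> int:
        # Pixels per frame, a rough proxy for how heavy each frame is to render.
        return self.width * self.height


# Test settings from this step (names are illustrative, not node inputs).
PREVIEW = RenderSettings(width=480, height=832, steps=8, cfg=1.0, sampler="Euler Simple")
# Same settings, with only the resolution raised as suggested in Step 4.
FINAL = replace(PREVIEW, width=1280, height=720)

ratio = FINAL.pixels / PREVIEW.pixels
print(f"Preview: {PREVIEW.pixels:,} pixels per frame")
print(f"Final:   {FINAL.pixels:,} pixels per frame ({ratio:.2f}x the preview)")
```

The final render has about 2.3 times as many pixels in every frame, so it is worth getting the prompts and motion right at the smaller size first. Actual render time depends on your hardware and will not necessarily scale in exact proportion.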

STEP 3: Control the Story With Multi-Prompting

This is where Stable Video Infinity really stands out. Instead of using one prompt for the entire video, you break the motion into segments.

Upload your starting image, which acts as the first frame of the video. Then locate the multiple prompt input boxes in the workflow. Prompt 1 describes the first few seconds, Prompt 2 describes what happens next, and Prompts 3 and 4 continue the sequence.

Make sure the Image Batch Extend with Overlap node is enabled. This overlap blends the end of one segment into the beginning of the next, preventing jump cuts and making the entire video feel like a single continuous shot.

This method lets you guide motion, expressions, and camera flow while maintaining consistency across the entire video.
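If you are curious what "overlap" means in practice, here is a toy Python sketch of a linear cross-fade between two segments. It is not the code of the Image Batch Extend with Overlap node, whose internals may differ. Each "frame" here is just a single number standing in for a full image, and the function name is hypothetical.

```python
def blend_segments(first, second, overlap):
    """Join two frame sequences, cross-fading the last `overlap` frames
    of `first` into the first `overlap` frames of `second`."""
    if overlap <= 0:
        # No overlap: a hard cut from one segment to the next.
        return list(first) + list(second)
    if overlap > min(len(first), len(second)):
        raise ValueError("overlap is longer than one of the segments")

    blended = []
    for i in range(overlap):
        # Weight of the second segment rises steadily across the overlap window.
        w = (i + 1) / (overlap + 1)
        a = first[len(first) - overlap + i]
        b = second[i]
        blended.append((1 - w) * a + w * b)

    return list(first[:len(first) - overlap]) + blended + list(second[overlap:])


# Two 4-frame segments, one "dark" (0.0) and one "bright" (1.0), sharing 3 frames.
video = blend_segments([0.0] * 4, [1.0] * 4, overlap=3)
print(video)       # [0.0, 0.25, 0.5, 0.75, 1.0]
print(len(video))  # 5 frames: 4 + 4 - 3
```

Notice that the joined clip is shorter than the two segments added together, because the shared frames are counted once. With several prompts, the total length is the sum of the segment lengths minus the overlap for each join.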

STEP 4: Generate, Review, and Scale Up

Once everything is set, click Queue Prompt to generate the video. Watch the output carefully and check whether faces, lighting, and framing remain consistent from start to finish.
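If you want to queue runs from a script instead of clicking the button, ComfyUI also accepts workflows over its local HTTP endpoint. The sketch below only builds the request. It assumes a default local server at `http://127.0.0.1:8188` and a workflow that you have exported from ComfyUI in API format; the function name and the file name in the usage note are hypothetical.

```python
import json
import urllib.request


def build_queue_request(
    workflow: dict, server: str = "http://127.0.0.1:8188"
) -> urllib.request.Request:
    """Build a POST request that asks a local ComfyUI server to queue a workflow."""
    # ComfyUI expects the API-format workflow under the "prompt" key.
    payload = json.dumps({"prompt": workflow}).encode("utf-8")
    return urllib.request.Request(
        f"{server}/prompt",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

To actually send it, load your exported workflow with `json.load` (for example from a file you saved as `svi_workflow_api.json`) and pass the result of `build_queue_request(workflow)` to `urllib.request.urlopen`. This only works while ComfyUI is running on the same machine.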

If motion feels slow or stiff, try switching the sampler from Euler Simple to UniPC Simple for smoother transitions. Once you’re happy with how the video looks, increase the resolution to 1280 × 720 and run it again for a cleaner, sharper result.

At this point, you can keep extending the video endlessly, adding more prompts and scaling quality as needed, all locally, with no limits and no usage caps.

The OpenAI Foundation says it is working to finalize over $100M in grants this month, across six institutions, to support and accelerate Alzheimer's research.

AWS debuts Amazon S3 Files, a new capability built on Amazon's Elastic File System that lets applications and AI agents access S3 buckets as local file systems.

xAI is reorganizing its engineering team as SpaceX SVP Michael Nicolls says xAI is “clearly behind”; Nicolls has reportedly taken the title of xAI president.

Alibaba and China Telecom launch a data center in southern China that is powered by 10,000 of Alibaba's Zhenwu chips designed for AI training and inferencing.

🔥 Muse Spark: Meta’s new AI for personal, context-aware agents

💎 Gemma 4: Google’s powerful small AI model

🚀 GLM-5.1: Open-source AI built for long coding tasks

🧠 PikaStream 1.0: turns AI agents into talking, face-to-face video bots

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas