Good morning! Nvidia just launched "Ising," a new family of open-source AI models designed to fix the one thing holding quantum computing back: qubits are incredibly fragile and prone to error. I'll also show you how to turn questions into interactive visualizations with Gemini.

In today’s AI newsletter:

Anthropic Launches "Routines" to Automate Claude Code

OpenAI Drops GPT-5.4-Cyber for Security Pros

Nvidia Launches ‘Ising’ to Stabilize Quantum Computing

How to Turn Questions into Interactive Visualizations with Gemini

4 new AI tools worth trying

QUANTUM COMPUTING

While giants like IBM and Google focus on building quantum hardware, Nvidia is positioning itself as the "control plane." The Ising models use AI to automate processor tuning and correct errors in real time, with the goal of turning experimental quantum machines into reliable tools.

The Ising suite tackles two of the biggest hurdles in quantum scaling: hardware calibration and real-time error correction.

Ising Calibration: An AI model that automates the tuning of quantum processors, slashing the setup process from several days down to just a few hours.

Ising Decoding: Neural network-based models that provide faster, more accurate error correction than any current open-source method.

Nvidia CEO Jensen Huang describes AI as the "control plane" that will transform fragile qubits into a scalable, usable computing platform.

For years, quantum computing has been stuck in the "experimental" phase because qubits are too sensitive to their environment. By open-sourcing the AI "recipes" to stabilize these systems, Nvidia is accelerating the timeline for practical quantum applications. They aren't building the quantum computer; they’re building the OS that makes the quantum computer actually work.

AI TOOLS

Claude Code is moving from interactive sessions to scheduled and event-driven automation. With the new Routines feature (currently in research preview), developers can package up prompts, repos, and connectors to handle the software development lifecycle on autopilot.

Scheduled Routines: Configure Claude to run on a cadence (hourly, nightly, or weekly). For example, it can pull the top bug from Linear at 2 AM, attempt a fix, and open a draft PR automatically.

API Routines: Every routine gets its own endpoint and auth token, allowing you to trigger Claude from alerting tools, deploy hooks, or any internal system via HTTP requests.

Webhook Routines: Currently starting with GitHub, Claude can automatically kick off a session in response to PR events, summarizing changes or running security checklists before a human even looks.

Infrastructure-Independent: Routines run on Claude Code's web infrastructure, meaning they don't depend on your local machine staying open or connected.
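To make the API Routines idea concrete, here is a minimal Python sketch of what triggering a routine over HTTP might look like. The endpoint URL, the token, the bearer-token header, and the payload fields are all hypothetical placeholders; this newsletter does not have the real request format, so check your routine's settings for the actual values. The sketch only builds the request and leaves the send step commented out.

```python
import json
import urllib.request

# Hypothetical values: the real endpoint and token come from your routine's settings.
ROUTINE_URL = "https://example.com/routines/my-routine/trigger"
ROUTINE_TOKEN = "replace-with-your-routine-token"


def build_trigger_request(url: str, token: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) an authenticated POST request for a routine."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",  # assumed bearer-token scheme
            "Content-Type": "application/json",
        },
    )


request = build_trigger_request(
    ROUTINE_URL,
    ROUTINE_TOKEN,
    {"reason": "deploy finished"},  # illustrative payload, not a documented schema
)

# To actually fire the routine, uncomment the next lines:
# with urllib.request.urlopen(request, timeout=30) as response:
#     print(response.status)
```

The same pattern would fit an alerting tool or a deploy hook: anything that can send an authenticated HTTP request can kick off the routine.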

This is a massive step toward "hands-off" software engineering. By allowing Claude to respond to events and run on a schedule without human intervention, Anthropic is turning its AI from a coding assistant into an autonomous team member. For simple agentic automations, developers no longer need to manage their own cron jobs or infrastructure.

AI TOOLS

OpenAI has announced a limited release of GPT-5.4-Cyber, a version of its flagship model fine-tuned specifically for the cybersecurity landscape. It is currently exclusive to verified experts within the "Trusted Access for Cyber" program and won't be hitting your ChatGPT sidebar anytime soon.

Unlike the standard GPT-5.4, the Cyber version has significantly lower guardrails, allowing it to perform risky security tasks that would normally trigger a refusal.

The model is designed to act as a "red team" tool, helping researchers identify zero-day gaps and potential jailbreaks in major software before they are exploited.

This release is a direct response to Anthropic’s "Project Glasswing," which claimed its next-gen model had already discovered vulnerabilities in every major OS and browser.

While Anthropic’s Glasswing is a brand-new architecture (Claude Mythos), OpenAI's version is a surgical fine-tune of its existing 5.4 model aimed at winning back enterprise and government defense contracts.

By releasing a model that is essentially "pre-jailbroken" for security professionals, OpenAI is trying to prove that its tech is the superior choice for national security and enterprise defense. The fact that they’ve abandoned creative projects like Sora to focus on "Cyber" shows exactly where the real money, and the real danger, is heading in 2026.

HOW TO AI

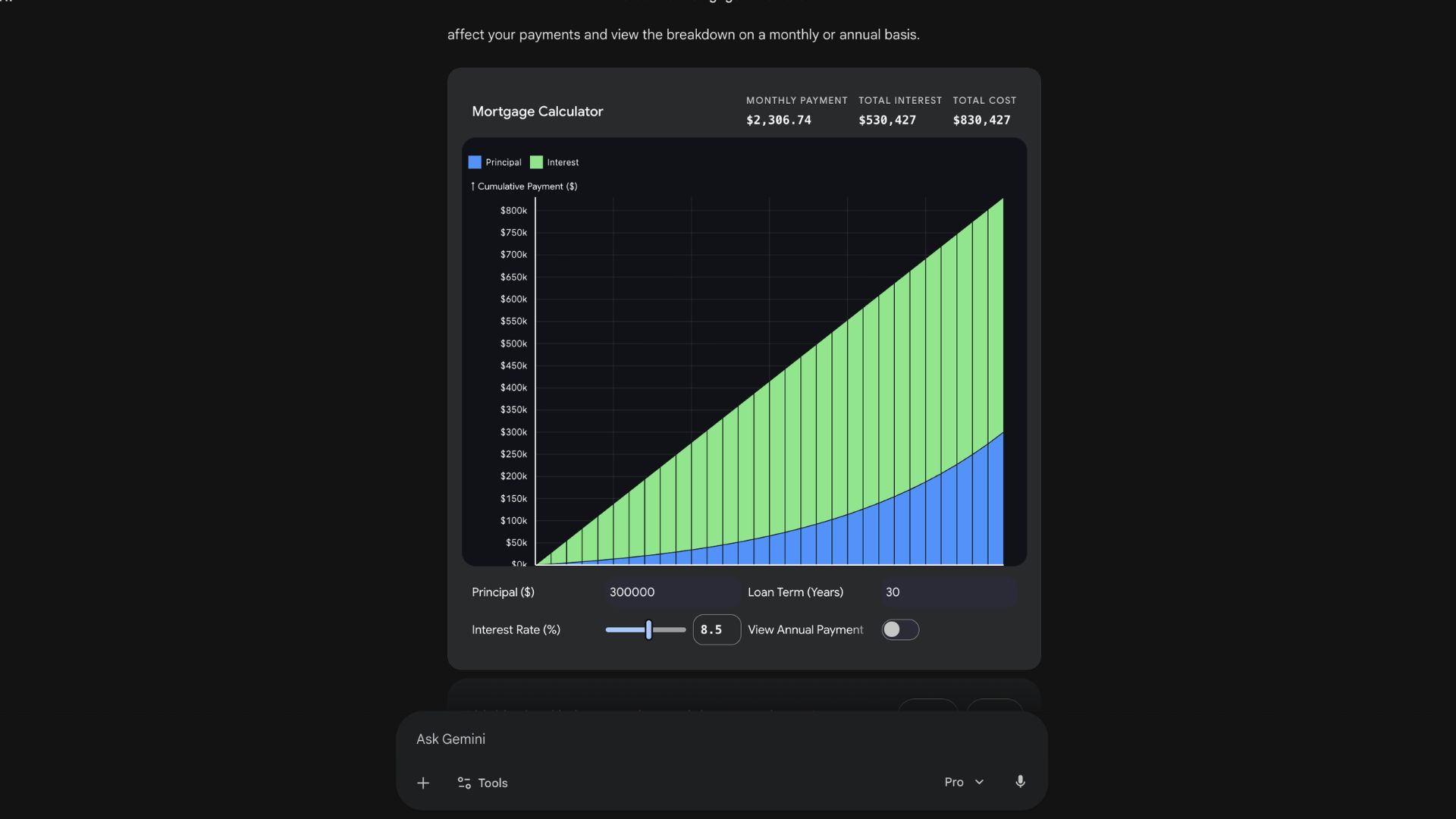

🗂️ How to Turn Questions into Interactive Visualizations with Gemini

In this tutorial, you will learn how to use Gemini’s "Dynamic View" and "Canvas" features to transform complex questions into interactive micro-apps, 3D simulations, and real-time dashboards directly in your browser.

🧰 Who is This For

People who struggle to understand complex data

Students learning through visuals instead of text

Analysts turning raw data into insights

Content creators making explainer visuals

STEP 1: Access Gemini and Choose Your Model

Head over to gemini.google.com and sign in. For the best visualization results, ensure you have selected Gemini 3.1 Pro (or Ultra) from the prompt bar. As of April 2026, these high-fidelity interactive features are primarily available on the web version of Gemini, as the mobile app is still rolling out full support for complex re-rendering.

STEP 2: Use Visual Triggers in Your Prompt

To activate the interactive engine, start your request with specific trigger phrases like "show me," "help me visualize," or "build an interactive simulation of."

Example: "Visualize a mortgage calculator with a slider for interest rates and a toggle for monthly versus annual views."

Gemini will then launch Gemini Canvas in a side panel, where it renders the code-based visualization (using WebGL for 3D or React/HTML for UI) in real time.
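For a sense of the logic behind the mortgage calculator in that example prompt, here is a small Python sketch of the standard fixed-rate amortization formula. This is not the code Gemini generates (Canvas renders its output with web technologies); the function names and the sample loan figures are made up for illustration.

```python
def monthly_payment(principal: float, annual_rate_pct: float, years: int) -> float:
    """Fixed monthly payment from the standard amortization formula."""
    n = years * 12                  # total number of monthly payments
    r = annual_rate_pct / 100 / 12  # monthly interest rate as a fraction
    if r == 0:
        return principal / n        # zero-interest loan: spread evenly
    return principal * r / (1 - (1 + r) ** -n)


def payment_view(principal: float, annual_rate_pct: float, years: int,
                 annual: bool = False) -> float:
    """Mimic the monthly/annual toggle: the annual view is 12 monthly payments."""
    monthly = monthly_payment(principal, annual_rate_pct, years)
    return monthly * 12 if annual else monthly


# The "slider": sweep the interest rate and print the resulting payment.
for rate in (4.0, 5.0, 6.0, 7.0):
    print(f"{rate:.1f}% -> {payment_view(300_000, rate, 30):,.2f} per month")
```

Knowing the formula makes it easier to sanity-check whatever the interactive version shows when you drag the slider.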

STEP 3: Interact and Refine the Visualization

Once the visualization appears, you can manually adjust sliders, toggles, and input fields to see how the data or simulation updates instantly. If the visual isn't quite right, use the chat to give specific feedback like, "Change the theme to dark mode with neon accents" or "Add a button to export this data to a CSV." Gemini will update the underlying code and re-render the app in seconds.

STEP 4: Export and Integrate Your Work

After you’ve polished your interactive tool, you can use the built-in export options. You can share your visualization as a standalone web link, embed it directly into a Google Doc or Slide, or even ask Gemini to output the full HTML/JavaScript file so you can host it on your own server. This lets you go from a simple question to a functional, professional asset in minutes.

Google releases a Windows desktop app with a macOS Spotlight-like search box for the web, Google Drive, and local files, a screen sharing feature, and more.

Amazon agrees to acquire satellite operator Globalstar, set to close in 2027, to expand Leo; Amazon and Apple say Leo will power some iPhone and Watch services.

Anthropic redesigns Claude Code on desktop, adding a sidebar for managing multiple sessions, a drag-and-drop layout, an integrated terminal, and a file editor.

Google launches Skills, repeatable AI prompts that Chrome users can run with a keyboard shortcut; users can set up their own Skills or choose from 50+ presets.

💳 Lovable Payments: Add payments to your app with just one chat

💎 Gemma 4: Google’s powerful small AI model

⚙️ HeyGen CLI: Create videos straight from your terminal with AI

💻 Holo 3: Open AI agent that can use computers like a human

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas