Good morning! Perplexity just launched an AI agent that might outshine Claude Code and OpenClaw by acting like a real digital worker that uses the same interfaces you do. You’ll also learn how to connect NotebookLM to Gemini to unlock its full potential.

Plus, in today’s AI newsletter:

Perplexity Launches a “Computer” AI Agent

New AI That Uses Computers Like a Human

Mercury 2 Brings Editor-Style AI

How to Connect NotebookLM to Gemini to Unlock Its Full Potential

4 new AI tools worth trying

AI TOOLS

Perplexity unveiled Perplexity Computer, a general-purpose AI agent that can create and execute full workflows across tools, files, the web, and code, running for hours or even months with minimal input.

Works like a digital employee: operates browsers, tools, files, coding environments, and research workflows

Orchestrates 19 AI models (including models from OpenAI, Google, and others) by assigning tasks to the best model for each job

Lets users specify an outcome, then auto-splits work into agents and sub-agents that run in parallel

Launches web-only for Max users ($200/month) with per-token billing and bonus launch tokens
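To make the “specify an outcome, get parallel sub-agents” idea concrete, here is a toy Python sketch of the general fan-out pattern: a router maps each sub-task to a model, and the sub-agents run concurrently. This is not Perplexity’s implementation. The routing table, the model names, and the `run_subagent` stub are hypothetical placeholders, and `asyncio.sleep` stands in for real model calls.

```python
import asyncio

# Hypothetical routing table: sub-task type -> model label.
# Perplexity Computer reportedly picks among 19 models; these names are placeholders.
ROUTES = {
    "research": "model-a",
    "code": "model-b",
    "write": "model-c",
}


async def run_subagent(task_type: str, goal: str) -> str:
    """Stand-in for a sub-agent; a real one would call the routed model."""
    model = ROUTES.get(task_type, "model-default")  # fall back for unknown task types
    await asyncio.sleep(0.01)  # simulate I/O-bound model latency
    return f"[{model}] {task_type}: {goal}"


async def run_outcome(goal: str, plan: list[str]) -> list[str]:
    # Fan out: every sub-task in the plan runs concurrently;
    # gather returns results in the same order as the plan.
    return await asyncio.gather(*(run_subagent(t, goal) for t in plan))


if __name__ == "__main__":
    results = asyncio.run(run_outcome("competitor report", ["research", "code", "write"]))
    for line in results:
        print(line)
```

Because the sub-tasks are independent, total wall-clock time is roughly that of the slowest sub-agent rather than the sum of all of them, which is the appeal of splitting work this way.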

This is a shift from “chatting with AI” to managing AI workers. If Perplexity Computer proves reliable at long-running, multi-step tasks, it could set a new bar for personal AI agents and make tools like Claude Code and OpenClaw feel like early prototypes rather than the endgame.

AI MODELS

FDM-1 is a new “computer action” model trained on massive amounts of screen recordings, allowing it to understand and replicate real on-screen actions across software and even physical systems.

Trained on 11 million hours of screen footage, far beyond existing datasets

Uses a breakthrough video compression system (up to 100× more efficient) to understand hours, not seconds, of activity

Can follow nearly 2 hours of continuous screen context in one go, ~50× more visual context than current models

Demos include CAD modeling in Blender, bug hunting, and driving a real car with under 1 hour of fine-tuning

This pushes AI beyond text and clicks into full computer operation. If models can truly learn by watching screens, “AI employees” that can use any software without needing APIs just got a lot closer.

AI RESEARCH

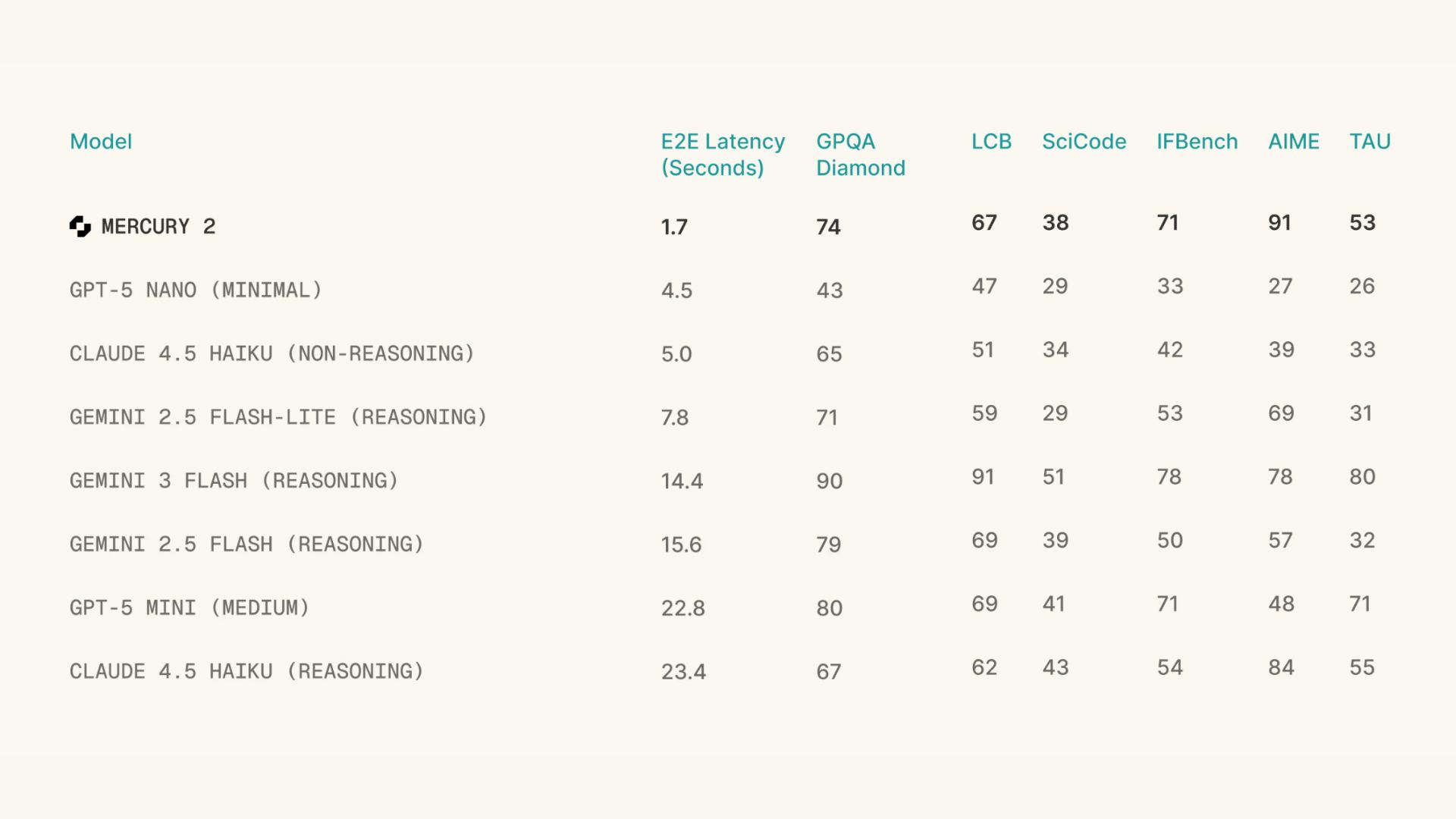

Inception Labs launched Mercury 2, a diffusion-based language model that drafts the whole answer at once and then refines it in parallel, working more like an editor than a typewriter.

Uses a diffusion LLM approach (similar to image models like Midjourney, but for text + reasoning)

Hits 1,196 tokens/sec, ~3× faster than models like GPT-5 Mini or Claude Haiku (per Artificial Analysis)

Cheap + practical: $0.25 per 1M input tokens, $0.75 per 1M output tokens, 128K context, tool use, and an OpenAI-compatible API

Built for agent loops and production speed, not frontier leaderboard battles
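What do those prices mean for an agent loop? A quick back-of-the-envelope calculator in Python, using only the per-million-token rates listed above. The workload (20 calls, 8,000 input tokens and 500 output tokens per call) is a hypothetical example, not a measured one.

```python
# Mercury 2 list prices quoted above, in USD per 1M tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 0.75


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at per-million-token rates."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Hypothetical agent loop: 20 calls, each sending 8,000 tokens of context
# and generating 500 tokens.
calls, tokens_in, tokens_out = 20, 8_000, 500
total = calls * request_cost(tokens_in, tokens_out)
print(f"${total:.4f}")  # → $0.0475
```

In agent loops the resent context usually dominates, so the input price matters more than it first appears.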

Speed compounds. In multi-step agent workflows, a 10× faster model unlocks entirely new experiences: real-time voice agents, background automations, and AI that keeps up with human thinking. Even Andrej Karpathy is calling this the next layer of AI. Expect big labs (yes, including Google) to follow fast.
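Here is why speed compounds, as a small Python sketch: in a sequential agent loop each step waits for the previous one, so per-step generation time adds up. It uses the 1,196 tokens/sec figure quoted above and a hypothetical model that is ~3× slower; the step count and tokens per step are made-up illustration values, and network and tool latency are ignored.

```python
# Throughput quoted above for Mercury 2, plus a hypothetical ~3x slower model.
FAST_TPS = 1_196
SLOW_TPS = FAST_TPS / 3


def loop_seconds(steps: int, tokens_per_step: int, tokens_per_sec: float) -> float:
    """Generation time for a sequential agent loop (ignores network and tool latency)."""
    return steps * tokens_per_step / tokens_per_sec


# Hypothetical workload: 30 sequential steps, 400 output tokens each.
steps, tokens = 30, 400
fast = loop_seconds(steps, tokens, FAST_TPS)
slow = loop_seconds(steps, tokens, SLOW_TPS)
print(f"fast: {fast:.1f}s, slow: {slow:.1f}s, saved: {slow - fast:.1f}s")
# → fast: 10.0s, slow: 30.1s, saved: 20.1s
```

The ratio stays 3×, but the absolute wait grows with every extra step, which is the difference between an agent that feels interactive and one you leave running in the background.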

HOW TO AI

🗂️ How to Connect NotebookLM to Gemini to Unlock Its Full Potential

In this tutorial, you will learn how to integrate NotebookLM directly into Gemini. This powerful workflow allows you to cross-reference multiple notebooks at once, turning scattered research into a unified, interactive knowledge base without losing your original organization.

🧰 Who Is This For

Researchers managing massive amounts of technical documentation across different projects.

Content Creators who need to cross-reference past scripts, notes, and ideas.

Professionals who want to query their organized notes while utilizing the power of a larger LLM.

Anyone looking to reuse older notes and connect scattered information naturally.

STEP 1: Access the Integration

Start by opening your main Gemini workspace. You can do this either by going to the Gemini website in your browser or by opening the Gemini mobile app on your phone.

At the bottom of the screen, you’ll see the message box where you normally type prompts. Inside this box, look for the “+” icon. This button is used to attach tools, files, and integrations to your conversation. Click it to continue.

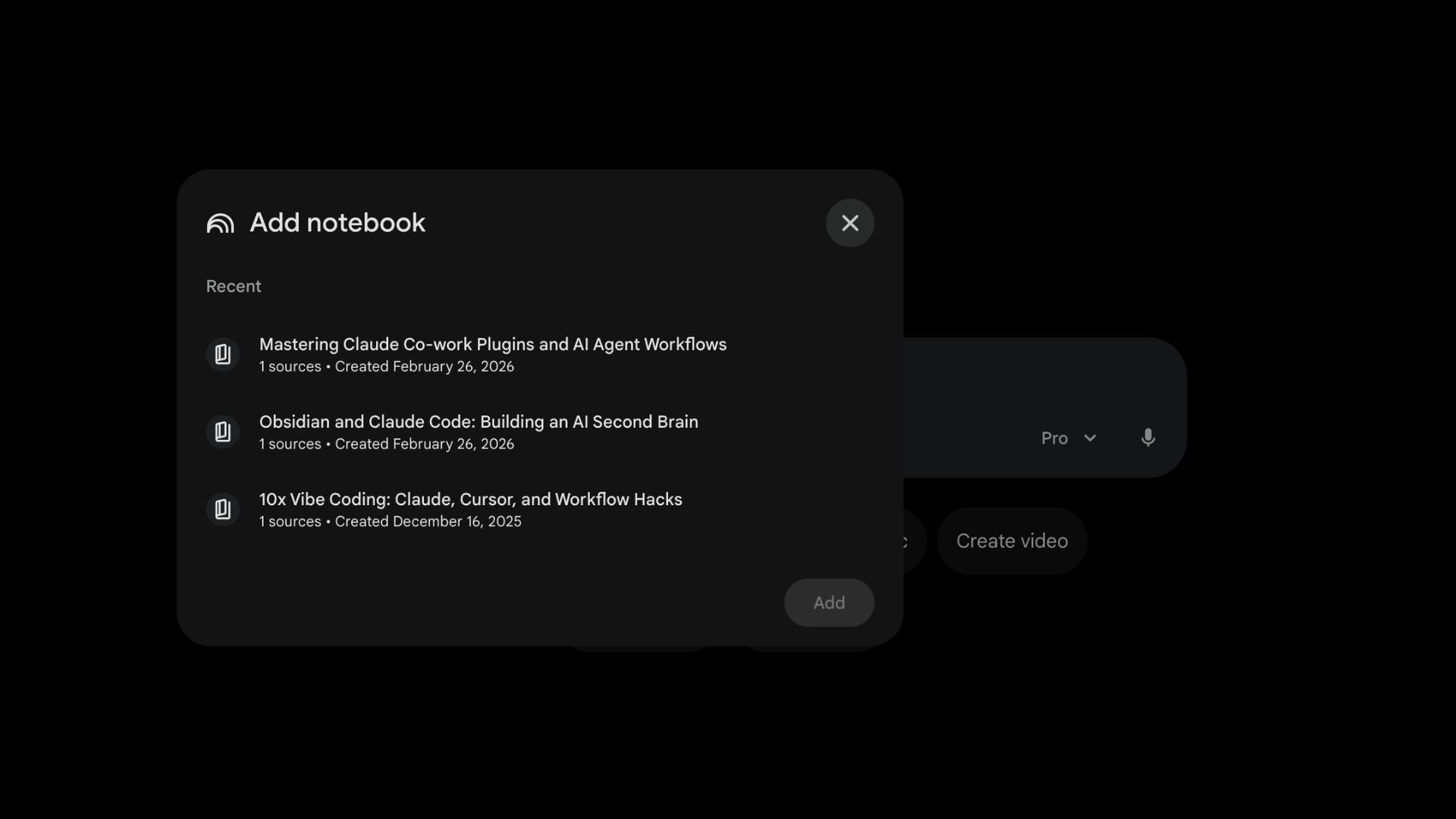

STEP 2: Select NotebookLM as Your Knowledge Source

After clicking the “+” icon, a menu will appear showing all available integrations Gemini can work with. From this list, select NotebookLM.

Once you select it, Gemini will prompt you to connect your notebooks. This feature is available to Google Workspace users, Workspace Individual subscribers, and personal Google account users, so no special setup or upgrade is required.

STEP 3: Connect Multiple Notebooks and Cross-Reference

You’ll now see a list of all your existing NotebookLM notebooks. This is where the real power comes in.

Choose one or multiple notebooks you want Gemini to reference and add them to the conversation. From this point on, Gemini can answer questions using context from all selected notebooks at the same time.

You can now ask Gemini to compare ideas across notebooks, summarize research from different time periods, or synthesize insights from multiple sources into one coherent explanation. Older notes suddenly become useful again, and disconnected ideas start linking naturally without manual effort.

STEP 4: Export and Apply the Results

Once Gemini generates answers or research summaries using your connected notebooks, you don’t need to copy-paste anything manually.

At the bottom of Gemini’s response, look for the export options. With one click, you can export the entire conversation into a Google Doc. This makes it easy to turn your research into reports, presentations, pitches, or study notes instantly.

This workflow keeps your notebooks clean and focused while Gemini handles the heavy thinking, cross-referencing, and synthesis for you.

Google launches task automation for Gemini on Pixel 10 and Samsung Galaxy S26, enabling Gemini to autonomously perform tasks using apps like Uber and DoorDash.

Alphabet's Intrinsic, which builds AI models and software for industrial robots, joins Google; it will remain a distinct entity and work with Google DeepMind.

A Cloudflare engineer rebuilt Next.js from scratch in one week using AI, reimplementing 94% of its API and spending $1,100 on Claude tokens.

Anthropic acquires Vercept, maker of the Vy desktop agent that lets users control a Mac or PC with natural language, to “advance Claude's computer use capabilities”.

4 NEW AI TOOLS WORTH TRYING

💼 Claude Cowork: Anthropic’s team AI platform with plug-ins

📸 Pomelli: Turns product photos into ready-to-use marketing content

🎥 Replit Animation: Turns text prompts into animated videos

⚙️ Rork Max: AI tool to build native iOS apps

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying it. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas