Good Morning! Zhipu AI just dropped GLM-5V-Turbo, a native multimodal vision model that aims to solve the trade-off between seeing an interface and writing the rigorous code needed to build it. I’ll also show you how to bulk generate all your video assets in minutes using AI.

In today’s AI newsletter:

Z.ai Launches GLM-5V-Turbo for Agentic Coding Workflows

Holo3 Sets New Records for Computer Usage Automation

OpenAI Closes $122B Round, Hits $852B Valuation

How to Automate Bulk Video Asset Generation in Minutes

4 new AI tools worth trying

AI MODELS

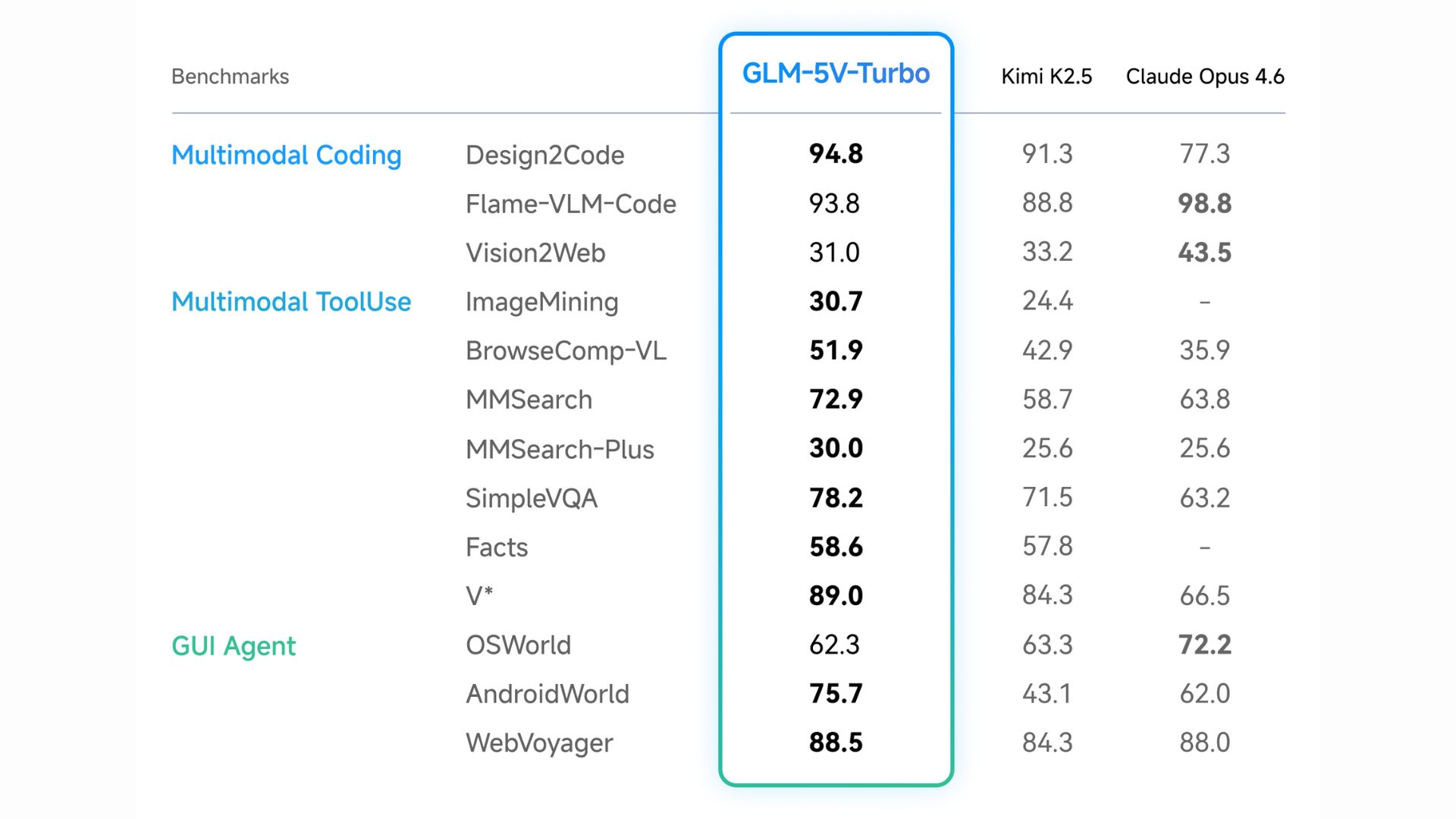

In the world of vision-language models, getting an AI to accurately "see" a user interface and simultaneously output complex software engineering code has been a massive challenge. Zhipu AI (Z.ai) aims to fix that with GLM-5V-Turbo, a native multimodal coding model built specifically to power high-capacity agentic workflows.

Native Multimodal Fusion: Unlike older systems that separate vision and language, this model natively processes images, video, design drafts, and complex document layouts as primary data without needing text translations.

Agentic Optimization: GLM-5V-Turbo is tuned for workflows built on frameworks like OpenClaw and Claude Code, covering the full "perceive → plan → execute" loop for autonomous environment interaction.

30+ Task Joint Reinforcement Learning: The model was trained simultaneously on 30+ domains to prevent the "see-saw" effect, ensuring visual recognition improvements don't degrade strict STEM reasoning and programming logic.

High-Throughput Architecture: Built on an inference-friendly Multi-Token Prediction (MTP) architecture, it supports a massive 200K context window and up to 128K output tokens for repository-scale tasks.

Most vision-language models bolt vision and language together as an afterthought. GLM-5V-Turbo flips that, natively fusing both from the ground up and optimizing specifically for agentic coding. The result is a model designed to genuinely see a UI and write production-quality code to interact with it. As autonomous agents become the norm, this kind of multimodal-native architecture isn’t a nice-to-have; it’s the baseline.

AI MODELS

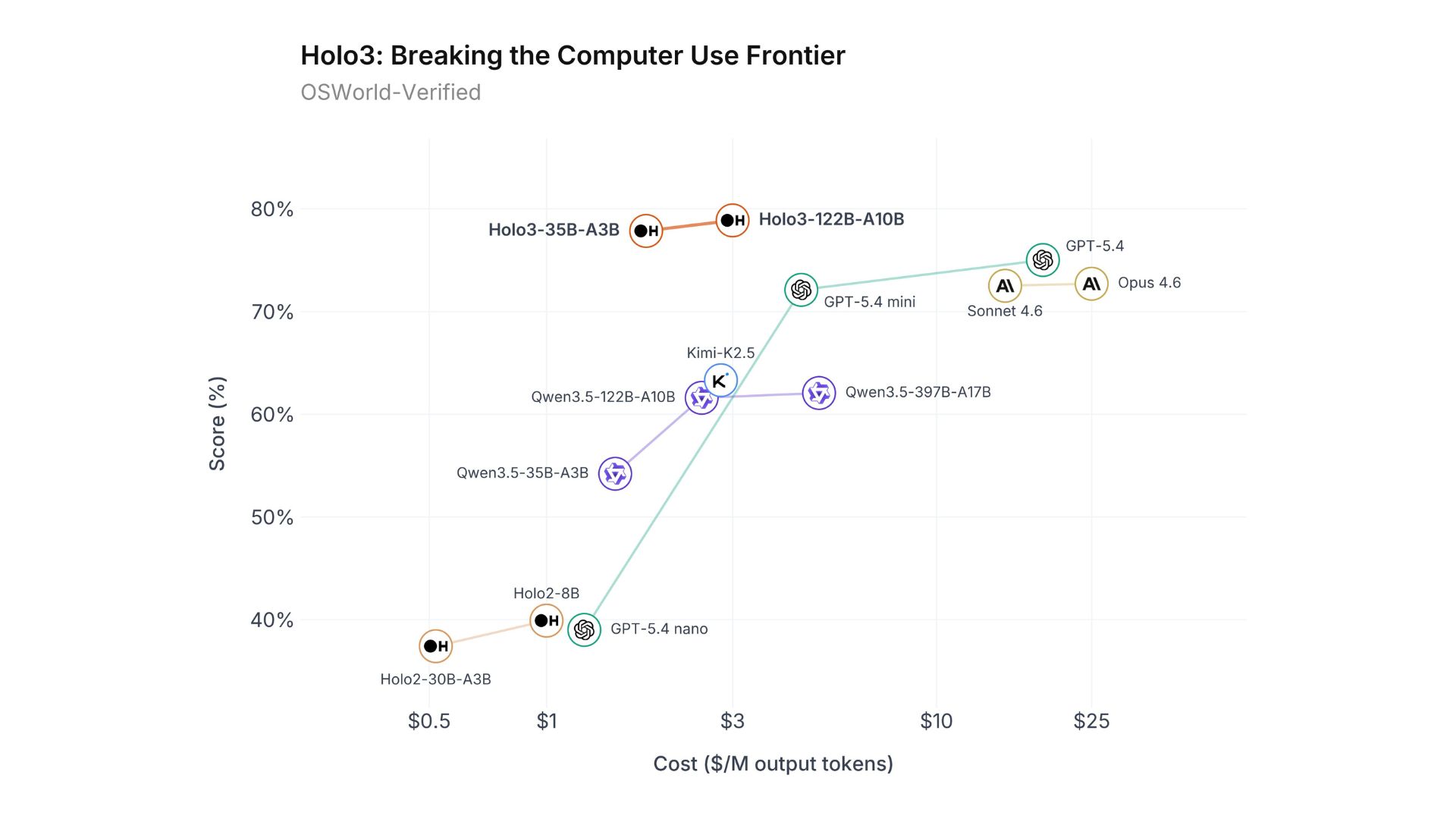

H Company has officially released the Holo3 series, setting new industry standards for GUI agents. Built specifically for computer usage automation across web, desktop, and mobile environments, the new Vision-Language Model is shattering benchmarks at a radically lower price point.

The flagship Holo3-122B-A10B achieved an impressive 78.85% on the OSWorld-Verified benchmark, outperforming mainstream giants like GPT-5.4 and Opus 4.6.

It accomplishes this state-of-the-art performance at just one-tenth of the inference cost of its leading competitors.

Built on a Qwen3.5 sparse Mixture of Experts (MoE) architecture, the model is highly efficient: the 122B version activates only 10B parameters during use, while the 35B version activates just 3B.

A fully open-source, lightweight version (Holo3-35B-A3B) is already available on Hugging Face under an Apache 2.0 license for free-tier users and local deployment.
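To put the sparse activation in perspective, here is a quick back-of-the-envelope calculation. It uses only the total and active parameter counts quoted above; the helper name `active_fraction` is just for illustration, and the result says nothing about real-world speed or cost.

```python
# Parameter counts in billions, as quoted above for the two Holo3 variants.
MODELS = {
    "Holo3-122B-A10B": (122, 10),
    "Holo3-35B-A3B": (35, 3),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that are active during use."""
    return active_b / total_b


for name, (total, active) in MODELS.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active")
# Holo3-122B-A10B: 8.2% of parameters active
# Holo3-35B-A3B: 8.6% of parameters active
```

In both variants, fewer than one in ten parameters does work at any given moment, which is where the efficiency claim comes from.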

The race for autonomous AI is shifting from conversational bots to actual computer-use agents that can click, scroll, and navigate digital environments just like human employees. By delivering state-of-the-art GUI automation at one-tenth the cost, and open-sourcing a highly capable 35B version, H Company is dramatically lowering the barrier to entry for enterprise automation and giving developers powerful new tools to build their own agentic workflows.

AI ECONOMY

OpenAI has officially closed a record-breaking $122 billion funding round. Anchored by massive investments from tech giants like Amazon, Nvidia, and SoftBank, the ChatGPT maker is building an unprecedented war chest to fund its physical infrastructure and compute needs.

The new round raises OpenAI's post-money valuation to a staggering $852 billion.

Amazon committed $50 billion (partially contingent on an IPO or reaching AGI), while Nvidia and SoftBank each invested $30 billion.

For the first time, OpenAI allowed retail participation, raising $3 billion from individual investors through bank channels, and will soon be included in ARK Invest ETFs.

The company is currently generating $2 billion in revenue per month and boasts over 900 million weekly active users, growing revenue 4x faster than early Internet-era giants like Meta and Alphabet.

Despite the massive revenue, OpenAI is still burning cash. To rein in costs ahead of a potential IPO, CEO Sam Altman recently shut down experimental consumer products like the Sora video app to focus entirely on enterprise adoption.

The AI boom is officially pivoting from consumer novelty to pure enterprise utility, which means the market demand for practical, step-by-step AI guides and tutorials is about to skyrocket as businesses scramble to actually integrate these tools. OpenAI shutting down flashy apps like Sora to focus on core revenue generators proves they are feeling the heat from competitors. As they barrel toward a public offering at an $852 billion valuation, they must prove to Wall Street that their technology isn't just a magic trick, but the foundational operating system for the global economy.

HOW TO AI

🗂️ How to Automate Bulk Video Asset Generation in Minutes

In this tutorial, you will learn how to build an automated workflow using Freepik Spaces that transforms a single text script into hundreds of ready-to-edit voiceovers and AI images in one run, saving you hours of tedious manual generation.

🧰 Who is This For

Content creators making videos at scale

Social media managers handling multiple accounts

Marketing teams creating ads in bulk

YouTubers and Shorts creators

STEP 1: Set the Foundation with Your Script

Head over to Freepik Spaces and click "New Space" to access their visual node canvas. It is similar to ComfyUI but much more beginner-friendly. Start by clicking the plus button to add a simple Text node and paste in your full video script. To keep your workspace organized, rename this node to "Script" so you can easily reference it later in the workflow.

STEP 2: Split the Script and Add Voiceover

Drag a line from your Script node and attach an Assistant node powered by a lightweight, fast model. Prompt the AI to act as a movie producer and split your text sentence by sentence.

Crucially, set the output format to "Export as a list" and connect it to a new List node. From this split list, branch off a Voiceover node (like ElevenLabs v2), select your preferred AI voice, and attach another List node to it. This automatically generates a separate audio file for every single sentence in your script.
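If you want to preview roughly how many clips your script will produce before you run anything, a local approximation like the one below can help. This is only a sketch: it uses a simple regex rule, not whatever the Assistant node does internally, and the function name `split_sentences` is made up for this example.

```python
import re


def split_sentences(script: str) -> list[str]:
    """Split after '.', '!' or '?' when followed by whitespace.

    Naive on purpose: abbreviations such as "Dr." will also trigger a split.
    """
    parts = re.split(r"(?<=[.!?])\s+", script.strip())
    return [p for p in parts if p]


script = "AI is changing video. Can it save you time? Let's find out!"
for number, sentence in enumerate(split_sentences(script), start=1):
    print(number, sentence)
# 1 AI is changing video.
# 2 Can it save you time?
# 3 Let's find out!
```

Each printed line corresponds to one voiceover file and one image later in the workflow, so the count is a quick sanity check on how many assets to expect.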

STEP 3: Auto-Generate Image Prompts and Visuals

Go back to your split text list and attach a second Assistant node. Instruct the AI to write a highly descriptive image prompt for each sentence, dictating your exact style (e.g., "2D vector illustration cartoon, medium shot, no text"). Export this as a list, then connect it to an Image Generator node. For the absolute best visual fidelity, select the Nano Banana 2 model.

If your story follows a specific person, you can upload a reference character (like a photo of Elon Musk) to an Asset node and link it directly to your image generator so the face stays consistent.
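The key idea in this step is that every prompt carries the same style instruction. As a rough illustration of that idea outside the canvas, the sketch below appends one fixed style string to each sentence. In the actual workflow the Assistant node writes richer prompts; the names `STYLE` and `build_image_prompts` and the `| style:` separator are assumptions made up for this example.

```python
# The example style from this step, reused for every prompt.
STYLE = "2D vector illustration cartoon, medium shot, no text"


def build_image_prompts(sentences: list[str], style: str = STYLE) -> list[str]:
    """Attach the same style suffix to each sentence so the visuals stay consistent."""
    return [f"{s.strip()} | style: {style}" for s in sentences if s.strip()]


for prompt in build_image_prompts(["A rocket lifts off.", "The crowd cheers."]):
    print(prompt)
```

Keeping the style in one place means you change it once and every image in the batch follows.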

STEP 4: Run, Download, and Assemble

Add one final List node to your image generator to catch the outputs. Double-check your connections, click the very first Script node, and hit Run. The AI will process the entire workflow in bulk. Once finished, click the download icon on your final List nodes to save the zipped audio and image files straight to your computer. Unzip the folders, drop the sequenced images and audio files into an editor like CapCut or Filmora, and your fully automated video is ready to export!
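Before importing into your editor, it can be worth checking that every image has a matching audio clip and that the files sort in the right order. The sketch below assumes the unzipped folders contain numbered files; the file names and the function names (`natural_key`, `pair_assets`) are hypothetical, so adjust them to whatever your download actually contains.

```python
import re
import tempfile
from pathlib import Path


def natural_key(path: Path) -> list:
    """Sort key that orders "image_10" after "image_2" instead of before it."""
    tokens = re.split(r"(\d+)", path.name.lower())
    # With a capturing group, odd positions are always the digit runs.
    return [int(t) if i % 2 else t for i, t in enumerate(tokens)]


def pair_assets(image_dir: str, audio_dir: str) -> list[tuple[Path, Path]]:
    """Pair the Nth image with the Nth audio file, failing loudly on a mismatch."""
    images = sorted((p for p in Path(image_dir).iterdir() if p.is_file()), key=natural_key)
    audio = sorted((p for p in Path(audio_dir).iterdir() if p.is_file()), key=natural_key)
    if len(images) != len(audio):
        raise ValueError(f"{len(images)} images but {len(audio)} audio files")
    return list(zip(images, audio))


if __name__ == "__main__":
    # Demo with throwaway files; the names below are made up for illustration.
    with tempfile.TemporaryDirectory() as tmp:
        img_dir, aud_dir = Path(tmp, "images"), Path(tmp, "audio")
        img_dir.mkdir()
        aud_dir.mkdir()
        for n in (1, 2, 10):
            (img_dir / f"image_{n}.png").touch()
            (aud_dir / f"audio_{n}.mp3").touch()
        for image, clip in pair_assets(str(img_dir), str(aud_dir)):
            print(image.name, "<->", clip.name)
```

A plain alphabetical sort would put file 10 before file 2, which is the usual reason scenes end up out of order in the timeline.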

SpaceX has filed confidentially for an IPO, putting it on track for a June listing; it could reportedly seek a valuation of $1.75T+ and raise ~$75B.

Secondary share marketplaces say OpenAI shares have fallen out of favor, in some cases becoming difficult to unload, as investors pivot quickly to Anthropic.

Anthropic is racing to contain the fallout after accidentally leaking Claude Code's source code, issuing a copyright takedown request to remove 8,000+ copies.

Google plans to release a screenless Fitbit band later this year; it will include basic features and require a paid subscription for more functionality.

🎥 Veo 3.1 Lite: Google’s cheaper video generation AI

🧠 Qwen3.5-Omni: Alibaba’s AI that understands text, images, audio, and video

💻 Holo3: Open-source AI agent that can use computers like a human

🔎 Model Council: Perplexity tool to query multiple AI models at once

THAT’S IT FOR TODAY

Thanks for making it to the end! I put my heart into every email I send, and I hope you are enjoying them. Let me know your thoughts so I can make the next one even better!

See you tomorrow :)

- Dr. Alvaro Cintas